The GeForce GTX 660 Ti Review, Feat. EVGA, Zotac, and Gigabyte

NVIDIA has a bit of a problem right now: their products are a bit too popular. Between the GK104-heavy desktop GeForce lineup, the GK104 based Tesla K10, and the GK107-heavy mobile GeForce lineup, NVIDIA is selling every 28nm chip they can make. As a result NVIDIA has been unable to expand their market presence as quickly as customers would like. For the desktop in particular this means NVIDIA has a very large, very noticeable hole in their product lineup between $100 and $400, which composes the mainstream and performance market segments.

Long-term NVIDIA needs more production capacity and a wider selection of GPUs to fill this hole, but in the meantime they can at least begin to fill it with what they have to work with. This brings us to today’s product launch: the GeForce GTX 660 Ti. With nothing between GK104 and GK107 at the moment, NVIDIA is pushing out one more desktop product based on GK104 in order to bring Kepler to the performance market. Serving as an outlet for further binned GK104 GPUs, the GTX 660 Ti will be launching today as NVIDIA’s $300 performance part.

Read More ...

Xiaomi Announces MIUI Phone Mi-Two with Quad Core Krait APQ8064 inside

About a year ago, I had the chance to play with a Xiaomi MIUI Mi-One handset while working on our Vellamo introduction and benchmarking story. The phone was based on a dual core 1.5 GHz MSM8260, and shocked me with its fit and finish, considering this was Xiaomi's first handset.

Today Xiaomi announced its Mi-Two handset, which includes a 1.5 GHz Qualcomm Snapdragon S4 Pro APQ8064 SoC and 2 GB of LPDDR2 RAM. Though there have been leaks and rumors about other phones in development based around APQ8064, this is to my knowledge the first handset officially announced with the quad core Krait (and Adreno 320) SoC inside. Xiaomi has priced the Mi-Two at 1999HKD, which works out to 315USD for comparison.

This is the same APQ8064 we've already done some performance analysis with on a mobile development platform. Xiaomi has been a pretty strong Qualcomm partner thus far, so it isn't surprising to see them announce an APQ8064 phone. I've put together a spec table with what I've gathered from Xiaomi's twitter and microblogging feeds from their announcement today.

Xiaomi Mi-One and Mi-Two Comparison

| Device | Mi-One | Mi-Two |
| --- | --- | --- |
| SoC | 1.5 GHz MSM8x60 (Dual core Scorpion + Adreno 220) | 1.5 GHz APQ8064 (Quad core Krait + Adreno 320) |
| RAM/NAND/Expansion | 1 GB LPDDR2, 4 GB NAND, microSD | 2 GB LPDDR2, 16 GB NAND, microSD |
| Display | 4.0" 854x480 | 4.3" IPS 1280x720 |
| Network | WCDMA (14.4) / GPRS / EDGE / (1x+EVDO if MSM8660) | DC-HSPA+ (42.2) / GPRS / EDGE |
| Dimensions | 125mm x 63mm x 11.9mm | 126mm x 62mm x 10.2mm |
| Camera | 8 MP | 8 MP 5P F/2.0 |

Xiaomi doesn't pull any punches and directly compares the Mi-Two to the competition from HTC and Samsung in their slides. I've gathered almost all of them up into a gallery for your perusal. It's great to see Xiaomi going into this level of detail about their handset.

Update: The Xiaomi Mi-Two page is now live and includes a bit more detail, including band support, which is WCDMA 850, 1900, 2100 alongside quad band GSM. There's also a small note about the Mi-Two having antenna diversity. The pages also note that the Mi-Two will go on sale in October for 1999HKD.

Xiaomi also made their slide deck from the presentation live after some time, and I've exported the slide deck pages to a gallery as well.

Sources: Xiaomi (microblogging), Twitter, Xiaomi (Mi-Two Page)

Read More ...

Samsung Series 7 NP700Z7C Review

We recently posted our first look at Dell’s new XPS 15, a Windows-focused laptop that borrowed more than a few design elements from the standard MacBook Pro 15. While there was plenty to like with the design and features, we’re still waiting for a final BIOS fix that will hopefully address the throttling issues many have experienced with the laptop. Today, we have a notebook from Samsung that’s very similar in many areas to the XPS 15, only it’s in a larger 17.3”-screen chassis and has slightly faster components.

First impressions of the Series 7 are definitely favorable, with a relatively thin but sturdy feeling chassis backed up by some great design choices. Given Samsung’s background in displays and related technology, we were very pleased to find a high quality matte 1080p LCD—interestingly enough, an LCD that’s not made by Samsung, though that’s not a big deal. Performance is primarily influenced by the CPU, GPU, and storage choices, and Samsung uses the standard one-two punch of a quad-core Ivy Bridge CPU with an NVIDIA Kepler GPU. Storage is the more interesting aspect: Samsung opts for a 1TB 5400RPM hard drive with an 8GB SSD and Diskeeper’s ExpressCache software providing caching of frequently used data. Let’s find out if the final result can succeed where other notebooks fall short, or if we end up with another series of compromises.

Read More ...

Intel Brings TRIM to RAID-0 SSD Arrays on 7-Series Motherboards, We Test It

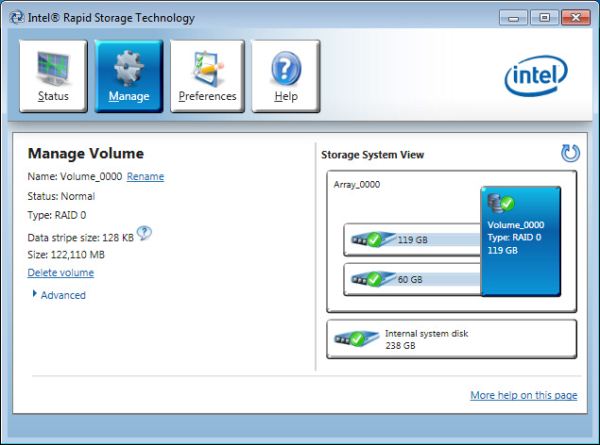

In an unusually terse statement, Intel officially confirmed that the ATA TRIM command now passes through to RAID-0 SSD arrays on some systems running Intel's RST (Rapid Storage Technology) RAID driver version 11.0 and newer. The feature is limited to Intel 7 series chipsets with RST RAID support and currently only works on Windows 7, although Windows 8 support is forthcoming.

As soon as I got confirmation from Intel, I fired up a testbed to confirm the claim. Before I get to the results, let's have a quick recap of what all of this means.

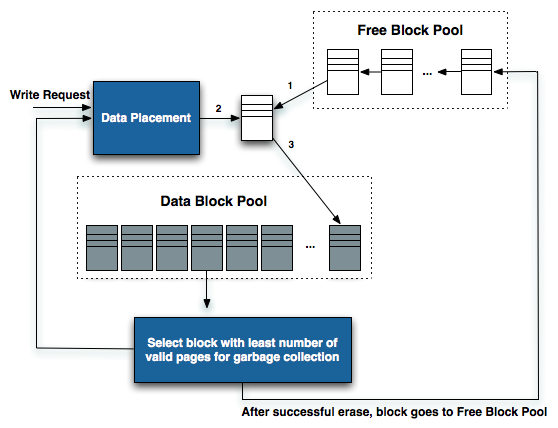

Why does TRIM Matter?

The building block of today's SSDs that we love so much is 2-bit-per-cell MLC NAND Flash. The reason that not all SSDs are created equal comes down to two important factors:

1) Each NAND cell has a finite lifespan (determined by the number of program and erase cycles), and

2) Although you can write to individual NAND pages, you can only erase large groups of pages (called blocks).

These two factors go hand in hand. If you only use 10% of your drive's capacity, neither factor is much of an issue. But if you're like most users and run your drive near capacity, difficulties can arise.

Your SSD controller has no knowledge of what pages contain valid vs. invalid (aka deleted) data. As a result, until your SSD is told to overwrite a particular address, it has to keep all data on the drive. This means that your SSD is eternally running out of free space. Thankfully all SSDs have some percentage of spare area set aside to ensure they never actually run out of free space before the end user occupies all available blocks, but how aggressively they use this spare area determines a lot.

Aggressive block recycling keeps performance high, at the expense of NAND endurance. Conservative block recycling preserves NAND lifespan, at the expense of performance. It's a delicate balance that a good SSD controller must achieve, and there are many tricks that can be employed to make things easier (e.g. idle time garbage collection). One way to make things easier on the controller is the use of the ATA TRIM command.

In a supported OS, with supported storage drivers and on an SSD with firmware support, the ATA TRIM command is passed from the host to the SSD whenever specific logical block addresses (LBAs) are no longer needed. In the case of Windows 7, a TRIM command is sent whenever a drive is formatted (all LBAs are TRIMed), whenever the recycle bin is emptied or whenever a file is shift + deleted (the LBAs occupied by that file are TRIMed).

The SSD doesn't have to take immediate action upon receiving the TRIM command for specific LBAs, but many do. By knowing what pages and blocks no longer contain valid data, the SSD controller can stop worrying about preserving that data and instead mark those blocks for garbage collection or recycling. This increases the effective free space from the controller's perspective, and caps a drive's performance degradation to the amount of space that's actively used vs. a continuing downward spiral until the worst case steady state is reached.
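To make the benefit concrete, here is a minimal toy model of a single NAND block. It is only a sketch: the `Block` class, the `trim` and `gc_copy_cost` helpers, the 128-page geometry and the size of the deleted file are all hypothetical and do not describe any real controller's firmware. It simply counts how many pages the controller would have to relocate before it could erase the block, with and without having been told which LBAs are stale.

```python
from dataclasses import dataclass, field

PAGES_PER_BLOCK = 128  # hypothetical geometry; real NAND varies


@dataclass
class Block:
    # LBAs the controller currently believes hold valid data in this block.
    valid_lbas: set = field(default_factory=set)


def trim(block: Block, lbas) -> None:
    """Host tells the drive that these LBAs no longer hold needed data."""
    block.valid_lbas -= set(lbas)


def gc_copy_cost(block: Block) -> int:
    """Pages that must be relocated before the block can be erased."""
    return len(block.valid_lbas)


# A full block: with no other information, every page looks valid.
block = Block(valid_lbas=set(range(PAGES_PER_BLOCK)))

# The filesystem deletes a (hypothetical) file covering 96 of those LBAs.
deleted = range(0, 96)

cost_without_trim = gc_copy_cost(block)  # drive was never told about the delete
trim(block, deleted)
cost_with_trim = gc_copy_cost(block)     # drive knows those pages are stale

print(cost_without_trim, cost_with_trim)  # 128 32
```

In this toy case the controller moves 32 pages instead of 128 to reclaim the same block, which is the "effective free space" gain described above.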

TRIM was pretty simple to implement on a single drive. These days all modern SSDs support it. It's only if you have one of the early Intel X25-M G1s that you're stuck without TRIM. Drives that preceded Intel's X25-M are also TRIMless. Nearly all subsequent drives we recommended either had TRIM support enabled through a firmware update or had it from the start.

Enabling TRIM on a RAID array required more effort, but only on the part of the storage driver. The SSD's firmware and OS remain unchanged. Intel eventually added TRIM support in its RAID drivers for RAID-1 (mirrored) arrays, but RAID-0 arrays were a different story entirely. There's a danger in getting rid of data in a RAID-0 array: if a page or a block that's actually necessary gets TRIMed on one drive, the entire array can be shot. There was talk of Intel enabling TRIM support on RAID-0 arrays as early as 2009, but given the cost of SSDs back then not many users were buying multiple drives to throw in an array.
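To see why the driver has to be careful, consider what a striped array does to an LBA range. The sketch below is not Intel's implementation; `split_trim_range`, the two-drive layout and the 256-sector (128KB with 512-byte sectors) stripe size are assumptions chosen for illustration. It shows how a single array-level TRIM range has to be translated into separate ranges on each member drive, and any off-by-one in that translation would discard live data.

```python
def split_trim_range(start_lba, count, n_drives=2, stripe_sectors=256):
    """Map an array-level LBA range onto per-drive (lba, count) extents.

    Assumes a plain RAID-0 layout where consecutive stripes rotate
    across the member drives in order.
    """
    extents = {drive: [] for drive in range(n_drives)}
    lba = start_lba
    remaining = count
    while remaining > 0:
        stripe_index = lba // stripe_sectors   # which stripe of the array
        offset = lba % stripe_sectors          # position inside that stripe
        drive = stripe_index % n_drives        # member drive holding the stripe
        drive_lba = (stripe_index // n_drives) * stripe_sectors + offset
        run = min(stripe_sectors - offset, remaining)
        extents[drive].append((drive_lba, run))
        lba += run
        remaining -= run
    return extents


# A 400-sector TRIM starting at array LBA 200 touches both drives.
print(split_trim_range(200, 400))
# {0: [(200, 56), (256, 88)], 1: [(0, 256)]}
```

A range that looks contiguous to the OS ends up as three extents across two drives, which is the bookkeeping the RAID driver has to get exactly right before it can safely forward TRIM.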

The cost of SSDs has dropped considerably in the past 4 years. The SSD market is far more mature than it used to be. Intel isn't as burdened with the responsibility of bringing a brand new controller and storage technology to market. With some spare time on its hands, Intel finally delivered a build of its RAID drivers that will pass the ATA TRIM command to RAID-0 arrays.

The Requirements

The requirements for RAID-0 TRIM support are as follows:

A 7-series motherboard (6-series chipsets are unfortunately not supported)

Intel's Rapid Storage Technology (RST) for RAID driver version 11.0 or greater (11.2 is the current release)

Windows 7 (Windows 8 support is forthcoming)

The lack of support for 6-series chipsets sounds a lot like a forced feature upgrade. Internally Intel likely justifies it by not wanting to validate on older hardware, but I don't see a reason why TRIM on RAID-0 wouldn't work on 6-series chipsets.

I am not sure if TRIM will work on RAID-10 arrays. I'm going to run some tests shortly to try and confirm. Update: I don't believe it works on RAID-10 arrays. I'm still running tests to confirm but so far it looks like the answer is no.

Testing TRIM on RAID-0

I set up a Z77 testbed using Intel's DZ77GA-70K motherboard. I configured the board for RAID operation and installed Windows 7 SP1 to a single boot SSD. I then took two Samsung SSD 830s and created a 128GB RAID-0 array (64GB + 64GB). I picked the 830 because it benefits tremendously from TRIM: when full and tortured with random writes, the 830's performance tanks. I secure erased both drives before creating the RAID array to ensure I started with a clean slate.

The 64GB Samsung SSD 830 is good for almost 500MB/s in sequential reads and under 160MB/s sequential writes. Two of them in RAID-0 should be able to deliver over 1GB/s of sequential read performance and over 300MB/s in sequential writes. A quick pass of HD Tach confirms just that:

Take a moment to marvel at just how much performance you can get out of two $90 SSDs.

For the control run I used Intel's 10.6 RST drivers (10.6.0.1022). I filled the array with sequential data, then randomly wrote 4KB files over the entire array at a queue depth of 32 for 30 minutes straight. I formatted the array (thus TRIMing all LBAs) and ran an HD Tach pass to see if performance recovered. Remember if the controller was told that all of its data was invalid, a sequential write pass would run at full speed since all data would be thrown away as it was being overwritten. Otherwise, the controller would try to preserve its drive full of garbage data as long as possible.

The 10.6 RST drivers don't pass TRIM through to RAID-0 arrays, and the results show us just that:

That's no surprise, but what happens if we do the same test using Intel's 11.2 RST drivers?

Here's what the pass looks like after the same fill, torture, TRIM, HD Tach routine with the 11.2 drivers installed:

Perfect. TRIM works as promised. Users running SSDs in RAID-0 on 7-series motherboards can enjoy the same performance-maintaining features that single-drive users have.

Bringing TRIM support to RAID-0 arrays provides users with a way of enjoying next-gen SSD performance sooner rather than later, without giving up an important feature. Pretty much all high-end SSDs are capped to 6Gbps limits when it comes to sequential IO. Modern SATA controllers deliver 6Gbps per port, allowing you to break through the 6Gbps limit by aggregating drives in RAID.
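The per-port ceiling is easy to work out. The snippet below is a back-of-the-envelope sketch, not a benchmark: the helper name `sata_payload_mb_s` is made up for illustration, and the only fact it relies on beyond the text is that SATA uses 8b/10b encoding, so 10 bits on the wire carry 8 bits of data. Protocol overhead is ignored, so real drives land somewhat below these figures.

```python
def sata_payload_mb_s(line_rate_gbps: int) -> float:
    """Upper bound on payload throughput for one SATA port, in MB/s.

    8b/10b encoding means only 8 of every 10 line bits are data.
    """
    line_bits_per_s = line_rate_gbps * 1_000_000_000
    data_bits_per_s = line_bits_per_s * 8 // 10
    return data_bits_per_s / 8 / 1_000_000


per_port = sata_payload_mb_s(6)   # one 6Gbps port
two_drive_array = 2 * per_port    # two ports striped in RAID-0

print(per_port, two_drive_array)  # 600.0 1200.0
```

A single drive can never exceed roughly 600MB/s on a 6Gbps port, while a two-drive RAID-0 array raises the interface ceiling to about 1.2GB/s.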

The only negative here is that Intel is only offering support on 7-series chipsets and not on previous hardware. That's great news for anyone who just moved to Ivy Bridge and has a RAID-0 array of SSDs, but not so great for everyone else. A lot of folks supported Intel over the past couple of years and Intel has had some amazing quarters as a result - I feel like the support should be rewarded. While I understand Intel's desire to limit its validation costs, I don't have to be happy about it.

For more information on how SSDs work, check out our last major article on the topic.

Read More ...

Live from Samsung's Galaxy Note 10.1 Launch

We just sat down at Samsung's Galaxy Note 10.1 US launch event. First announced at Mobile World Congress earlier this year, the Galaxy Note 10.1 brings Samsung's Note brand to a 10.1-inch tablet. The final version uses Samsung's 32nm Exynos 4 quad-core A9 SoC, combined with 2GB of RAM and all driving a 10.1-inch 1280 x 800 display.

Samsung got Moulin Rouge director Baz Luhrmann on stage to talk about his experience with the Galaxy Note 10.1. Ultimately Samsung is touching on a major issue with tablets today: the inability to enjoy the same level of productive multitasking we have on traditional PCs and notebooks.

The Note 10.1 brings multi-window support to Android courtesy of Samsung's own software customizations. The bundled S Pen helps on the creation side as well.

I do feel that Windows 8/RT based tablets will ultimately address a lot of what Samsung has been trying to do with Android over the past couple of years. The real question is whether or not Samsung will bring a lot of its Android experiments to Windows RT later this year.

The Galaxy Note 10.1 will be available for purchase starting tomorrow, priced at $499 for the 16GB WiFi model and $549 for the 32GB WiFi model (white and dark gray colors available). The tablet comes preloaded with Adobe's Photoshop Touch software that's optimized for use with the bundled S Pen.

Android Tablet Specification Comparison

| | ASUS Eee Pad Transformer Prime | ASUS Transformer Pad Infinity | Google Nexus 7 | Samsung Galaxy Note 10.1 |
| --- | --- | --- | --- | --- |
| Dimensions | 263 x 180.8 x 8.3mm | 263 x 180.6 x 8.4mm | 198.5 x 120 x 10.45mm | 262 x 180 x 8.9mm |
| Chassis | Aluminum | Aluminum + Plastic RF Strip | Plastic | Plastic |
| Display | 10.1-inch 1280 x 800 Super IPS+ | 10.1-inch 1920 x 1200 Super IPS+ | 7-inch 1280 x 800 IPS | 10.1-inch 1280 x 800 |
| Weight | 586g | 594g | 340g | 597g |
| Processor | 1.3GHz NVIDIA Tegra 3 (T30 - 4 x Cortex A9) | 1.6GHz NVIDIA Tegra 3 (T33 - 4 x Cortex A9) | 1.3GHz NVIDIA Tegra 3 (T30L - 4 x Cortex A9) | 1.4GHz Exynos 4 (4 x Cortex A9) |
| Memory | 1GB | 1GB DDR3-1600 | 1GB | 2GB |
| Storage | 32GB/64GB + microSD slot | 32/64GB + microSD slot | 8/16GB | 16/32GB + microSD slot |
| Battery | 25Whr | 25Whr | 16Whr | 26Whr |
| OS | Android 4.0 | Android 4.0 | Android 4.1 | Android 4.0 |
| Pricing | $499/$599 | $499/$599 | $199/$249 | $499/$549 |

Read More ...

Microsoft Reveals First Battery Life Specs for Windows RT Tablets

Yesterday Microsoft announced the final roster for ARM based Windows RT tablets expected to launch this year. We'll see Windows RT tablets from ASUS, Dell, Lenovo and Samsung, as well as Microsoft itself with Surface. Those who aren't listed either opted to go x86 exclusively (e.g. Acer) or simply won't have a Windows RT device in the first round. Microsoft is trying to exercise more control over its partners with Windows 8, with hopes of boosting the overall quality of launch devices. Powering these tablets will be NVIDIA's Tegra 3, Qualcomm's Snapdragon S4 or TI's OMAP 4 SoC. Thanks to ARMv7 ISA compatibility across all three SoCs, only a single build of Windows RT is needed to run across all Windows RT tablets.

The OS is final as of now, but there's still a lot of work being done on drivers. I don't expect to see anything resembling final drivers until early October. That being said, Microsoft did share a bit of early data about the first Windows RT tablets:

Windows RT Launch Tablet Specification Range

| | Min | Max | Apple iPad (2012) |
| --- | --- | --- | --- |
| HD Video Playback at 200 nits | 8 hours | 13 hours | 11.15 hours |
| Connected Standby | 320 hours | 409 hours | - |
| Battery Capacity | 25 Wh | 42 Wh | 42 Wh |
| Screen Size | 10.1-inches | 11.6-inches | 9.7-inches |
| Weight | 520 g | 1200 g | 652 g |
| Length | 263 mm | 298 mm | 241.2 mm |
| Width | 168.5 mm | 204 mm | 185.7 mm |
| Height | 8.35 mm | 15.6 mm | 9.4 mm |

It's disappointing to see a lack of commentary on battery life stressing more than just the video decode logic on the SoC and display. I'm also interested to see how Atom based Clovertrail Windows 8 tablets stack up against these Windows RT devices in terms of battery life and performance. If Atom based Windows 8 tablets can deliver a comparable experience there, and are comparably priced (which seems to be the case based on what I heard at Computex), then the choice between RT and Atom based Windows 8 tablets may boil down to whether free Office or legacy compatibility matter more to you.
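One way to read Microsoft's figures is as average platform power, which is just battery capacity divided by runtime. The sketch below uses only the numbers in the table; the helper `average_power_w` is illustrative. Note the big assumption: Microsoft doesn't say which battery goes with which runtime, so the Windows RT figures here are only the outer bounds implied by the ranges, not measurements of any specific tablet.

```python
def average_power_w(battery_wh: float, runtime_h: float) -> float:
    """Average power draw implied by draining a battery over a runtime."""
    return battery_wh / runtime_h


# iPad (2012): capacity and video runtime come from the same device.
ipad_video_w = average_power_w(42, 11.15)

# Windows RT range: capacity/runtime pairings are NOT given, so these are
# only the most and least favorable combinations the ranges allow.
rt_video_best_w = average_power_w(25, 13)   # smallest battery, longest runtime
rt_video_worst_w = average_power_w(42, 8)   # largest battery, shortest runtime

# Connected standby, same caveat, expressed in milliwatts.
rt_standby_best_mw = average_power_w(25, 409) * 1000
rt_standby_worst_mw = average_power_w(42, 320) * 1000

print(round(ipad_video_w, 2))                                    # 3.77
print(round(rt_video_best_w, 2), round(rt_video_worst_w, 2))     # 1.92 5.25
print(round(rt_standby_best_mw), round(rt_standby_worst_mw))     # 61 131
```

Even the bounding cases put video playback in the low single digits of watts, which is why a test that only exercises the video decode block and the display says little about heavier workloads.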

Read More ...

OWC Announces 480GB SSD Upgrade for MacBook Pro with Retina Display

In our review of the MacBook Pro with Retina Display I mentioned that the base $2199 configuration is near perfect, save for its 256GB SSD. With no room for internal storage expansion, you either have to be ok with only having 256GB of internal storage or pay the extra $500 for the BTO 512GB SSD upgrade.

Today, as expected, OWC announced its 480GB Mercury Aura Pro upgrade for the Retina MacBook Pro. The SandForce SF-2281 based SSD is priced at $579.99, which actually doesn't save you any money compared to buying the upgraded configuration directly from Apple. OWC's route does offer a couple of benefits however: 1) customers who order before September 30th will receive a USB 3.0 enclosure that will let you use your old 256GB SSD as an external drive (the enclosure costs $60 separately, and you still get to use your 256GB SSD), and 2) if you've already purchased a 256GB rMBP and later discover that you need more storage simply buying the $2799 model isn't an option, making the Mercury Aura Pro a viable option.

A quick search of 480GB SF-2281 based drives reveals that many are still priced over $500, although it's still possible to get some drives at lower price points. NAND pricing tends to be highly volatile, not to mention the benefits that having a direct relationship with a fab offers, both of which contribute to the wide spread.

The other thing to keep in mind with any SF-2281 based SSD is the difference in performance between compressible and incompressible data. If you're running FileVault on your rMBP you will see lower performance from anything SandForce based compared to the standard Samsung PM830 used in the stock rMBP.

All of that being said, it's great to see OWC offer an upgrade path for the MacBook Pro with Retina Display. Pre-orders for the Mercury Aura Pro are available immediately, with first shipments going out on or around August 21.

Read More ...

AMD Announces New, Higher Clocked Radeon HD 7950 with Boost

August is not typically a busy time of the year for the GPU industry. But this is quickly turning out to be anything but a normal August. Between professional and consumer graphics cards we have a busy week ahead.

Kicking things off on the consumer side today, AMD is announcing that they will be releasing a new Radeon HD 7950 with higher clockspeeds. The new 7950, to be called the Radeon HD 7950, is a revised version of the existing 7950 that is receiving the same performance enhancements that the 7970 received back in June, which were the basis of the Radeon HD 7970 GHz Edition.

Read More ...

The AMD FirePro W9000 & W8000 Review: Part 1

Despite the wide range of the GPU coverage we do here at AnandTech, from reading our articles you would be hard pressed to notice that AMD and NVIDIA have product lines beyond their consumer Radeon and GeForce brands. Consumer video cards compose the bulk of all video cards shipped, the bulk of revenue booked, and since they’re targeted at a very wide audience, the bulk of all marketing attention. But that doesn't mean they're the only video cards that matter.

Abutting the consumer market is the smaller, specialized, but equally important professional market that makes up the rest of the desktop GPU marketplace. Where consumers need gaming performance and video playback, professionals need compute performance, specialized rendering performance, and above all a level of product reliability and support beyond what consumers need.

This leads us to today’s product review: AMD’s FirePro W9000 video card. Having launched their Graphics Core Next architecture and the first GPUs based on it at the beginning of the year, AMD has been busy tuning and validating GCN for the professional graphics and compute markets, and that process has finally reached its end. This month AMD is launching a complete family of professional video cards, the FirePro W series, led by the flagship W9000.

Read More ...

The AnandTech Podcast: Episode 1

Last Friday, Brian Klug, Ian Cutress and I took an hour to discuss a lot of what was on our minds lately. At a high level we discussed building a new Ivy Bridge PC, Brian's picks for best Android smartphones on the market today and even talked a bit about the next iPhone. Go a bit deeper and we had discussions about SSDs, the changing PC landscape, Haswell and much more.

This marks our first ever official AnandTech podcast. The plan is to regularly do more of these and my hope is to be able to rope in all of the AnandTech writers to spend some time on the mic. We're getting an RSS feed for the podcast set up but for now here are direct links to the first episode (mp3 and m4a formats) as well as an embedded player here if you want to get started immediately. The total play time is just over 1 hour (01:01:05) and that will likely be our target going forward. As always, comments are welcome and appreciated. Let us know what you liked, hated and want to hear more of.

Read More ...

ASUS P8Z77-V Premium Review: A Bentley Among Motherboards

In the car industry, there is a large variety of cars to choose from - both the cheap and the expensive will get you from A to B, but in various amounts of luxury, with different engines and features under the hood. In the motherboard industry, by comparison, we have nothing like this - products are built to specifications and have to remain price competitive. Very rarely do we get a price competitive motherboard with a ton of features that also stretches the wallet in the same way a luxury car might do. For this analogy, we have the P8Z77-V Premium from ASUS to review, which comes in at $450 MSRP, but features Thunderbolt connectivity, dual Intel NICs, an onboard 32GB mSATA SSD, a PLX chip for 4-way PCIe devices, onboard WiFi, Bluetooth, and extra SATA/USB ports.

Read More ...

RightWare Launches Basemark GUI Free on Android Market - We Test It Out

Earlier this year RightWare launched a free version of the popular Basemark ES 2.0 benchmark on the Android market in the form of Basemark ES 2.0 Taiji Free. The benchmark contains the Taiji subtest of that suite and offers benchmarking functionality at full resolution for Android smartphones and tablets. Today, RightWare is doing much the same thing with its Basemark GUI benchmark, which we covered a year and a half ago, by releasing a slimmed down version called Basemark GUI Free on the Google Play Store.

Basemark GUI Free includes both the Vertex and Blend subtests, which are designed to test different areas of OpenGL ES 2.0 performance on Android devices. We're no stranger to this test, having seen it in original form a while ago. New and improved functionality in this version includes an offscreen version of each subtest which runs at 720p in addition to the native resolution run. The offscreen run displays small tiles of the currently rendered scene to verify that the scene is being drawn, just like GLBenchmark does. Results also get uploaded to RightWare's PowerBoard and presented (for example, see this HTC One X result page) alongside RightWare's other data. Check out the gallery for screenshots from the entire application. I should note that there's also a video of the full Basemark GUI run back in my original launch story.

The Vertex and Blend subtests haven't changed at all since we originally saw them, but I'm reproducing RightWare's description of the tests in full below to give an idea what the workload is for each part. In general, Basemark GUI is a much more challenging and modern workload compared to Basemark ES 2.0, though it ends up being heavy on its geometry payload thanks to the Vertex test.

Vertex Test:

The Vertex test loads vertex data from the disk, loads it into vertex buffers, displays the geometry and then discards unnecessary data after a frame has been rendered.

The Vertex test contains several load cycles of increasing complexity. A cycle contains a preprocessing step that is not scored, and the final scoring stage. The geometry is composed of 10 distinct city blocks which are each approximately 3000 triangles and 6000 vertices in size. In the preprocessing stage, a city layout is generated out of the blocks based on the complexity requirements of a specific cycle. The entire layout is then saved to disk into an indexed binary file. Although visually similar, each block has its own unique location in this file. Identical blocks are textured using the same texture which resides in the GPU memory throughout the test.

Once the actual scoring run starts, the city is moved towards the camera. Blocks that disappear beyond the plane of view are discarded from the memory. New blocks appearing at the visible horizon are read from the disk.

The test load is increased incrementally to a maximum load. The test load ranges from approximately 3k vertices per frame to 15k vertices per frame. The number of triangles on the screen ranges from 190k to 250k.

The scene geometry is rendered using a single texture material and fog. There are two lights. The OpenGL ES 2.0 shaders use per vertex lighting.

Blend Test:

The Blend test measures the efficiency of alpha blended rendering on the platform. Blending combines the incoming source fragment’s RGBA values with the RGBA values in the destination fragment stored in the frame buffer at the fragment’s x,y location. The active blend equation calculates the final RGBA value at the location from the input values. Overlay of graphical elements is a critical component of composition operations.

Overlay draw renders a large set of simple primitives. The number of overlain primitives will increase through the duration of the test up to a maximum specified count. The test is rendered twice: First with front-to-back ordering of the image planes and then with back-to-front ordering.

Results and Analysis:

I've gone ahead and run Basemark GUI Free on a fairly representative combination of devices, same as what I did in the GLBenchmark 2.5 piece, for comparison.

Here we have two Samsung SoCs paired with the ARM Mali-400 MP4 GPU: the original dual-core Exynos 4210 as well as the Exynos 4 Quad (4412) from the international Galaxy S 3 which we're reviewing. Tegra 3 makes an appearance in the HTC One X International, as does TI's OMAP 4460. The latter shows up in two configurations, one with the SGX 540 running at full 384MHz clocks in the Huawei Ascend P1 and another with the SGX 540 running at 307MHz in the Galaxy Nexus (CPU clocks are 20% lower as well in the Galaxy Nexus). Finally we have Qualcomm's dual-core Snapdragon S4 with its Adreno 225 GPU in the HTC One X and the US Galaxy S 3, and Adreno 205 inside the HTC One V which I tossed in as well.

In the offscreen tests, both Adreno and Tegra 3 do very well, posting impressive numbers. In addition, SGX540 does surprisingly well. What's surprising is to see the Exynos based SoCs not do as well here, until you realize that the Vertex test is (hence the name) strongly dominated by vertex data; it isn't the kind of shader compute test we're used to seeing with GLBenchmark. Recall that Mali-400 isn't a unified shader architecture, and explicitly features more pixel shader hardware than vertex shader hardware.

Because the end score (in FPS) for on and off screen is the average of both subtest scores, it's hard to be completely certain that the large vertex workload is the reason for Mali-400MP4's showing in the above tests, but it's a logical conclusion. Of course, the licensed version of Basemark GUI not on the Play Store does expose data from individual subtests.
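A quick illustration of why a single averaged number hides the cause. This is a sketch only: it assumes the score is a plain arithmetic mean of the two subtests, and the FPS values are hypothetical, not measured results from any device.

```python
def composite_fps(vertex_fps: float, blend_fps: float) -> float:
    """Arithmetic mean of the two subtest results (assumed averaging method)."""
    return (vertex_fps + blend_fps) / 2


# Hypothetical devices, not measured data.
vertex_limited = composite_fps(vertex_fps=20.0, blend_fps=60.0)
balanced = composite_fps(vertex_fps=40.0, blend_fps=40.0)

print(vertex_limited, balanced)  # 40.0 40.0
```

A GPU that struggles with geometry but flies through blending reports the same score as one that is middling at both, so the composite alone can't confirm which subtest is holding a device back.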

The native resolution tests largely just show that a number of the 720p devices are actually at vsync for a large part of both tests, though portions below 60 FPS end up bringing the average down slightly.

Unlike Basemark ES 2.0 Taiji Free, Basemark GUI Free includes that offscreen 720p test, which makes it a much more functional tool for end user comparison across devices. I'm told that future RightWare benchmarks in the Play Store will also include offscreen subtests, which is a great step in the right direction.

Source: Google Play Store (Basemark GUI Free), PowerBoard (HTC One X)

Read More ...

HP 2311xi IPS Monitor

HP managed to make the right choices with their 27” ZR2740w monitor, hitting a reasonable price point without sacrificing quality. Now HP has introduced their 2311xi monitor, a 23” IPS display with LED backlighting that is designed with value in mind. Even with their value target, they haven't cut back on features, with multiple inputs and a good amount of adjustments available inside of the display.

With a street price of $200, HP is aiming directly at value priced TN displays that have ruled the low-end of the LCD market for years. We finally might be starting to move to better panels, as the price of IPS continues to come down. Has HP managed to get enough quality into a $200 display that it can convince people to move from TN panels when looking for a value display, or have there been too many sacrifices made in order to hit this aggressive price point?

Read More ...

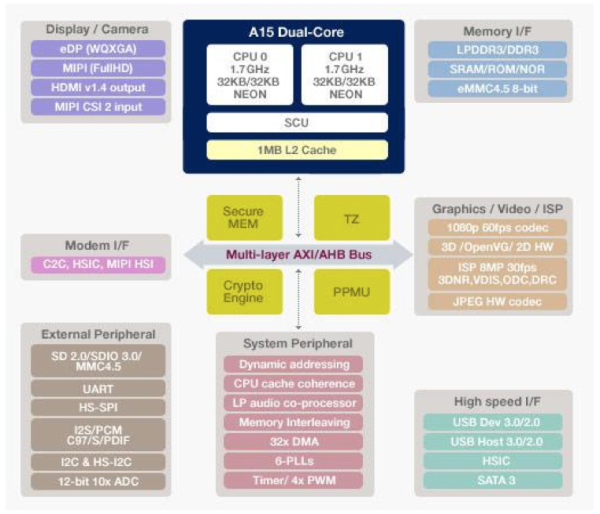

Samsung Announces A15/Mali-T604 Based Exynos 5 Dual

Yesterday Samsung officially announced what we all knew was coming: the Exynos 5 Dual. Due to start shipping sometime between the end of the year and early next year, the Exynos 5 Dual combines two ARM Cortex A15s with an ARM Mali-T604 GPU on a single 32nm HK+MG die from Samsung.

The CPU

Samsung's Exynos 5 Dual integrates two ARM Cortex A15 cores running at up to 1.7GHz with a shared 1MB L2 cache. The A15 is a 3-issue, Out of Order ARMv7 architecture with advanced SIMDv2 support. The memory interface side of the A15 should be much improved compared to the A9. The wider front end, beefed up internal data structures and higher clock speed will all contribute to a significant performance improvement over Cortex A9 based designs. It's even likely that we'll see A15 give Krait a run for its money, although Qualcomm is expected to introduce another revision of the Krait architecture sometime next year to improve IPC and overall performance. The A15 is also found in TI's OMAP 5. It will likely be used in NVIDIA's forthcoming Wayne SoC, as well as the Apple SoC driving the next iPad in 2013.

The Memory Interface

With its A5X, Apple introduced the first mobile SoC with a 128-bit wide memory controller. A look at the A5X die reveals four 32-bit LPDDR2 memory partitions. The four memory channels are routed to two LPDDR2 packages, each with two 32-bit interfaces (and two DRAM die) per package. Samsung, having manufactured the A5X for Apple, learned from the best. The Exynos 5 Dual is referred to as having a two-port LPDDR3-800 controller delivering 12.8GB/s of memory bandwidth. Samsung isn't specific about the width of each port, but the memory bandwidth figure tells us all we need to know. Each port is either 64 bits wide or the actual LPDDR3 data rate is 1600MHz. If I had to guess I would assume the latter. I don't know that the 32nm Exynos 5 Dual die is big enough to accommodate a 128-bit memory interface (you need to carefully balance IO pins with die size to avoid ballooning your die to accommodate a really wide interface). Either way the Exynos 5 Dual will equal Apple's A5X in terms of memory bandwidth.

TI's OMAP 5 features a 2x32-bit LPDDR2/DDR3 interface and is currently rated for data rates of up to 1066MHz, although I suspect it wouldn't be too much of a stretch to get DDR3-1600 memory working with the SoC. Qualcomm's Krait based Snapdragon S4 also has a dual-channel LPDDR2 interface, although once again there's no word on what the upper bound will be for supported memory frequencies.
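The 12.8GB/s figure can be sanity-checked with a little arithmetic. The sketch below is only an illustration: the function name is made up for this example, "MHz" is treated as millions of transfers per second, and the 800MT/s rate used for the A5X is an assumption inferred from the statement that the two SoCs end up with equal bandwidth.

```python
def peak_bandwidth_gb_s(ports: int, bits_per_port: int, mega_transfers_per_s: int) -> float:
    """Peak theoretical memory bandwidth in GB/s (1 GB = 10**9 bytes)."""
    bytes_per_transfer = ports * bits_per_port / 8
    return bytes_per_transfer * mega_transfers_per_s * 1e6 / 1e9


# Reading 1: two 64-bit ports at an 800MT/s data rate.
wide_and_slow = peak_bandwidth_gb_s(ports=2, bits_per_port=64, mega_transfers_per_s=800)

# Reading 2: two 32-bit ports at a 1600MT/s data rate.
narrow_and_fast = peak_bandwidth_gb_s(ports=2, bits_per_port=32, mega_transfers_per_s=1600)

# Apple A5X: four 32-bit LPDDR2 partitions (128 bits total).
# The 800MT/s rate is an assumption, chosen to match the "equal bandwidth" claim.
a5x = peak_bandwidth_gb_s(ports=4, bits_per_port=32, mega_transfers_per_s=800)

print(f"2 x 64-bit @ 800:  {wide_and_slow:.1f} GB/s")
print(f"2 x 32-bit @ 1600: {narrow_and_fast:.1f} GB/s")
print(f"A5X 4 x 32-bit @ 800: {a5x:.1f} GB/s")
```

Both readings of Samsung's spec land on the same number, which is why the bandwidth figure alone can't settle whether the interface is 64 or 128 bits wide.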

The GPU

Samsung's fondness for ARM designed GPU cores continues with the Exynos 5 Dual. The ARM Mali-T604 makes its debut in the Exynos 5 Dual in quad-core form. Mali-T604 is ARM's first unified shader architecture GPU, which should help it deliver more balanced performance regardless of workload (the current Mali-400 falls short in the latest polygon heavy workloads thanks to its unbalanced pixel/vertex shader count). Each core has been improved (there are now two ALU pipes per core vs. one in the Mali-400) and core clocks should be much higher thanks to Samsung's 32nm LP process. Add in gobs of memory bandwidth and you've got a recipe for a pretty powerful GPU. Depending on clock speeds I would expect peak performance north of the PowerVR SGX 543MP2, although I'm not sure if we'll see performance greater than the 543MP4. The Mali-T604 also brings expanded API support including DirectX 11 (feature level 9_3 though, not 11_0).

Video encode and decode are rated at 1080p60.

The Rest

To complete the package Samsung integrates USB 3.0, SATA 3, HDMI 1.4 and eDP interfaces into the Exynos 5 Dual. The latter supports display resolutions up to 2560 x 1600. The complete package is the new face of a modern day mobile system on a chip.

Samsung remains very aggressive on the SoC front. The real trick will be whether or not Samsung can convince other smartphone and tablet vendors (not just Samsung Mobile) to use its solution instead of something from TI, NVIDIA or Qualcomm. As long as Samsung Mobile ships successful devices the Samsung Semiconductor folks don't have to worry too much about growing marketshare, but long term it has to be a concern.

Read More ...

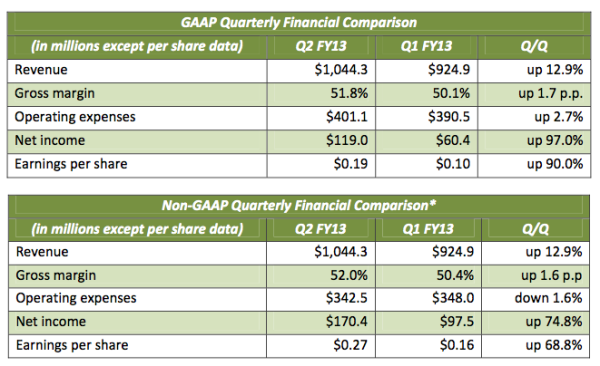

NVIDIA Q2 FY13 Earnings Report: $1.04B Revenue, Tegra Sales Recover

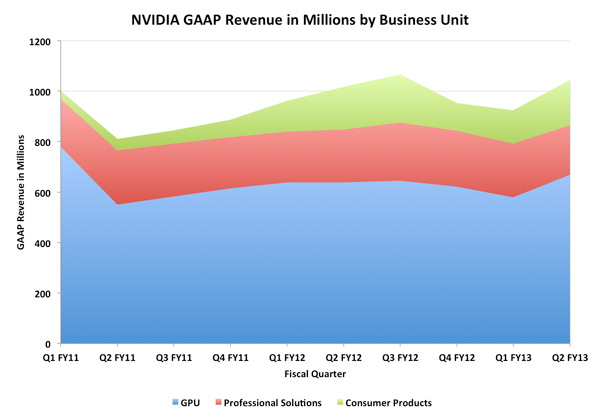

I don't normally comment on earnings calls, but this is something I've been talking a lot about in meetings offline so I decided to write up a short post. Yesterday NVIDIA announced its Q2 earnings. In short, they were good. Total revenue was up to $1.04 billion and gross margins were healthy at 51.8%. The more interesting numbers were in the breakdown of where all of that revenue came from. NVIDIA reports on revenue from three primary businesses: GPU, Professional Solutions and Consumer Products. The GPU business includes all consumer GPUs (notebook, desktop and memory - NVIDIA sells GPU + memory bundles to its partners) as well as license revenue from NVIDIA's cross licensing agreement with Intel. The Professional Solutions business is all things Quadro and Tesla. Finally the Consumer Products business is home to Tegra, Icera, game console revenue and embedded products.

I plotted revenue across all three businesses going back over the past 2.5 years:

The quarter that just ended was NVIDIA's second quarter for fiscal year 2013, which is why the quarter stamps along the x-axis look a bit forward looking at first glance. Two years ago the consumer products business was virtually nonexistent, with sales one quarter of what they are today. The growth in the Tegra space has been steady since then, but late last year it saw a bit of a fall off (Tegra 2 wasn't exactly competitive in the second half of 2011). NVIDIA boasted healthy growth this quarter thanks to some fairly high profile Tegra 3 design wins, but the overall revenue for the consumer products group is still below its $191.1M peak three quarters ago. There's still a lot of hope for the business and it's definitely healthier than it was a couple of years ago, but there's still a long way to go. Ultimately NVIDIA needs to produce designs competitive enough to last until the next design cycle, and not taper off early. Tegra 2 was late to market and thus its competitive position was understandable at the end of 2011. Tegra 3 did a lot better but the real hope is for its Cortex A15 based successor, Wayne.

As it stands, Tegra (and the rest of the consumer products group) is responsible for 17.2% of NVIDIA's total quarterly revenue. That's hardly insignificant. If more PCs move over to processor graphics in lieu of discrete GPUs, Tegra will need to grow even more to make up for that loss. I have been concerned about the margins NVIDIA gets out of its Tegra parts. OEMs in the ARM mobile space expect to pay below $30 for close to 100mm² of silicon. As a result, margins on a chip like Tegra are nowhere near what they are on a high-end GPU. The question is, are they competitive with entry level discrete GPUs? It's still too early to correlate increases in Tegra revenue with an impact on gross margins, but so far there's nothing to complain about:

The dip back in Q2 FY11 has to do with bumpgate, but if we look at Q3 FY12 and Q2 FY13, gross margins remained healthy during Tegra's two biggest quarters. This isn't enough data to conclude for sure that Tegra is selling at good margins, but it's promising for NVIDIA as a whole for now. Today NVIDIA's 28nm GPUs are selling at what appear to be very healthy margins. NVIDIA seems to have no problem selling nearly everything they make, at very good (for NVIDIA) prices. It's entirely possible that better than average GPU margins can help offset lower Tegra margins. It's also possible that both the GPU and consumer products businesses enjoy healthy margins.

At the end of the day, NVIDIA had a good quarter and Tegra remains an important part of the business. NVIDIA has a couple of high profile design wins with Tegra 3 in Windows 8 devices later this year, including Microsoft's Surface tablet. The real question is how good will Wayne be next year. What I've heard thus far is promising, but I don't have any hard data yet. I suspect we'll also really begin to see the impact of processor graphics integration next year with Haswell. NVIDIA doesn't break down GPU revenue by market segment so it's unclear how much of a loss the consumer products division would have to cover should more OEMs choose to leave discrete GPUs out of their systems.

The good news for NVIDIA is the high end (and quite profitable) GPU market is likely safe for the near future. The question remains how big of an impact we'll see at the entry level and lower midrange segments. A lot of that really depends on the success of Ultrabooks, Windows 8 tablets and other ultra small form factor machines that may not prioritize discrete GPUs.

For now it's clear the Consumer Products group and Tegra have legs. NVIDIA's margins and revenue have improved over the past two years but there's a lot of room to grow on the back of smartphones and tablets. NVIDIA has done surprisingly well with Tegra over the past couple of years, especially in the face of some very strong Qualcompetition. We'll find out soon enough if Wayne has what it takes to give NVIDIA's Consumer Products business its first $200M+ quarter.
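To put the $200M target in context, the figures quoted in this post can be combined in a rough back-of-the-envelope calculation. This is only a sketch: the $1.04B total is a rounded number, so the derived values are approximate, and the variable and function names are made up for this example.

```python
# Figures quoted in the text. The total is rounded, so results are approximate.
TOTAL_REVENUE_M = 1040.0   # Q2 FY13 total revenue, in millions of dollars
CONSUMER_SHARE = 0.172     # Consumer Products share of total revenue
PREVIOUS_PEAK_M = 191.1    # Consumer Products peak three quarters ago
TARGET_M = 200.0           # the "$200M+ quarter" milestone


def consumer_products_revenue(total_m: float, share: float) -> float:
    """Estimate segment revenue from total revenue and the segment's share."""
    return total_m * share


current_m = consumer_products_revenue(TOTAL_REVENUE_M, CONSUMER_SHARE)
gap_to_peak_m = PREVIOUS_PEAK_M - current_m
growth_to_target = TARGET_M / current_m - 1.0

print(f"Estimated Consumer Products revenue: ${current_m:.1f}M")
print(f"Shortfall versus previous peak:      ${gap_to_peak_m:.1f}M")
print(f"Growth needed to reach $200M:        {growth_to_target:.1%}")
```

By this estimate the group sits at roughly $179M for the quarter, so a $200M quarter would take growth of a bit under 12% from here.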

Read More ...

The Intel SSD 910 Review

The increase in compute density in servers over the past several years has significantly impacted form factors in the enterprise. Whereas you used to have to move to a 4U or 5U chassis if you wanted an 8-core machine, these days you can get there with just a single socket in a 1U or 2U chassis (or smaller if you go the blade route). The transition from 3.5" to 2.5" hard drives helped maintain IO performance as server chassis shrunk, but even then there's a limit to how many drives you can fit into a single enclosure. In network architectures that don't use a beefy SAN or still demand high-speed local storage, PCI Express SSDs are very attractive. As SSDs just need lots of PCB real estate, a 2.5" enclosure can be quite limiting. A PCIe card on the other hand can accommodate a good number of controllers, DRAM and NAND devices. Furthermore, unlike a single 2.5" SAS/SATA SSD, PCIe offers enough bandwidth headroom to scale performance with capacity. Instead of just adding more NAND to reach higher capacities you can add more controllers with the NAND, effectively increasing performance as you add capacity.

It took surprisingly long for Intel to dip its toe in the PCIe SSD waters. In fact, Intel's SSD behavior post-2008 has been a bit odd. To date Intel still hasn't released a 6Gbps SATA controller based on its own IP. Despite the lack of any modern Intel controllers, its SSDs based on third party controllers with Intel firmware continue to be some of the most dependable and compatible on the market today. Intel hasn't been the fastest for quite a while, but it's still among the best choices. It shouldn't be a surprise that the market eagerly anticipated Intel's SSD move into PCI Express. Read on for our full review of Intel's SSD 910, the company's first PCIe SSD.

Read More ...

Pulse Launches Pulse for Web

Pulse, one of the more popular and well-designed newsreaders, has finally launched a web-based version today. While previously only available on Android and iOS, the new web-based version is a blessing for Windows Phone users that don't yet have a native app. The interface is extremely slick and lets you customize the layout in a few different ways. The tiles even rearrange themselves automatically as you resize the browser window.

The website has prominent Internet Explorer branding, and Windows 8 & Internet Explorer 10 users can take advantage of some cool multi-touch gestures as well. Users with existing Pulse.me accounts can sign in immediately to find all their sources synced and ready to go. Alternatively, you can create a new account or sign in using Facebook credentials.

Pulse has almost exclusively been my favorite newsreader app for quite some time now and the new web-based version makes things even better.

Head on over to Pulse right now and check it out for yourself.

Read More ...

T-Mobile RF Engineer Does Reddit AMA - Answers a Few LTE Questions

In the past, carriers have been guarded if not purposely opaque about things like radio network planning, infrastructure rollouts, and other competitive details. With the smartphone boom well underway, many of those curtains are starting to fall as customers get more and more savvy about air interfaces and start asking the important questions. This morning on Reddit, a T-Mobile RF engineer started an AMA.

I obviously could not resist the temptation to ask a few questions myself, and got some interesting replies.

My questions:

- Can you talk briefly about how much traffic on GERAN you see from iPhone customers? How much of a catch-22 is that situation for moving that PCS spectrum dedicated to it over to WCDMA?

- In some markets it seems as though T-Mobile will be unable to run DC-HSDPA on AWS alongside any LTE because of lack of spectrum. Obviously multi-band carrier aggregation (WCDMA carriers on PCS and AWS) is a big part of that future, can you talk about the challenges involved there?

- Will T-Mobile deploy 3GPP Rel 8, or will you guys go right to Rel 10 for LTE?

- How much variance in WCDMA utilization do you see across markets? From your point of view, are caps and the end of unlimited data plans really backed up across the board, or just in a few markets? In my AZ markets (Tucson, Phoenix) where I do a lot of testing, I regularly find I have the sector to myself.

- Traditional PA at bottom architecture, or Remote Radio Head architecture for T-Mobile LTE?

- This is already somewhat obvious, but could you confirm/discuss T-Mobile LTE channel bandwidths?

The replies:

- We have about a million iPhones on our network now. 99.9% of their traffic is 2G/EDGE only right now, so obviously their load is dwarfed by everything else. The iPhone is a significant part of the modernization project. Once implemented, iPhones will work on U1900 at much higher speeds.

- Spectrum is always a limitation. With the software that exists now, you will not be able to do dual carrier split between AWS and PCS. That's supposed to be fixed in the future, but I don't know a timeline on it.

- It depends on a lot of things. In general, I think caps are stupid, especially the way that we handle them. Events (concerts, sporting events, malls @ Christmas) crush us though.

- We're going with remote radios as much as we can, for LTE, UMTS, and GSM.

- LTE will launch with a single 10 MHz channel, 5 up, 5 down.

Update: The T-Mobile AMA source has dropped a few other interesting tidbits, as the AMA has progressed. Among mundane things like equipment failures, copper theft and vandalism are a major source of problems for T-Mobile in some markets, along with the usual kind of things like animal encroachment. In addition, the source uses a Samsung Galaxy S 3, and noted the use of disguised cell sites.

Source: Reddit

Read More ...

Plextor Releases M5 Pro SSD: Say Hello to Marvell 88SS9187 and 19nm Toshiba NAND

This is an announcement we have been waiting for. In our Plextor M3 Pro and M5S reviews, we mentioned that the limits of Marvell's 88SS9174 controller have more or less been reached and it's time to switch to more powerful silicon, and that's exactly what Plextor has done now. Plextor's M5 Pro is the first SSD to publicly use Marvell's new 88SS9187 controller (OCZ's Vertex 4 and Agility 4 use Marvell silicon, but the specific silicon hasn't been confirmed). Marvell released the controller back in March, but as always, it takes time for manufacturers to design a product based around a new controller. Validation alone can take over a year if done thoroughly.

Not only is Plextor using a brand new controller, the M5 Pro is also the first consumer SSD to use Toshiba's 19nm Toggle-Mode MLC NAND.

| Plextor M5 Pro Specifications | | | |
| --- | --- | --- | --- |
| Capacity | 128GB | 256GB | 512GB |
| Controller | Marvell 88SS9187 | Marvell 88SS9187 | Marvell 88SS9187 |
| NAND | Toshiba 19nm Toggle-Mode MLC NAND | Toshiba 19nm Toggle-Mode MLC NAND | Toshiba 19nm Toggle-Mode MLC NAND |
| Sequential Read | 540MB/s | 540MB/s | 540MB/s |
| Sequential Write | 340MB/s | 450MB/s | 450MB/s |
| 4K Random Read | 91K IOPS | 94K IOPS | 94K IOPS |
| 4K Random Write | 82K IOPS | 86K IOPS | 86K IOPS |
| Cache (DDR3) | 256MB | 512MB | 768MB |
| Warranty | 5 years | 5 years | 5 years |
| Availability | Mid-August 2012 | Mid-August 2012 | Mid-August 2012 |

Pricing is to be announced but I would expect the M5 Pro to be priced similarly to what the M3 Pro is currently selling for. Exact availability is still unknown but Plextor is saying mid-August 2012 in the press release, so we should see this drive retailing in a few weeks. Our review sample is already on its way here so stay tuned for our review.

Read More ...

NVIDIA Announces Kepler-Based Quadro K5000 & Second-Generation Maximus

Since the initial launch of NVIDIA’s unusual chip stack for Kepler in late March, there has been quite a bit of speculation on how NVIDIA would flesh out their compute and professional product lines. Typically NVIDIA would launch a high-end GPU first (e.g. GF100), and use that to build their high-end consumer, professional, and compute products. Kepler of course threw a wrench into that pattern when the mid-tier GK104 became the first Kepler GPU to be launched.

The first half of that speculation came to rest in May, when NVIDIA announced their high-end Kepler GPU, GK110, and Tesla products based on both GK104 and GK110. NVIDIA’s solution to the unusual Kepler launch situation was to launch a specialized Tesla card based on GK104 in the summer, and then launch the more traditional GK110 based Tesla late in the year. This allowed NVIDIA to get Tesla K10 cards in the hands of some customers right away (primarily those with workloads suitable for GK104), rather than making all of their customers wait for Tesla K20 at the end of the year.

Meanwhile the second half of that speculation comes to an end today with the announcement of NVIDIA’s first Kepler-based Quadro card, the Quadro K5000.

Read More ...

AMD Introduces FirePro A300 & A320 APUs: Trinity for Graphics Workstations

At its Financial Analyst Day earlier this year, AMD laid out its vision for the future of the company. For the most part the strategy sounded a lot like what AMD was supposed to be doing all along, now with a strong commitment behind it. One major theme of the new AMD was agility. As a company much smaller than Intel, AMD should be able to move a lot quicker as a result. Unfortunately, in many cases that simply wasn't the case. The new executive team at AMD pledged to restore and leverage that lost agility, partially by releasing products targeted to specific geographic markets and verticals where they could be very competitive. Rather than just fight the big battle with Intel across a broad market, the new AMD will focus on areas where Intel either isn't present or is at a disadvantage and use its agility to quickly launch products to compete there.

One of the first examples of AMD's quick acting is in today's announcement of a new FirePro series of APUs. On the desktop and in mobile we have Trinity based APUs. The FirePro APUs are aimed at workstations that need professional quality graphics drivers but are fine with entry level GPU performance.

At a high level the FirePro APU makes sense. Just as processor graphics may eventually be good enough for many consumers, the same can be said about workstation users. Perhaps today is a bit too early for that crossover, but you have to start somewhere.

Going up against Intel in a market that does value graphics performance meets the agile AMD requirement, although it remains to be seen how much of a burden slower scalar x86 performance is in these workstation applications.

AMD's motivation behind doing a FirePro APU is simple: workstation/enterprise products can be sold at a premium compared to similarly sized desktop/notebook parts. Take the same Trinity die, pair it with FirePro drivers you've already built for the big discrete GPUs, and you can sell the combination for a little more money with very little additional investment. Anything AMD can do at this point to increase revenue derived from existing designs is a much needed effort.

There are two FirePro APUs being announced today, the A300 and A320:

| AMD FirePro APUs | | |
| --- | --- | --- |
| APU Model | A300 | A320 |
| “Piledriver” CPU Cores | 4 | 4 |
| CPU Clock (Base/Max) | 3.4GHz / 4.0GHz | 3.8GHz / 4.2GHz |
| L2 Cache (MB) | 4 | 4 |
| FirePro Cores | 384 | 384 |
| GPU Clock | 760MHz | 800MHz |
| TDP | 65W | 100W |

The competition for these FirePro APUs is Intel's Xeon with P4000 graphics (P4000 is the professional version of the HD 4000 we have on the desktop IVB parts). I haven't personally done any comparisons between AMD's FirePro drivers and what Intel gives you with the P4000, so I'll hold off on drawing any conclusions here, but at least from a performance standpoint AMD should have a significant advantage. Given AMD's long history of producing professional graphics drivers, I would not be surprised to see some advantages there as well.

AMD hasn't released any pricing information as the A300/A320 won't be available in the channel. The FirePro APUs are OEM only and are primarily targeted at markets like India where low cost, professional graphics workstations are apparently in high demand.

Read More ...

Apple Releases iOS 6 Beta 4, Removes YouTube.app

Earlier today, Apple released iOS 6 Beta 4 to iOS developers, moving the new iOS release one step closer to launch. The update is available for previous iOS 6 Beta users both over the air and as a standalone download from the developer portal as usual. The version bumps the build number up to 10A5376e, and updates the baseband version to 3.0.0 on the iPhone 4S.

In addition to the usual bugfixes and subtle changes to APIs, iOS 6 Beta 4 removes the Apple-built and maintained YouTube.app from the software bundle. The stock YouTube app has only seen a few updates since release with the original iPhone. The initial YouTube app's purpose was to serve as a gateway for the small catalog of MP4 and 3GP (MPEG-4 and H.264 encoded) format videos in the YouTube catalog, as opposed to FLV video. Much of this was motivated by the need to match YouTube's catalog to the video format compatible with Apple's hardware decode blocks. Since then, nearly every SoC's video decoder can handle H.264 well above even the 1080p YouTube format.

As time has gone on, playing back YouTube videos directly from the web in MP4 has become the new norm, with Google's improved YouTube web player for iOS being the most common workflow. Apple and Google both issued statements to The Verge, noting that Apple's license to distribute the YouTube app has ended, and that Google will build and distribute its own YouTube application through the App Store. The end result is more control for Google over the YouTube experience thanks to the decoupling of YouTube from the OS.

Another subtle change is the inclusion of a WiFi + Cellular data tab under cellular settings on iOS 6 B4. No doubt this enables applications to transact data over cellular when WiFi is spotty. iMessage for example on iOS transacts all data over cellular even when attached to WiFi.

Update: Some readers asked, and interestingly enough the upload to YouTube functionality from either Camera.app or Photos.app remains intact. I tested and was able to upload a video just fine. No doubt Google's YouTube application will extend or replace some of this remaining OS-level functionality.

Read More ...

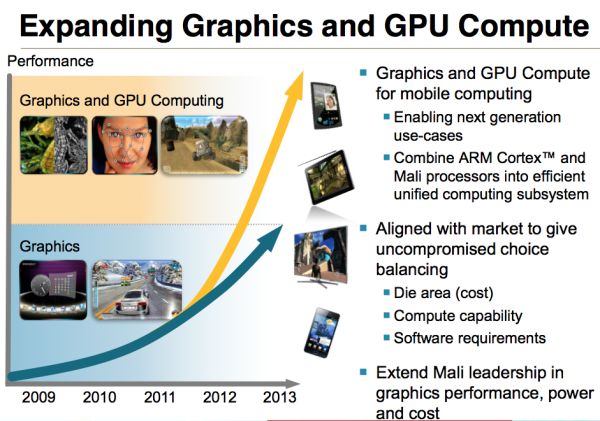

ARM Announces 8-core 2nd Gen Mali-T600 GPUs

In our discrete GPU reviews for the desktop we've often noticed the tradeoff between graphics and compute performance in GPU architectures. Generally speaking, when a GPU is designed for compute it tends to sacrifice graphics performance or vice versa. You can pursue both at the same time, but within a given die size the goals of good graphics and compute performance are usually at odds with one another.

Mobile GPUs aren't immune to making this tradeoff. As mobile devices become the computing platform of choice for many, the same difficult decisions about balancing GPU compute and graphics performance must be made.

ARM announced its strategy for dealing with the graphics/compute split earlier this year. In short, create two separate GPU lines: one in pursuit of great graphics performance, and one optimized for graphics and compute.

Today all of ARM's shipping GPUs fall on the blue, graphics trend line in the image above. The Mali-400 is the well known example, but the forthcoming Mali-450 (8-core Mali-400 with slight improvements to IPC) is also a graphics focused part.

The next-generation ARM GPU architecture, codenamed Midgard but productized as the Mali-T600 series, will have members optimized for graphics performance as well as high-end graphics/GPU compute performance.

The split looks like this:

The Mali-T600 series is ARM's first unified shader architecture. The parts on the left fall under the graphics roadmap, while the parts on the right are optimized for graphics and GPU compute. To make things even more confusing, the top part in each is actually a second generation T600 GPU, announced today.

What does the second generation of T600 give you? Higher IPC and higher clock speeds in the same die area thanks to some reworking of the architecture and support for ASTC (an optional OpenGL ES texture compression spec we talked about earlier today).

Both the T628 and T678 are eight-core parts. The primary difference between the two (and between graphics/GPU compute optimized ARM GPUs in general) is the composition of each shader core. The T628 features two ALUs, a LSU and texture unit per shader, while the T678 doubles up the ALUs per core.
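The shader core composition described above translates directly into total ALU counts. The sketch below is purely illustrative: the class names are hypothetical, and the four ALUs assigned to the compute-optimized eight-core part are derived from the "doubles up" description rather than from a published spec sheet.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ShaderCore:
    """One Mali-T600 shader core, as described in the text."""
    alus: int
    load_store_units: int = 1
    texture_units: int = 1


@dataclass(frozen=True)
class MaliGpu:
    name: str
    cores: int
    core: ShaderCore

    def total_alus(self) -> int:
        return self.cores * self.core.alus


# Graphics-optimized part: two ALUs per core.
t628 = MaliGpu(name="Mali-T628", cores=8, core=ShaderCore(alus=2))

# Compute-optimized part: ALUs per core doubled (assumed to mean four).
t678 = MaliGpu(name="Mali-T678", cores=8, core=ShaderCore(alus=4))

for gpu in (t628, t678):
    print(f"{gpu.name}: {gpu.cores} cores x {gpu.core.alus} ALUs = {gpu.total_alus()} ALUs")
```

With the same core count, the compute-optimized part ends up with twice the ALUs while the load/store and texture resources per core stay put, which is the graphics versus compute tradeoff in miniature.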

Long term you can expect high end smartphones to integrate cores from the graphics & compute optimized roadmap, while the mainstream and lower end smartphones will pick from the graphics-only roadmap. All of this sounds good on paper, however there's still the fact that we're talking about the second generation of Mali-T600 GPUs before the first generation has even shipped. We will see the first gen Mali-T600 parts before the end of the year, but there's still a lot of room for improvement in the way mobile GPUs and SoCs are launched...

Read More ...

Anand Chandrasekher Joins Qualcomm

Qualcomm has been on a hiring roll lately. Not only did it scoop up AMD's Eric Demers, but today Qualcomm announced that Anand Chandrasekher would be joining as CMO (Chief Marketing Officer). Anand's last major gig was as the Senior VP of Intel's Ultra Mobility Group, where he was responsible for getting Atom into ultra mobile devices (read: UMDs, netbooks, smartphones and tablets). He never quite got to see the latter half of that mission come to fruition as Intel let him go last year.

Obviously Qualcomm doesn't need any help getting its SoCs and baseband chips into smartphones and tablets, but what it does need is guidance on the marketing side. Intel and NVIDIA are both well known for being very aggressive with their marketing. Qualcomm has been getting better, but I suspect Anand's goal will be to bring the company up to parity with their rivals. With nearly 14 years of experience at Intel, including a few years in marketing roles, Anand should have some good knowledge to share with his Qualcomm brethren.

It's a smart move for Qualcomm. I've known Anand for over a decade and he definitely has the experience to help address one of Qualcomm's weaknesses. Now we just have to wait to see what Eric is going to cook up over there...

Read More ...

Khronos Announces OpenGL ES 3.0, OpenGL 4.3, ASTC Texture Compression, & CLU

As we approach August the technical conference season for graphics is finally reaching its apex. NVIDIA and AMD held their events in May and June respectively, and this week the two of them along with the other major players in the GPU space are coming together for the graphics industry’s marquee event: SIGGRAPH 2012. Overall we’ll see a number of announcements this week, and kicking things off will be the Khronos Group.

The Khronos Group is the industry consortium responsible for OpenGL, OpenCL, WebGL, and other open graphics/multimedia standards and APIs. At SIGGRAPH 2012 they are making several announcements. Chief among these are the release of the OpenGL ES 3.0 specification, the OpenGL 4.3 specification, the Adaptive Scalable Texture Compression Extension, and the CLU utility for OpenCL. We'll be diving into each of these announcements, breaking them down into the major features and how they're going to affect their respective projects.

Read More ...
