Synology DS2015xs Review: An ARM-based 10G NAS

Synology is one of the most popular COTS (commercial off-the-shelf) NAS vendors in the SMB / SOHO market segment. The NAS models they introduced in 2014 were mostly based on Intel Rangeley (the Atom-based SoCs targeting the storage and communication market). However, in December, they sprang a surprise by launching the DS2015xs, an ARM-based model with dual 10GbE ports. We were intrigued by the combination of a quad-core Cortex-A15 and 10GbE I/O and promptly asked Synology for a review sample. Do ARM SoCs have the necessary performance to warrant a place in the high-performance xs series? More importantly, is the Annapurna Labs AL514 SoC capable of keeping the 10G ports saturated during operation? Read on for our review of the Synology DS2015xs to find out.

Read More ...

LG Replaces Android Wear: Adds LTE, GPS and NFC to the Watch Urbane

Today LG pre-announced significant additions to their high-end wearable, the LG Watch Urbane, via a new edition called the LG Watch Urbane LTE. Both devices will officially launch at Mobile World Congress next week. From a feature standpoint, the LG Watch Urbane LTE adds more wireless functionality via the inclusion of LTE, VoLTE (not 3G voice), GPS, and NFC.

These additions dramatically expand LG's ability to cover the movement use case of wearables and place the Watch Urbane LTE alongside the Samsung Gear S as the only devices to include cellular functionality. This provides a safety net when making a fitness excursion, as emergency calls are now possible. LG had this use case in mind specifically, as they included a single key press to initiate an emergency call. Additionally, the inclusion of NFC enables mobile payments, although LG has not yet provided details on how this works. Finally, LG has dramatically increased the battery size from 410mAh to 700mAh, which will help immensely with powering the LTE radio. I should note this is the largest battery I have seen to date in a wearable.

From an industry perspective, the most interesting part of this announcement is that LG has ditched Android Wear which was used for the non-LTE edition of the Watch Urbane. As Android Wear does not support NFC payments or cellular, this was a necessity to bring the Watch Urbane LTE to market, but it highlights that device makers like LG and Samsung are not waiting for Google to add functionality. Google needs to improve the pace of Android Wear updates if they want to keep their partners using the platform.

| | LG Watch Urbane LTE | LG Watch Urbane |
|---|---|---|
| SoC | Qualcomm Snapdragon 400 1.2GHz | Qualcomm Snapdragon 400 1.2GHz |
| Memory | 1GB LPDDR3 | 512MB LPDDR3 |
| Display | 1.3" plastic OLED (320 x 320, 245ppi) | 1.3" plastic OLED (320 x 320, 245ppi) |
| Storage | 4GB eMMC | 4GB eMMC |
| Wireless | LTE, NFC, Bluetooth 4.0 | Bluetooth 4.0 |
| Ingress protection | IP67 | IP67 |
| Battery | 700mAh | 410mAh |
| Sensors | Gyro, accelerometer, compass, barometer, heart rate, GPS | Gyro, accelerometer, compass, barometer, heart rate |
| I/O | Touch screen, buttons, speaker, microphone | Touch screen, buttons, microphone |
| OS | "LG Wearable Platform Operating System" | Android Wear |

Update: It appears the watch might not run a customized Android distribution but rather something more custom. LG describes it as "LG Wearable Platform Operating System". Other outlets are reporting this as WebOS derived, but nothing has been confirmed by LG. WebOS would be impressive considering we haven't seen a version including VoLTE.

Price and availability remain unknown. Look for additional details as Mobile World Congress 2015 begins next week.

Read More ...

DirectX 12 Performance Preview, Part 3: Star Swarm & Intel's iGPUs

We’re back once again for the 3rd and likely final part to our evolving series previewing the performance of DirectX 12. After taking an initial look at discrete GPUs from NVIDIA and AMD in part 1, and then looking at AMD’s integrated GPUs in part 2, today we’ll be taking a much requested look at the performance of Intel’s integrated GPUs. Does Intel benefit from DirectX 12 in the same way the dGPUs and AMD’s iGPU have? And where does Intel’s most powerful Haswell GPU configuration, Iris Pro (GT3e) stack up? Let’s find out.

As our regular readers may recall, when we were initially given early access to WDDM 2.0 drivers and a DirectX 12 version of Star Swarm, it only included drivers for AMD and NVIDIA GPUs. Those drivers in turn only supported Kepler and newer on the NVIDIA side and GCN 1.1 and newer on the AMD side, which is why we haven’t yet been able to look at older AMD or NVIDIA cards, or for that matter any Intel iGPUs. However as of late last week that changed when Microsoft began releasing WDDM 2.0 drivers for all 3 vendors through Windows Update on Windows 10, enabling early DirectX 12 functionality on many supported products.

With Intel WDDM 2.0 drivers now in hand, we’re able to take a look at how Intel’s iGPUs are affected in this early benchmark. Carrying driver version 10.18.15.4098, these drivers enable DirectX 12 functionality on Gen 7.5 (Haswell) and newer GPUs, with Gen 7.5 being the oldest Intel GPU generation that will support DirectX 12.

Today we’ll be looking at all 3 Haswell GPU tiers, GT1, GT2, and GT3e. We also have our AMD A10 and A8 results from earlier this month to use as a point of comparison (though please note that this combination of Mantle + SS is still non-functional on AMD APUs). With that said, before starting we’d like to once again remind everyone that this is an early driver on an early OS running an early DirectX 12 application, so everything here is subject to change. Furthermore Star Swarm itself is a very directed benchmark designed primarily to showcase batch counts, so what we see here should not be considered a well-rounded look at the benefits of DirectX 12. At the end of the day this is a test that more closely measures potential than real-world performance.

| Component | Configuration |
|---|---|
| CPU | AMD A10-7800, AMD A8-7600, Intel Core i3-4330, Intel Core i5-4690, Intel Core i7-4770R, Intel Core i7-4790K |
| Motherboard | GIGABYTE F2A88X-UP4 for AMD; ASUS Maximus VII Impact for Intel LGA-1150; Zotac ZBOX EI750 Plus for Intel BGA |
| Power Supply | Rosewill Silent Night 500W Platinum |
| Hard Disk | OCZ Vertex 3 256GB OS SSD |
| Memory | G.Skill 2x4GB DDR3-2133 9-11-10 for AMD; G.Skill 2x4GB DDR3-1866 9-10-9 at 1600 for Intel |
| Video Cards | AMD APU Integrated; Intel CPU Integrated |
| Video Drivers | AMD Catalyst 15.200 Beta; Intel 10.18.15.4098 |
| OS | Windows 10 Technical Preview 2 (Build 9926) |

Since we’re looking at fully integrated products this time around, we’ll invert our usual order and start with our GPU-centric view first before taking a CPU-centric look.

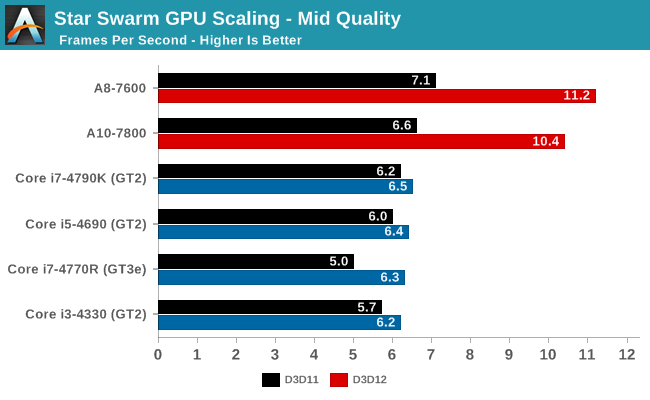

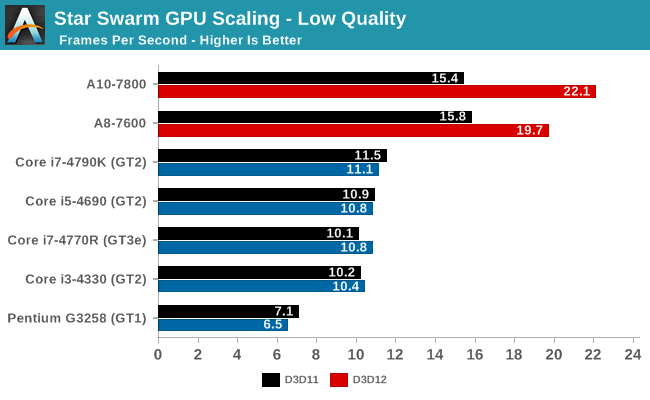

As Star Swarm was originally created to demonstrate performance on discrete GPUs, these integrated GPUs do not perform well. Even at low settings nothing cracks 30fps on DirectX 12. None the less there are a few patterns here that can help us understand what’s going on.

Right off the bat then there are two very apparent patterns, one of which is expected and one which caught us by surprise. At a high level, both AMD APUs outperform our collection of Intel processors here, and this is to be expected. AMD has invested heavily in iGPU performance across their entire lineup, where most Intel desktop SKUs come with the mid-tier GT2 GPU.

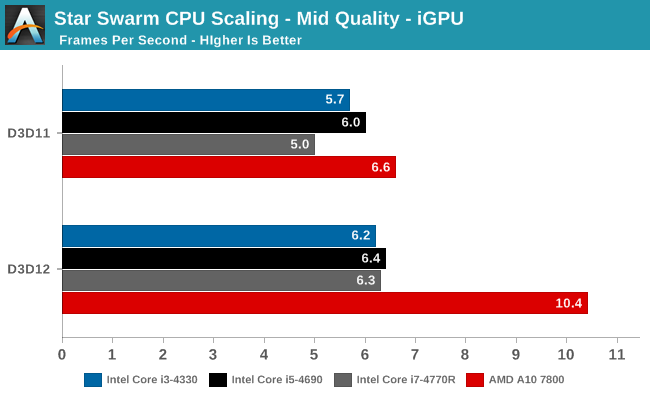

However what’s very much not expected is the ranking of the various Intel processors. Despite having all 3 Intel GPU tiers represented here, the performance between the Intel GPUs is relatively close, and this includes the Core i7-4770R and its GT3e GPU. GT3e’s performance here immediately raises some red flags – under normal circumstances it substantially outperforms GT2 – and we need to tackle this issue first before we can discuss any other aspects of Intel’s performance.

As long-time readers may recall from our look at Intel’s Gen 7.5 GPU architecture, Intel scales up from GT1 through GT3 by both duplicating the EU/texture unit blocks (the subslice) and the ROP/L3 blocks (the slice common). In the case of GT3/GT3e, it has twice as many slices as GT2 and consequently by most metrics is twice the GPU that GT2 is, with GT3e’s Crystal Well eDRAM providing an extra bandwidth kick. Immediately then there is an issue, since in none of our benchmarks does the GT3e equipped 4770R surpass any of the GT2 equipped SKUs.

The explanation, we believe, lies in the one part of an Intel GPU that doesn’t get duplicated in GT3e, which is the front-end, or as Intel calls it the Global Assets. Regardless of which GPU configuration we’re looking at – GT1, GT2, or GT3e – all Gen 7.5 configurations share what’s essentially the same front-end, which means front-end performance doesn’t scale up with the larger GPUs beyond any minor differences in GPU clockspeed.

Star Swarm for its part is no average workload, as it emphasizes batch counts (draw calls) above all else. Even though the low quality setting has much smaller batch counts than the extreme setting we use on the dGPUs, it’s still over 20K batches per frame, a far higher number than any game would use if it was trying to be playable on an iGPU. Consequently based on our GT2 results and especially our GT3e result, we believe that Star Swarm is actually exposing the batch processing limits of Gen 7.5’s front-end, with the front-end bottlenecking performance once the CPU bottleneck is scaled back by the introduction of DirectX 12.

The result of this is that while the Intel iGPUs are technically GPU limited under DirectX 12, they’re not GPU limited in a traditional sense; they’re not limited by shading performance, or memory bandwidth, or ROP throughput. This means that although Intel’s iGPUs benefit from DirectX 12, it’s not by nearly as much as AMD’s iGPUs did, never mind the dGPUs.

Update: Between when this story was written and when it was published, we heard back from Intel on our results. We are publishing our results as-is, but Intel believes that the lack of scaling with GT3e stems in part from a lack of optimizations for lower performance GPUs in our build of Star Swarm, which is from an October branch of Oxide's code base. Intel tells us that newer builds do show much better overall performance and more consistent gains for the GT3e, all the while the Oxide engine itself is in flux with its continued development. In any case this reiterates the fact that we're still looking at early code here from all parties and performance is subject to change, especially on a test as directed/non-standard as Star Swarm.

So how much does Intel actually benefit from DirectX 12 under Star Swarm? As one would reasonably expect, with their desktop processors configured for very high CPU performance and much more limited GPU performance, Intel is the least CPU bottlenecked in the first place. That said, if we take a look at the mid quality results in particular, what we find is that Intel still benefits from DX12. The 4770R is especially important here, as it’s a relatively weaker CPU (base frequency 3.2GHz) coupled with a more powerful GPU. It starts out trailing the other Core processors in DX11, only to reach parity with them under DX12 when the bottleneck shifts from the CPU to the GPU front-end. The performance gain is only 25% – and at framerates in the single digits – but conceptually it shows that even Intel can benefit from DX12. Meanwhile the other Intel processors see much smaller, but none the less consistent gains, indicating that there’s at least a trivial benefit from DX12.

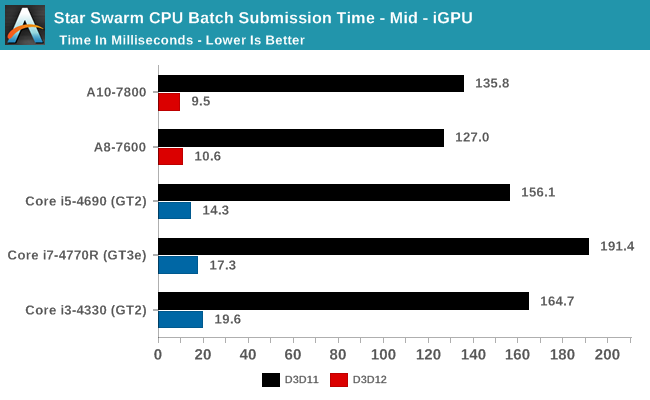

Taking a look under the hood at our batch submission times, we can much more clearly see the CPU usage benefits of DX12. The Intel CPUs actually start at a notable deficit here under DX11, with batch submission times worse than the AMD APUs and their relatively weaker CPUs, and 4770R in particular taking nearly 200ms to submit a batch. Enabling DX12 in turn causes the same dramatic reduction in batch submission times we’ve seen elsewhere, with Intel’s batch submission times dropping to below 20ms. Somewhat surprisingly Intel’s times are still worse than AMD’s, though at this point we’re so badly GPU limited on all platforms that it’s largely academic. None the less it shows that Intel may have room for future improvements.
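As a rough illustration of why these submission times matter, the sketch below treats the quoted batch submission time as the CPU cost of one frame, which is an assumption on our part. Under that model the frame rate can never exceed 1000 divided by the submission time in milliseconds, no matter how fast the GPU is. The function name is illustrative, the inputs are the approximate figures from the paragraph above rather than measured values, and any overlap between CPU and GPU work is ignored.

```python
def fps_ceiling(submission_ms: float) -> float:
    """Upper bound on frame rate if each frame costs submission_ms of CPU time."""
    if submission_ms <= 0:
        raise ValueError("submission time must be positive")
    return 1000.0 / submission_ms


# Approximate figures quoted in the text (assumed here to be per-frame costs).
DX11_SUBMISSION_MS = 200.0  # "nearly 200ms" on the 4770R under DX11
DX12_SUBMISSION_MS = 20.0   # "below 20ms" under DX12

for api, ms in (("DX11", DX11_SUBMISSION_MS), ("DX12", DX12_SUBMISSION_MS)):
    print(f"{api}: {ms:.0f} ms of submission per frame -> at most {fps_ceiling(ms):.0f} fps")
```

In this simplified view the CPU-side ceiling rises roughly tenfold under DX12, which would explain why the limit then moves to the GPU.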

With this data in hand, we can finally make better sense of the results we’re seeing today. Just as with AMD and NVIDIA, using DirectX 12 results in a noticeable and dramatic reduction in batch submission times for Intel’s iGPUs. However in the case of Star Swarm the batch counts are so high that it appears GT2 and GT3e are bottlenecked by their GPU front-ends, and as a result the gains from enabling DX12 are very limited. In fact at this point we’re probably at the limits of Star Swarm’s usefulness, since it’s meant more for discrete GPUs.

The end result though is that one way or another Intel ends up shifting from being CPU limited to GPU limited under DX12. And with a weaker GPU than similar AMD parts, performance tops out much sooner. That said, it’s worth pointing out that we are looking at desktop parts here, where Intel goes heavy on the CPU and light on the GPU; in mobile parts where Intel’s CPU and GPU configurations are less lopsided, it’s likely that Intel would benefit more than they do on the desktop, though again probably not as much as AMD has.

As for real world games, just as with our other GPUs we’re in a wait-and-see situation. An actual game designed to be playable on Intel’s iGPUs is very unlikely to push as many batch calls as Star Swarm, so the front-end bottleneck and GT3e’s poor performance are similarly unlikely to recur. But at the same time with Intel generally being the least CPU bottlenecked in the first place, their overall gains under DX12 may be the smallest, particularly when exploiting the API’s vastly improved draw call performance.

In the meantime GDC 2015 will be taking place next week, where we will be hearing more from Microsoft and its GPU partners about DirectX 12. With last year’s unveiling being an early teaser of the API, the sessions this year will be focusing on helping programmers ramp up for its formal launch later this year, and with any luck we’ll find the final details on feature level 12_0 and whether any current GPUs are 12_0 compliant. Along with more on OpenGL Next (aka glNext), it should make for an exciting show for GPU events.

Read More ...

Western Digital My Cloud NAS Updates Target Prosumers and Small Businesses

Western Digital is no stranger to the NAS market. Their Sentinel series units (based on Windows Storage Server) have targeted business users for quite some time now. The My Cloud consumer series (1- and 2-bay NAS units based on a custom embedded Linux platform) introduced a few years back targets home users. These two product lines cover the two extreme ends of the market for NAS units costing up to $5000. In late 2013, Western Digital launched the My Cloud Expert series with the introduction of the 4-bay WD My Cloud EX4. This was followed by a 2-bay version in March 2014.

It has been almost a year since Western Digital last updated their hardware offerings, but the firmware and user-experience improvements have been coming in periodically (indicating long-term commitment to this market segment). Today, two sets of products are being introduced to cover the whole range of this NAS market segment:

- Updated EXpert Series (EX2100 and EX4100)

- New Business Series (DL2100 and DL4100)

From an external viewpoint, all the NAS units being introduced today come with dual GbE ports and a couple of USB 3.0 ports. Similar to previous generation EX units, the new ones also come with two power adapter inputs.

The EX2100 and EX4100 are among the first NAS units based on the new Marvell Riverwood platform (ARMADA 385 / 388). These are dual-core Cortex-A9-based SoCs running at up to 1.6 GHz. The 2-bay unit comes with the ARMADA 385 and has 1 GB of RAM, while the 4-bay unit sports the ARMADA 388 and has 2 GB of RAM. The main difference between the ARMADA 385 and 388 is the presence of two vs. four native SATA ports. We will look more into the SoC platform in our dedicated review.

The DL2100 and DL4100 are based on the Intel Rangeley SoCs. Based on the Silvermont Atom cores, these SoCs have been quite popular with COTS NAS vendors over the last year (with Seagate's NAS Pro lineup as well as the Synology DSx15(+) series utilizing them). The 2-bay DL2100 is based on the 2C/2T Atom C2350 running at 1.7 GHz and sports 1 GB of RAM. The 4-bay DL4100 is based on the Atom C2338 and has 2 GB of RAM. The clock speeds and features are similar for both SoCs, though the C2350 has a slightly lower TDP (6W vs. 7W). On the software front, the DL series comes with extensive Active Directory support, stressing its business focus.

The updated EX models and the new DL models round out Western Digital's offerings in this market segment. They now have units available for different needs and performance levels. The addition of Linux-based business NAS models helps in reducing costs for the small business market segment.

Western Digital has a number of features (both in hardware and the My Cloud OS) that make it stand out amongst the multitude of offerings from various vendors in this market space:

- Pre-installed OS / pre-configured NAS units, with OS on embedded flash: The pre-configuration is similar to Synology's Beyond Cloud series, but valid for all models in the EX and DL series. In addition, the OS itself is not replicated across all installed disks, but resides along with the settings in flash memory on the board. One downside is that system migration is not possible (RAID roaming is allowed, though), but the approach does have its advantages in terms of fast setup.

- Storage scalability using dual NICs: This is a unique feature, allowing units to be daisy chained using the network links. The volumes in the daisy-chained NAS are present / visible through the primary unit's interface. Backups / replication can be easily configured, even though it is not a true high-availability system. The daisy-chained units don't even need to be of the same model.

- Redundant power-supply support: This was one of the unique features in the WD EX2 and EX4 that we reviewed last year. It allows for the NAS to remain in operation even if one of the power adapters were to fail.

- Expandable memory for the prosumer series: The DL series comes with 1 GB and 2 GB of RAM for the 2-bay and 4-bay units respectively. However, end-users can opt for their own SO-DIMM modules to increase the memory in these units (up to 6 GB for the DL4100).

- Models with pre-configured disks come with the WD Red drives (6 TB variants included) - this provides consumers with a single point-of-contact for both the NAS unit and the storage media when it comes to support purposes.

The pricing for the various models / capacities is provided in the table below:

Western Digital My Cloud NAS Introductory MSRPs [ Q1 2015 ]

| Capacity | EX2100 | EX4100 | DL2100 | DL4100 |
|---|---|---|---|---|
| Diskless | $250 | $400 | $350 | $530 |
| 4 TB | $430 | - | $530 | - |
| 8 TB | $560 | $750 | $650 | $880 |
| 12 TB | $750 | - | $850 | - |
| 16 TB | - | $1050 | - | $1170 |
| 24 TB | - | $1450 | - | $1530 |
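One way to read this table is to subtract the diskless price from each populated configuration and divide by the capacity, giving a rough premium per terabyte for buying the drives with the unit. The sketch below does that arithmetic using only the MSRPs listed above; the dictionary layout and function name are illustrative, and the result says nothing about street prices for bare WD Red drives.

```python
# Introductory MSRPs in USD from the table above, keyed by capacity in TB
# (0 means the diskless configuration).
MSRP = {
    "EX2100": {0: 250, 4: 430, 8: 560, 12: 750},
    "EX4100": {0: 400, 8: 750, 16: 1050, 24: 1450},
    "DL2100": {0: 350, 4: 530, 8: 650, 12: 850},
    "DL4100": {0: 530, 8: 880, 16: 1170, 24: 1530},
}


def premium_per_tb(model: str, capacity_tb: int) -> float:
    """Price difference versus the diskless unit, divided by capacity in TB."""
    prices = MSRP[model]
    return (prices[capacity_tb] - prices[0]) / capacity_tb


for model, prices in MSRP.items():
    for tb in sorted(c for c in prices if c > 0):
        print(f"{model} {tb:>2} TB: ${premium_per_tb(model, tb):.2f} per TB over diskless")
```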

Similar to Seagate's NAS and NAS Pro offerings, the updated hardware platforms and the tying together of the NAS and the storage media will help Western Digital expand their already growing presence in this market segment. The existing channel presence will also provide an additional advantage. Performance evaluation of the EX4100 as well as the DL4100 and comparison with other models in this market segment will be available in the reviews slated to go out over the next few days.

Read More ...

Gigabyte 17.3” P37X Gaming Notebook Now in North America

Gigabyte has an interesting line of gaming notebooks these days, including their own brand of P-series laptops as well as the AORUS brand. We’re in the process of reviewing the P35X v3, which packs a GTX 980M into a 0.82” thick 15.6” chassis, and now Gigabyte sends word that they have officially launched the big brother P37X with a 17.3” chassis in the North American market. It’s actually slightly thicker than the P35X, and the design language is very similar as well. That’s either good or bad depending on what you’re looking for in a gaming notebook.

On the one hand it’s generally slimmer (0.9”) and lighter (6.17 lbs.) than competing notebooks from Alienware, ASUS, Clevo, and MSI; however, keeping things cool in a thinner chassis generally means either more noise from the fans, higher temperatures, or both. It’s also either a conservative and subdued looking design, or it’s boring – I tend to like less bling on my laptops, but others are happier with multi-colored keyboard backlighting and a more aggressive industrial design.

In terms of features, all the core elements are essentially the same as the 15.6” model, but the keyboard adds a column of six dedicated macro keys. The top key switches between five banks of macros, so all told that gives you access to 25 macro sets. Besides the GTX 980M GPU, the system also supports Core i7 processors (Haswell series still) and up to two 512GB mSATA drives in RAID 0, with two 2.5” drive bays available as well. As with most other 17.3” laptops, the display remains a 1080p panel – there just aren’t many other options yet, though we’ve heard 3K/4K may be coming later this year (hopefully?) for 17.3” panels. At least the display is anti-glare with wide viewing angles (IPS most likely, though AHVA is also a possibility).

Amazon and other retailers are carrying the Gigabyte P37X, and the base model comes with i7-4720HQ, GTX 980M 8GB, 8GB system RAM, and a 1TB HDD (no SSDs in the base model, though you can always add your own) for $1999. If you prefer a slightly upgraded build, the Gigabyte P37X-CF2 also has 8GB RAM and an i7-4720HQ, but it includes a 256GB mSATA SSD and a Blu-ray burner for $2499. So yeah, just buy the base model and pick up a pair of 512GB mSATA MX200 SSDs for $440 instead – and if you really want a Blu-ray burner, that can be arranged for the remaining $60. You’ll probably want to upgrade the RAM as well, as 8GB is a bit chintzy on a high-end gaming rig these days.

Despite the odd pricing on the “upgraded” build, it’s good to see additional gaming notebook options, and for those that prefer a more subdued aesthetic the Gigabyte line might be exactly what you’re after. We’ll have the full review of the P35X v3 in the next week or two, so stay tuned.

Read More ...

Samsung Announces 128GB UFS Storage For Smartphones

We first heard about plans to adopt UFS (Universal Flash Storage) with the announcements from Toshiba and Qualcomm reported over a year ago. While the promised late 2014 schedule seems to have been missed, and we still haven't seen any major product with the technology, it looks like UFS is finally gaining some traction, as today Samsung is announcing the mass production of in-house solutions based on the UFS 2.0 standard.

Samsung says it is providing the new embedded memory type in 32GB, 64GB and 128GB capacities. The 128GB model doubles the capacity of even their largest eMMC storage solution. It was only last week that Samsung released a new eMMC 5.1 based NAND line-up, which promised major gains over today's deployed eMMC products.

The UFS solution claims to achieve 19K IOPS (input/output operations per second) in reads, almost double the 11K IOPS their eMMC 5.1 solution is capable of, and 2.7 times what common embedded memory is capable of today. There is also a purported boost to sequential read and write performance to SSD levels, although Samsung doesn't provide any actual figures, so we'll have to wait until we review a device to see what the actual gains are. What should be very interesting is a promised 50% decrease in energy consumption. We're still not very sure of the impact of eMMC power on a smartphone's battery life, but scenarios such as video recording are use-cases where a decrease in NAND power could be very beneficial to battery life.
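To put the IOPS claims in perspective, the following sketch works through the ratios using only the figures quoted above. The conversion to throughput assumes 4 KiB random reads, which is a common benchmark transfer size but is not something Samsung states, so treat the MiB/s numbers as illustrative. All constant and function names are our own.

```python
UFS_READ_IOPS = 19_000       # Samsung's claimed figure for the UFS part
EMMC51_READ_IOPS = 11_000    # Samsung's claimed figure for its eMMC 5.1 part
CLAIMED_MULTIPLE = 2.7       # UFS vs. "common embedded memory", per Samsung
BLOCK_BYTES = 4 * 1024       # ASSUMPTION: 4 KiB transfers, not stated by Samsung


def iops_to_mib_per_s(iops: int, block_bytes: int = BLOCK_BYTES) -> float:
    """Convert an IOPS figure to MiB/s for a fixed transfer size."""
    return iops * block_bytes / (1024 * 1024)


ufs_vs_emmc51 = UFS_READ_IOPS / EMMC51_READ_IOPS
implied_common_iops = UFS_READ_IOPS / CLAIMED_MULTIPLE

print(f"UFS vs. eMMC 5.1: {ufs_vs_emmc51:.2f}x")
print(f"Implied 'common' embedded memory: about {implied_common_iops:.0f} IOPS")
print(f"UFS at 4 KiB: {iops_to_mib_per_s(UFS_READ_IOPS):.1f} MiB/s")
print(f"eMMC 5.1 at 4 KiB: {iops_to_mib_per_s(EMMC51_READ_IOPS):.1f} MiB/s")
```

The ratio against eMMC 5.1 works out to a bit over 1.7x, and the 2.7x claim implies a baseline of roughly 7K IOPS for the memory in devices shipping today.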

UFS is based on a serial interface as opposed to eMMC's parallel architecture, enabling Full-Duplex data transfer and achieving twice to four times the peak bandwidth (depending on implementation) over the existing eMMC 8-bit interface.

Samsung also offers the solution in an ePoP package, meaning the NAND IC is embedded with the RAM ICs in a PoP package on top of the SoC, a solution already employed in the Galaxy Alpha and Galaxy Note 4. The goal here is to save on precious PCB space in small form factors such as smartphones.

We're looking forward to seeing what kind of devices Samsung implements the technology in and how it affects their performance and responsiveness.

Source: Samsung Tomorrow

Read More ...

Motorola Announces Moto E (2015): Cortex-A53 + LTE For $149

Today Motorola has announced the launch and immediate availability of the 2015 version of the Moto E, the latest member of the company’s line of low-end smartphones.

The 2015 edition of the Moto E is a pretty hefty upgrade of a phone launched just 9 months ago. In terms of design the new Moto E is generally a bigger, more powerful version of its predecessor, retaining the same rounded plastic design while enlarging the overall body slightly to house the larger 4.5" screen. Meanwhile Motorola has iterated on the 2014’s swappable back covers, with the 2015 featuring the ability to swap in one of the company’s newer grip shells, or the phone’s colored bands can be swapped out separately.

Motorola's 2015 Low-End Smartphone Lineup

| | Motorola Moto E (2015) | Motorola Moto E (2014) | Motorola Moto G (2014) |
|---|---|---|---|
| SoC | Qualcomm Snapdragon 410 (MSM8916), 4x Cortex A53 @ 1.2GHz, Adreno 306 at 400MHz (LTE model XT1527); or Qualcomm Snapdragon 200 (MSM8x10), 4x Cortex A7 @ 1.2GHz, Adreno 302 at 400MHz (3G models) | Qualcomm Snapdragon 200 (MSM8x10), 2x Cortex A7 @ 1.2GHz, Adreno 302 at 400MHz | Qualcomm Snapdragon 400 (MSM8x26), 4x Cortex A7 @ 1.2 GHz, Adreno 305 at 450MHz |
| RAM/NAND | 1GB LPDDR3, 8GB NAND & MicroSD | 1GB LPDDR2, 4GB NAND & MicroSD | 1GB LPDDR3, 8/16GB NAND & MicroSD |
| Display | 4.5" 960x540 LCD | 4.3" 960x540 LCD | 5" 1280x720 IPS LCD |
| Dimensions | 129.9 x 66.8 x 12.3mm, 145g | 124.38 x 64.8 x 12.3mm, 142g | 141.5 x 70.7 x 11.0 mm, 149g |
| Camera | 5MP (2592 x 1944) Rear Facing w/Auto Focus, F/2.2 aperture; VGA (640x480) Front Facing | 5MP (2592 x 1944) Rear Facing w/Fixed Focus | 8MP (2592 x 1944) Rear Facing; 2MP (1280x720) Front Facing |
| Battery | 2390 mAh (9.08 Whr) | 1980 mAh (7.52 Whr) | 2070 mAh (7.87 Whr) |
| OS | Android 5.0 | Android 4.4.2 | Android 4.4.4 |
| Connectivity | 802.11 b/g/n + BT 4.0, USB2.0, GPS/GNSS | 802.11 b/g/n + BT 4.0, USB2.0, GPS/GNSS | 802.11 b/g/n + BT 4.0, USB2.0, GPS/GNSS |
| SIM Size | Micro-SIM | Micro-SIM (Dual SIM SKU) | Micro-SIM |

The phone is being released in several versions. The first is the LTE model, whose LTE frequency bands primarily target the US market. There are also a pair of 3G versions, with one again targeted at the US and the other more globally, the big difference being the US 3G version's support of the 1700MHz AWS frequency band for HSPA+.

Motorola Moto E (2015) Model Breakdown

| Region | GSM | UMTS/HSPA+ | LTE |
|---|---|---|---|
| 4G LTE - US GSM (XT1527) | 850, 900, 1800, 1900 | 850, 1700, 1900 | 2, 4, 5, 7, 12, 17 |
| US GSM (XT1511) | 850, 900, 1800, 1900 | 850, 1700, 1900 | - |
| Global GSM (XT1505) | 850, 900, 1800, 1900 | 850, 900, 1900, 2100 | - |

Priced at $149, the LTE version features a Qualcomm Snapdragon 410 processor, which supplies both the quad-core Cortex-A53 CPU and the Category 4 LTE modem. The 3G versions will be launching later at $119 – the biggest difference here is the use of a Snapdragon 200 series SoC with a quad-core Cortex-A7 CPU instead of the newer A53 Snapdragon 410. Having received the LTE version from Motorola, for our purposes we’ll be focusing on the LTE version.

Likely the single biggest draw for the 2015 Moto E over the 2014 is the inclusion of LTE support, which is a first for a low-end Motorola phone, and in fact is something even the higher-tier 2014 Moto G did not include. Driven by the 9x25 modem integrated into the Snapdragon 410, this gives the Moto E Cat 4 LTE capabilities along the most common North American bands.

Meanwhile users of either version will also quickly notice the larger screen, which sees a slight bump to 4.5”, up from 4.3” in the 2014 version. Though the screen is larger, the resolution remains entry-level at qHD (960x540), so pixel density has decreased somewhat compared to the 2014 version. Helping to drive this larger display and to take advantage of the larger phone body is a 2390mAh battery, 410mAh more than in last year's model. Even accounting for the larger screen, battery life should be improved over the 2014 version – particularly stand-by time – however we’ll have to give the phone a complete rundown to see what the real-world gains are.
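For a sense of scale, the display and battery figures can be checked with a little arithmetic. The sketch below computes pixel density from resolution and diagonal size, and converts battery capacity to watt-hours. The 3.8 V nominal cell voltage is an assumption; it is not stated by Motorola, but it reproduces the Whr figures in the spec table. Function names are illustrative.

```python
import math


def pixels_per_inch(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Pixel density: diagonal resolution in pixels over diagonal size in inches."""
    return math.hypot(width_px, height_px) / diagonal_in


def watt_hours(capacity_mah: float, nominal_v: float = 3.8) -> float:
    """Battery energy in Wh. ASSUMPTION: 3.8 V nominal cell voltage."""
    return capacity_mah / 1000 * nominal_v


ppi_2014 = pixels_per_inch(960, 540, 4.3)
ppi_2015 = pixels_per_inch(960, 540, 4.5)
print(f"Moto E (2014): {ppi_2014:.0f} ppi, {watt_hours(1980):.2f} Wh")
print(f"Moto E (2015): {ppi_2015:.0f} ppi, {watt_hours(2390):.2f} Wh")
print(f"Density change: {100 * (ppi_2015 / ppi_2014 - 1):.1f}%")
```

With the same qHD resolution stretched over a slightly larger panel, density drops from roughly 256 ppi to roughly 245 ppi, a change of a little over 4%.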

Next to the screen and new to this year’s version is a front-facing VGA (640x480, 0.3MP) camera. The 2014 model skipped out on a camera entirely for cost reasons, and while this camera is of limited use, it should be reasonable enough for selfies and video chat on an entry level phone. Meanwhile the rear facing camera is still 5MP, however it’s now capable of auto focus versus last year’s fixed focus camera. As for video recording, this camera is used to record at 720p30, a significant step up from the 2014’s FWVGA (854x480) recording capabilities.

Storage has also seen a bump up, going from 4GB on-board to 8GB on-board, and users still looking for more can add more storage via microSD. The accompanying RAM on the other hand remains at 1GB, though it’s now LPDDR3 as opposed to LPDDR2.

Finally, the phone is shipping with Android 5.0 Lollipop, making it the first Motorola-branded phone to ship with Android 5.0 out of the factory and joining Motorola’s other phones which recently received the OS as an update. Motorola doesn’t specify whether they’re using a 32-bit or 64-bit version of the OS, however a quick check of the phone finds that it's running the 32-bit version of Android. Given that the 2015 Moto E is available with both Cortex-A53 and Cortex-A7 based SoCs, it makes sense that the company is sticking to 32-bit throughout.

We will be putting the new Moto E through its paces in the coming weeks, but so far it looks like a solid update to the Moto E lineup. At $149 for the LTE it does end up debuting at $20 more expensive than the previous version in what’s a very price sensitive market, so it will be interesting to see how consumers respond to the higher price. But LTE tends to be a big draw.

Shipping today, US customers can order the LTE version of the phone from Motorola’s website. Meanwhile international customers can look forward to Motorola rolling out the phone to more than 50 countries in the Americas, Europe, and Asia.

Read More ...

Lenovo Vows to Drop "Adware" and "Bloatware" From Its PCs

Pledge comes after Lenovo root certificate to spam firm triggered serious security panic

Read More ...

Windows 10 Adds USB 3.1 for Dual-Role Peripherals, External Display Support

Technology will support inter-peripheral interaction and display-over-USB

Read More ...

FCC Bans Data Discrimination, Defies Comcast, Adopting Net Neutrality Regulation

Vote was 3-2 along party lines; final draft eliminates the "fast lane" concept Wheeler suggested in the early drafts

Read More ...

Finished Apple Watch Expected to be Showcased at "Spring Forward" Mar. 9 Event

Apple may also add Broadwell chip options to the MacBook Pro and Air lines, but the star of the show is expected to be the Apple Watch

Read More ...

Smartphone STD Scanner Dongle Can Detect HIV in Just 15 Minutes

Researchers from Columbia University have created a new mobile HIV and syphilis scanner that can be connected to smartphones

Read More ...

Google Steps up Snub of Adobe Flash, Auto-Converting Flash Ads to HTML5

Flash is fast going the way of the dinosaur both in the mobile space and in the PC internet market

Read More ...

Quick Note: Apple Opens Up iOS 8.3 Beta 2 to Developers

Apple iOS 8.3 beta 2 now available to developers

Read More ...

Cash Grab or Life Saver? NYC Speeding Ticket Cameras Scrutinized in New Report

Public radio station finds that while ticket rates have drastically risen, there have been some small statistical traffic safety gains

Read More ...

Stardock Unveils Start10 Start Menu Replacement for Windows 10

Reskin of Start Menu embraces some, but not all of Microsoft's design directions

Read More ...

