Samsung Galaxy S 5 Review

Samsung is now the undisputed king of the Android smartphone space. It was only a few years ago that the general public referred to every Android phone as a “Droid”. Now, it’s not uncommon for people to refer to every Android device as a “Galaxy”, which speaks to the level of market penetration that Samsung has achieved with their Galaxy line-up. The Galaxy S series has been a sales hit, and as we said in the initial impressions piece, the average consumer lives and dies by what’s familiar. Samsung continues to iterate on their Galaxy S line with consistent improvement and little, if any, regression from generation to generation. This is where Samsung dominates, as the Galaxy S5 is a clear evolution of the Galaxy S3 and S4, but made more mature. To find out more, read on for the full review.

Read More ...

Samsung Releases Standard, EVO and PRO SD Cards

With the rapid decrease of NAND prices in the last few years, the SD card market has more or less become a commodity with very little differentiation. We don't typically note minor product releases but we'll make an exception here given that this one is a bit more significant than the average.

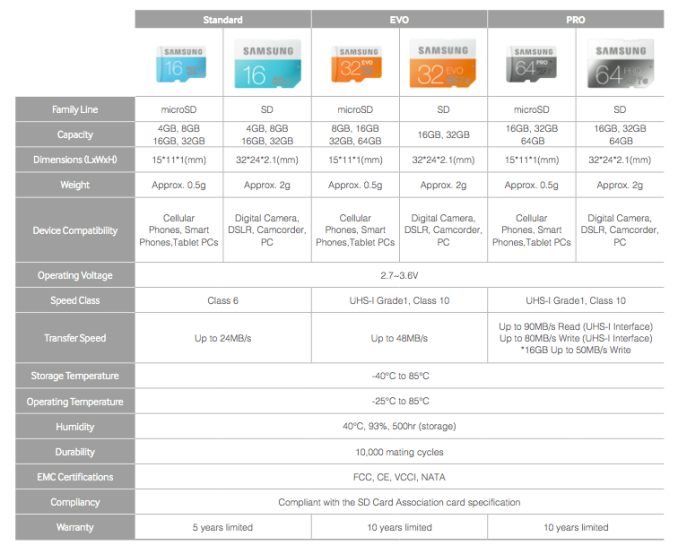

Samsung is revamping their whole SD card lineup at once by simplifying it into three product series: Standard, EVO and PRO. Those who are familiar with Samsung's SSDs will notice that the EVO and PRO brands are adopted from the 840 SSD series, which makes sense given Samsung's success in the SSD space and the brand they've been able to build with the 840 EVO and Pro.

Samsung's goal with the branding is to make it as easy as possible for the customer to select an SD card that fits their needs, because SD cards are often marketed using the class system (like class 10), which may not mean much to the average SD card shopper. Electronics stores tend to have aisles of SD cards available and differentiating in that space is extremely hard, so Samsung hopes that its simple branding will help to boost sales. Each brand also has a unique color (turquoise for Standard, orange for EVO and black for PRO) that aims to simplify branding even further and to attract customers (we all like vibrant colors, don't we?).

All models are available in both microSD and SD form factors, although available capacities vary as the table above shows. Retail packages with SD and USB adapters are also available.

As one would expect, the PRO is the fastest offering available and provides a rather impressive transfer rate of up to 90MB/s. Most SD card use cases don't require throughput that high, but as 4K video is steadily making its way into the hands of consumers, the storage media has to evolve as well. Some DSLRs can also be picky about SD card performance, and professionals in particular like to have the fastest card available to make sure the SD card isn't bottlenecking the performance of the DSLR.

At the low level these are all MLC NAND designs, but with varying quality of NAND being used. In fact only a small portion of the NAND meets the requirements for SSDs; the rest of the NAND is used in products where endurance and performance aren't as critical (such as USB drives, SD cards, eMMC...).

All in all, the SD card market as a whole is quite uninteresting. When you can get a decent SD card for a tenner or two, it's logical that not much time is spent on the purchase decision or research. Simple branding can help with that and the high-end niche for the PRO model does exist, but I still believe that the average buyer will just get the cheapest card available or the one that is recommended by the sales rep.

Read More ...

NVIDIA GeForce Experience 2.0: Remote GameStream and Notebook Support

Coinciding with today’s launch of the R337 driver, NVIDIA has also updated their GeForce Experience (GFE) software to version 2.0. The updated beta drivers are available for notebooks as well as desktops, but as discussed in our 337.50 article, the actual benefit for single GPUs is limited to specific games. As for GFE 2.0, we’ve discussed many of the updated items in our recent NVIDIA 800M overview, but with the R337 drivers and GFE 2.0 the features become active for all users. The key updates are mostly focused on notebooks, with a few exceptions, but let’s quickly recap.

First, the new GTX 800M cards feature a technology called Battery Boost. I’ve been testing this with a GTX 880M notebook, and while the results vary with the game and settings you choose to run, the short summary is that you can realize gains of 25% to as much as 100% in battery life. A major component in Battery Boost is frame rate targeting, with an adjustable slider going from 20FPS to 50FPS, but NVIDIA is keen to point out that there’s more going on than simple frame rate limits. We’ll have some initial results in a review this week, and we’re working on more extensive analysis of the technology; it does work, however, so if you’re the type of gamer that wants to be able to play games while unplugged, Battery Boost will at least get you well past the one hour mark, even on a beefy GTX 880M notebook.

Two more additions to GFE 2.0 are support for GameStream and ShadowPlay on all GTX 800M laptops, as well as GTX 700M and select GTX 600M models. Basically, if you have a Kepler-based GTX notebook GPU, GameStream and ShadowPlay are now available. With many people now moving almost exclusively to notebooks, it makes sense that NVIDIA would extend these features to additional users. The idea of streaming games from one mobile device to another might at first seem odd, but even with Battery Boost, notebook graphics hardware isn’t at the point where you can play games for hours at a time without an AC adapter, whereas SHIELD can do that and more with GameStream. You’ll need to turn down a few settings in some titles to achieve smooth frame rates, depending on your laptop GPU, but notebooks are now at the point where 30+ FPS at high detail settings is generally achievable.

ShadowPlay meanwhile offers the ability to capture at the click of a button the previous chunk of gameplay. The performance hit is negligible, so if you want to share your best gaming moments with friends it can be very convenient. There’s a new feature being added to ShadowPlay as well – for desktop GPUs only right now, though we’ll likely see support for mobile GPUs as well in the future – Full Desktop Capture. This allows users to capture windowed gaming sessions, but it also extends to simply capturing your desktop content even if you’re not running a game. There’s a certain segment of gamers (and games) where playing in a window with the ability to switch to other windows is desired, with MMOs being the most common, so expect to see more “How to…” gaming videos cropping up in the future thanks to ShadowPlay. ShadowPlay is also receiving updates to the encoding settings, allowing the use of custom resolutions, bitrates, framerates, and more.

Finally, GameStream has a new beta feature launching with the new drivers and GFE: Remote GameStream. The idea is to take the concepts from GameStream and the GRID Streaming Beta and merge them to allow users to use GameStream while away from home. The GRID Streaming Beta incidentally was a cool idea that used a custom GPU farm run by NVIDIA to render games and stream them to SHIELD devices, but the quality of the games was somewhat limited (i.e. NVIDIA didn’t want to let everyone run each game at “max” settings). With Remote GameStream, since you’re using your own hardware, you can tune the settings to fully utilize your GPU, potentially allowing 1080p maximum detail gaming on your SHIELD. We haven’t had a chance to test this out yet, and the bandwidth requirement of 5Mbps upstream (from the host system) and 5Mbps downstream (to the SHIELD device) may limit the situations in which Remote GameStream is usable, which is why this is a beta release. Over time, we may see NVIDIA tune the performance to work better with lower bandwidths. Note that Remote GameStream will also require SHIELD Software Update 72, which includes Android "KitKat" 4.4.2 as well as other changes.

And that takes care of the GFE 2.0 update. NVIDIA continues to add titles to the GFE supported list, and they’re now up to more than 150 games (from the initial 80 games GFE launched with). For supported games, with any modern NVIDIA GPU (Kepler or Fermi I believe being the requirement), you can let GFE apply “smart” settings to optimize quality and performance so that you end up with a good gaming experience. Beyond simply helping users tune their quality settings, GFE has become a useful tool for receiving driver updates, recording gaming sessions, streaming games to a SHIELD device, and now helping to improve battery life while gaming on select notebooks. I didn’t think much of GFE when it first launched, but it’s come a long way in only half a year, and the 2.0 release marks a significant milestone for NVIDIA with plenty of new additions still in the works. For those that are interested, the full slide deck from NVIDIA is in the gallery below.

Read More ...

NVIDIA Releases 337.50 Beta Driver, Touts Significant Performance Improvements

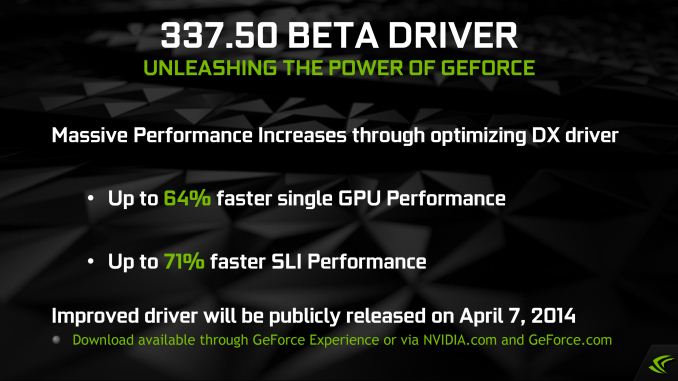

Kicking off what will undoubtedly be a busy week in the world of video cards, NVIDIA has started the week with a new GeForce driver release over on their GeForce website. This latest driver, version 337.50, is the first public release from the new R337 driver branch, and comes roughly two months after the first R334 driver.

In a change of pace reflecting the importance of this driver, NVIDIA will be rolling out the red carpet for R337. While every major driver branch includes its share of bug fixes and performance improvements, NVIDIA is promoting R337 as one of those rare and exceptional performance drivers that we always like to get, one that will significantly improve performance across a range of games. With that fanfare in mind, NVIDIA has briefed the press on these drivers ahead of time and given us a few days to play with them to see just what kind of performance improvements they bring.

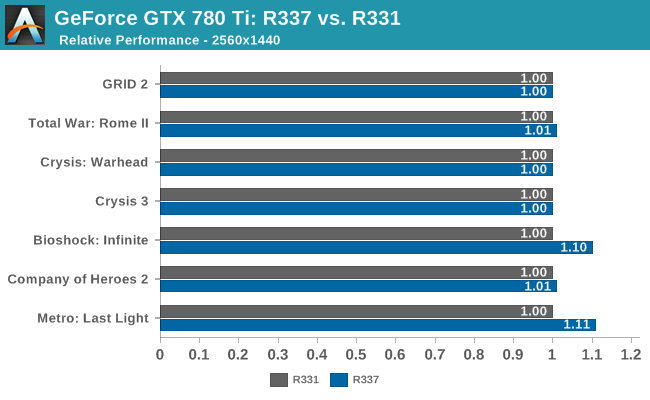

To put this to the test, we’ve updated our benchmarks for our GeForce GTX 780 Ti and plotted those results against the most recent set of comprehensive results we had for that card, which is last year’s R331 driver release.

To no great surprise the actual performance gains are very game specific. We’re seeing anywhere between break-even performance on games like GRID 2 and Crysis 3, to 10%+ gains in games like Metro: Last Light and Bioshock: Infinite. Even in cases where NVIDIA makes sweeping changes to the underpinnings of their drivers, any potential performance improvement is going to hinge on where the bottlenecks are and which games are using the bottlenecked function.

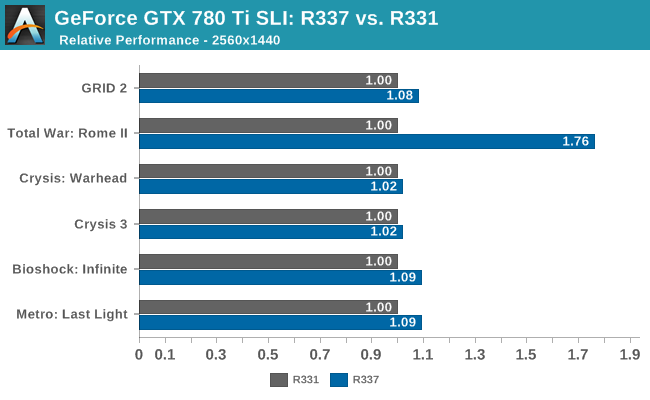

NVIDIA has also put a similar degree of effort, if not more, into improving SLI performance.

Unlike our single-GPU results, every SLI-compatible game has seen at least a slight performance increase. This ranges from 2% in Crysis 3 and 9% in Metro, up to 76% for Total War: Rome II. In the case of Rome II NVIDIA’s previous drivers were incapable of getting good multi-GPU scaling out of the game, and with R337 they have apparently finally surmounted Rome’s AFR-unfriendly nature. However it should be noted that for the moment Rome II is suffering from (or causing) graphical glitches on both NVIDIA and AMD multi-GPU setups, so both vendors still have some work to do when it comes to Rome II.

NVIDIA hasn’t released a complete list of games they’re expecting performance improvements in, but from our results and from their press deck it looks like the gains from these drivers are very hit & miss. In our experience the gains from R337 will depend on the game and quite often the settings and features used within that game, and ultimately whether those combinations leave you CPU limited or GPU limited. Otherwise, for better or worse, some of NVIDIA’s targets for optimization have included relatively popular (but old) benchmark games such as Alien vs. Predator and Sniper Elite V2. That practice is hardly unusual, but it means these performance gains may not translate over to less popular games or games that don’t benchmark well.

Meanwhile for the moment NVIDIA is being rather coy on what they’ve done under the hood to squeeze these performance improvements out of their now well-developed Kepler GPUs, saying that they’re going to wait until these drivers are out of beta to talk about the details. In other words, it looks like it will be a few more weeks until we get a chance to see just what NVIDIA has been up to in the last couple of months.

What isn’t under question however is NVIDIA’s motivation, which is the favorable press and the performance gains AMD has picked up as a result of their fledgling Mantle program. As we saw a couple of weeks ago, low-level programming is going to be making a significant resurgence in the PC gaming space through Microsoft’s forthcoming DirectX 12, with Mantle in turn serving as a vanguard of sorts for the concept. In the interim with DirectX 12 development targeting Holiday 2015 games (a preview build is expected this year) NVIDIA needs to make sure they remain competitive with AMD regardless of the technical differences.

The end result has been that NVIDIA has been focusing on Mantle-enabled games for their driver performance improvements, doing what they can to improve performance within the confines of Direct3D 11. This has included Battlefield 4 and Thief so far, and we wouldn’t be surprised if NVIDIA undertook a similar effort for any future high-profile Mantle games. All things considered NVIDIA has an overall hardware performance advantage at the high end, but AMD’s Hawaii products are fast enough that NVIDIA needs to counter Mantle-derived performance improvements if they wish to stay on top, giving NVIDIA a very good reason to keep an eye on AMD and Mantle.

Finally, coinciding with today’s launch of the 337.50 drivers, NVIDIA will also be launching GeForce Experience 2.0. We’ll be looking at that in a separate article, but GeForce Experience 2.0 will bring with it additional features for GeForce desktop and mobile products, including ShadowPlay feature enhancements and official support for ShadowPlay and Battery Boost on mobile products. Meanwhile GeForce Experience 2.0 will also be enabling Gamestream support over the Internet for NVIDIA’s SHIELD handheld, finally allowing users to access home and centralized (GRID) remote gaming over both local networks and the larger Internet.

Read More ...

AMD Launches FirePro W9100

Kicking off this week is the 2014 NAB Show, the National Association of Broadcasters’ annual trade show for broadcast content and technology. The NAB Show is often a launch point for new video products and this year is no exception, with AMD using the show to launch their new FirePro W9100.

First announced last month, the FirePro W9100 is AMD’s new flagship FirePro video card. Based on AMD’s Hawaii GPU, the FirePro W9100 is a fairly straightforward update to AMD’s FirePro lineup, bringing with it the various GCN 1.1 feature upgrades along with Hawaii’s stronger overall performance and greatly improved double precision (FP64) performance.

AMD FirePro W Series Specification Comparison

| | AMD FirePro W9100 | AMD FirePro W9000 | AMD FirePro W8000 | AMD FirePro W7000 |
|---|---|---|---|---|
| Stream Processors | 2816 | 2048 | 1792 | 1280 |
| Texture Units | 176 | 128 | 112 | 80 |
| ROPs | 64 | 32 | 32 | 32 |
| Core Clock | 930MHz | 975MHz | 900MHz | 950MHz |
| Memory Clock | 5GHz GDDR5 | 5.5GHz GDDR5 | 5.5GHz GDDR5 | 4.8GHz GDDR5 |
| Memory Bus Width | 512-bit | 384-bit | 256-bit | 256-bit |
| VRAM | 16GB | 6GB | 4GB | 4GB |
| Double Precision | 1/2 | 1/4 | 1/4 | 1/16 |
| Transistor Count | 6.2B | 4.31B | 4.31B | 2.8B |
| TDP | 275W | 274W | 189W | <150W |
| Manufacturing Process | TSMC 28nm | TSMC 28nm | TSMC 28nm | TSMC 28nm |
| Architecture | GCN 1.1 | GCN 1.0 | GCN 1.0 | GCN 1.0 |
| Warranty | 3-Year | 3-Year | 3-Year | 3-Year |
| Launch Price | $3999 | $3999 | $1599 | $899 |

From a raw specification standpoint AMD is going to be pushing memory bandwidth and memory capacity, and for good reason. The W9100 is outfitted with 16GB of memory and will be utilizing Hawaii’s full 512-bit memory bus, giving it more RAM than any prior workstation card and 320GB/sec of memory bandwidth with which to access that RAM. Meanwhile on a technical note, from the product details we’ve seen it looks like AMD is using 8Gb GDDR5 memory chips, which would mean the W9100 will be in a 16x8Gb memory configuration.
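As a quick sanity check on those figures, the arithmetic can be sketched in a few lines of Python. This is only an illustration: the function names are made up for this example, and it assumes the "5GHz GDDR5" memory clock is an effective per-pin data rate of 5Gbps.

```python
def memory_bandwidth_gb_per_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s: bus width (bits) x per-pin data rate (Gbps) / 8 bits per byte."""
    return bus_width_bits * data_rate_gbps / 8


def total_capacity_gb(chip_count: int, chip_density_gbit: int) -> float:
    """Total capacity in GB for a number of memory chips of a given density in gigabits."""
    return chip_count * chip_density_gbit / 8


# W9100 figures from the article: 512-bit bus, 5GHz (effective) GDDR5, 16 chips of 8Gb each.
w9100_bandwidth = memory_bandwidth_gb_per_s(512, 5.0)
w9100_capacity = total_capacity_gb(16, 8)

print(f"W9100 peak memory bandwidth: {w9100_bandwidth:.0f} GB/s")
print(f"W9100 memory capacity: {w9100_capacity:.0f} GB")
```

Both results line up with the 320GB/sec and 16GB quoted above.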

Also confirmed with today’s launch is W9100’s double precision floating point performance. We had earlier speculated based on AMD’s generalized double precision performance numbers that an unrestricted Hawaii GPU was capable of ½ speed double precision performance, and AMD has since confirmed that. This puts W9100 at 5.24 TFLOPS of single precision performance and 2.62 TFLOPS of double precision performance, making it the first workstation card to offer more than 2 TFLOPS of double precision performance.
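The throughput numbers follow directly from the shader count and clock speed. The sketch below reproduces them under one stated assumption that is not spelled out in the article: each stream processor retires one fused multiply-add (counted as 2 floating point operations) per clock. The function name is hypothetical.

```python
def peak_tflops(stream_processors: int, core_clock_mhz: float, fp64_ratio: float = 1.0) -> float:
    """Theoretical peak TFLOPS, assuming 2 FLOPs (one FMA) per stream processor per clock."""
    flops_per_clock = stream_processors * 2
    return flops_per_clock * core_clock_mhz * 1e6 * fp64_ratio / 1e12


# W9100: 2816 stream processors at 930MHz, double precision at 1/2 rate.
w9100_fp32 = peak_tflops(2816, 930)
w9100_fp64 = peak_tflops(2816, 930, fp64_ratio=0.5)

print(f"W9100 single precision: {w9100_fp32:.2f} TFLOPS")
print(f"W9100 double precision: {w9100_fp64:.2f} TFLOPS")
```

Rounded to two decimal places these match the 5.24 and 2.62 TFLOPS figures above.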

Meanwhile as for pricing, AMD has set the MSRP on the W9100 at $3999. Unsurprisingly this is the same price as the W9000 when it launched roughly a year and a half ago, making the W9100 the W9000's replacement in every sense of the word. This also happens to continue the trend of AMD significantly undercutting NVIDIA’s workstation card prices, with the W9100 coming in roughly $1000 below the price of the Quadro K6000.

Wrapping things up, as we mentioned back in our initial look at AMD’s announcement, expect to see AMD heavily push the W9100 on the basis of its memory and compute performance alongside its graphics performance. While traditional graphics-heavy professional applications (e.g. AutoCAD) are still as power hungry as ever, the amount of compute work being generated by these programs is increasing. This goes for both programs using compute in a more straightforward way, and programs leveraging compute for graphics related tasks such as video encoding and image processing. For both of these tasks AMD is banking on their 16GB of VRAM giving them a performance advantage due to the larger working sets such a large memory configuration can hold, in turn allowing them to better utilize the full compute capabilities of the Hawaii GPU. On that note, AMD OpenCL users will be happy to hear that AMD has set a release window of Q4 for their OpenCL 2.0 driver for FirePro, a long-awaited release that among other things will introduce dynamic parallelism into OpenCL.

Read More ...

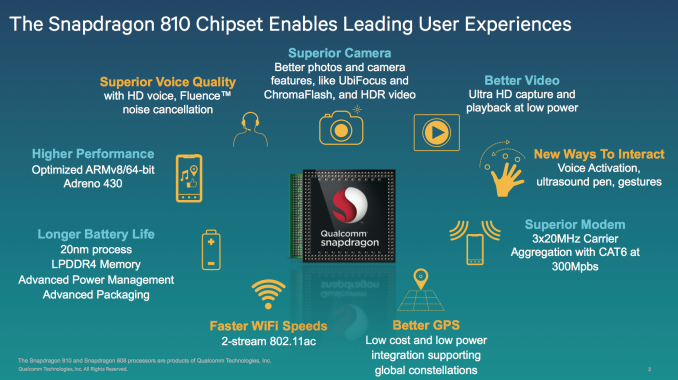

Qualcomm's Snapdragon 808/810: 20nm High-End 64-bit SoCs with LTE Category 6/7 Support in 2015

Today Qualcomm is rounding out its 64-bit family with the Snapdragon 808 and 810. Like the previous 64-bit announcements (Snapdragon 410, 610 and 615), the 808 and 810 leverage ARM's own CPU IP in lieu of a Qualcomm designed microarchitecture. We'll finally hear about Qualcomm's own custom 64-bit architecture later this year, but it's clear that all 64-bit Snapdragon SoCs shipping in 2014 (and early 2015) will use ARM CPU IP.

While the 410, 610 and 615 all use ARM Cortex A53 cores (simply varying the number of cores and operating frequency), the 808 and 810 move to a big.LITTLE design with a combination of Cortex A53s and Cortex A57s. The latter is an evolution of the Cortex A15, offering anywhere from a 25 - 55% increase in IPC over the A15. The substantial increase in performance comes at around a 20% increase in power consumption at 28nm. Thankfully both the Snapdragon 808 and 810 will be built at 20nm, which should help offset some of the power increase.

Qualcomm's 64-bit Lineup

| | Snapdragon 810 | Snapdragon 808 | Snapdragon 615 | Snapdragon 610 | Snapdragon 410 |
|---|---|---|---|---|---|
| Internal Model Number | MSM8994 | MSM8992 | MSM8939 | MSM8936 | MSM8916 |
| Manufacturing Process | 20nm | 20nm | 28nm LP | 28nm LP | 28nm LP |
| CPU | 4 x ARM Cortex A57 + 4 x ARM Cortex A53 (big.LITTLE) | 2 x ARM Cortex A57 + 4 x ARM Cortex A53 (big.LITTLE) | 8 x ARM Cortex A53 | 4 x ARM Cortex A53 | 4 x ARM Cortex A53 |
| ISA | 32/64-bit ARMv8-A | 32/64-bit ARMv8-A | 32/64-bit ARMv8-A | 32/64-bit ARMv8-A | 32/64-bit ARMv8-A |
| GPU | Adreno 430 | Adreno 418 | Adreno 405 | Adreno 405 | Adreno 306 |
| H.265 Decode | Yes | Yes | Yes | Yes | No |
| H.265 Encode | Yes | No | No | No | No |
| Memory Interface | 2 x 32-bit LPDDR4-1600 | 2 x 32-bit LPDDR3-933 | 2 x 32-bit LPDDR3-800 | 2 x 32-bit LPDDR3-800 | 2 x 32-bit LPDDR2/3-533 |
| Integrated Modem | 9x35 core, LTE Category 6/7, DC-HSPA+, DS-DA | 9x35 core, LTE Category 6/7, DC-HSPA+, DS-DA | 9x25 core, LTE Category 4, DC-HSPA+, DS-DA | 9x25 core, LTE Category 4, DC-HSPA+, DS-DA | 9x25 core, LTE Category 4, DC-HSPA+, DS-DA |
| Integrated WiFi | - | - | Qualcomm VIVE 802.11ac 1-stream | Qualcomm VIVE 802.11ac 1-stream | Qualcomm VIVE 802.11ac 1-stream |
| eMMC Interface | 5.0 | 5.0 | 4.5 | 4.5 | 4.5 |
| Camera ISP | 14-bit dual-ISP | 12-bit dual-ISP | ? | ? | ? |
| Shipping in Devices | 1H 2015 | 1H 2015 | Q4 2014 | Q4 2014 | Q3 2014 |

The CPU is only one piece of the puzzle as the rest of the parts of these SoCs get upgraded as well. The Snapdragon 808 will use an Adreno 418 GPU, while the 810 gets an Adreno 430. I have no idea what either of those actually means in terms of architecture unfortunately (Qualcomm remains the sole tier 1 SoC vendor to refuse to publicly disclose meaningful architectural details about its GPUs). In terms of graphics performance, the Adreno 418 is apparently 20% faster than the Adreno 330, and the Adreno 430 is 30% faster than the Adreno 420 (100% faster in GPGPU performance). Note that the Adreno 420 itself is something like 40% faster than Adreno 330, which would make Adreno 430 over 80% faster than the Adreno 330 we have in Snapdragon 800/801 today.
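Since those GPU claims are chained percentages, it helps to multiply them out. The short Python sketch below does only that; it uses the approximate figures quoted above and takes the Adreno 330 as the 1.0x baseline (the variable names are just for illustration).

```python
# Relative graphics performance, with Adreno 330 as the 1.0x baseline.
adreno_330 = 1.0
adreno_418 = adreno_330 * 1.20   # "20% faster than the Adreno 330"
adreno_420 = adreno_330 * 1.40   # "something like 40% faster than Adreno 330"
adreno_430 = adreno_420 * 1.30   # "30% faster than the Adreno 420"

gain_over_330 = (adreno_430 / adreno_330 - 1) * 100
print(f"Adreno 430 vs Adreno 330: about {gain_over_330:.0f}% faster")
print(f"Adreno 430 vs Adreno 418: about {(adreno_430 / adreno_418 - 1) * 100:.0f}% faster")
```

The percentages compound rather than add, which is why 40% and 30% work out to more than 80% rather than 70%.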

Also on the video side: both SoCs boast dedicated HEVC/H.265 decode hardware. Only the Snapdragon 810 has a hardware HEVC encoder however. The 810 can support up to two 4Kx2K displays (1 x 60Hz + 1 x 30Hz), while the 808 supports a maximum primary display resolution of 2560 x 1600.

The 808/810 also feature upgraded ISPs, although once again details are limited. The 810 gets an upgraded 14-bit dual-ISP design, while the 808 (and below?) still use a 12-bit ISP. Qualcomm claims up to 1.2GPixels/s of throughput, putting ISP clock at 600MHz and offering a 20% increase in ISP throughput compared to the Snapdragon 805.

The Snapdragon 808 features a 64-bit wide LPDDR3-933 interface (1866MHz data rate, 15GB/s memory bandwidth). The 810 on the other hand features a 64-bit wide LPDDR4-1600 interface (3200MHz data rate, 25.6GB/s memory bandwidth). The difference in memory interface prevents the 808 and 810 from being pin-compatible. Despite the similarities otherwise, the 808 and 810 are two distinct pieces of silicon - the 808 isn't a harvested 810.
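The bandwidth figures can be reproduced from the interface width and memory clock. A minimal sketch, assuming the number in the LPDDR name is the I/O clock in MHz and that data is transferred twice per clock (which is how the 1866MHz and 3200MHz data rates above are derived); the function name is hypothetical.

```python
def lpddr_bandwidth_gb_per_s(io_clock_mhz: float, bus_width_bits: int) -> float:
    """Peak bandwidth in GB/s for a double data rate interface."""
    data_rate_mtps = io_clock_mhz * 2               # two transfers per clock
    bytes_per_transfer = bus_width_bits / 8
    return data_rate_mtps * bytes_per_transfer / 1000


snapdragon_808 = lpddr_bandwidth_gb_per_s(933, 64)    # 64-bit LPDDR3-933
snapdragon_810 = lpddr_bandwidth_gb_per_s(1600, 64)   # 64-bit LPDDR4-1600

print(f"Snapdragon 808: {snapdragon_808:.1f} GB/s")
print(f"Snapdragon 810: {snapdragon_810:.1f} GB/s")
```

The 808 comes out just under 15GB/s and the 810 at 25.6GB/s, roughly a 70% increase in peak memory bandwidth.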

Both SoCs have a MDM9x35 derived LTE Category 6/7 modem. The SoCs feature essentially the same modem core as a 9x35 discrete modem, but with one exception: Qualcomm enabled support for 3 carrier aggregation LTE (up from 2). The discrete 9x35 modem implementation can aggregate up to two 20MHz LTE carriers in order to reach Cat 6 LTE's 300Mbps peak download rate. The 808/810, on the other hand, can combine up to three 20MHz LTE carriers (although you'll likely see 3x CA used with narrower channels, e.g. 20MHz + 5MHz + 5MHz or 20MHz + 10MHz + 10MHz).
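To see why 3x CA is useful even at the same LTE category, here is a rough Python sketch. It rests on two simplifying assumptions that are not from Qualcomm: peak downlink rate scales linearly with aggregated spectrum (7.5Mbps per MHz, derived from the 300Mbps over 2 x 20MHz figure above), and the total is capped at the Cat 6 peak. Real-world rates depend on many other factors.

```python
CAT6_PEAK_MBPS = 300.0
MBPS_PER_MHZ = CAT6_PEAK_MBPS / (2 * 20)   # 7.5 Mbps per MHz, from 2 x 20MHz = 300Mbps


def aggregated_peak_mbps(carriers_mhz: list[float], cap_mbps: float = CAT6_PEAK_MBPS) -> float:
    """Approximate peak downlink rate for a set of aggregated carriers, capped at the category limit."""
    return min(sum(carriers_mhz) * MBPS_PER_MHZ, cap_mbps)


two_carrier = aggregated_peak_mbps([20, 20])         # discrete 9x35 style 2x CA
three_narrow = aggregated_peak_mbps([20, 10, 10])    # 3x CA with narrower channels
three_narrower = aggregated_peak_mbps([20, 5, 5])

print(f"20 + 20 MHz:      {two_carrier:.1f} Mbps")
print(f"20 + 10 + 10 MHz: {three_narrow:.1f} Mbps")
print(f"20 + 5 + 5 MHz:   {three_narrower:.1f} Mbps")
```

Under these assumptions, an operator without two full 20MHz blocks could still reach the Cat 6 peak by stitching together three smaller ones.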

Enabling 3x LTE CA requires two RF transceiver front ends: Qualcomm's WTR3925 and WTR3905. The WTR3925 is a single chip, 2x CA RF transceiver and you need the WTR3905 to add support for combining another carrier. Category 7 LTE is also supported by the hardware (100Mbps uplink), however due to operator readiness Qualcomm will be promoting the design primarily as category 6.

There's no integrated WiFi in either SoC. Qualcomm expects anyone implementing one of these designs to want to opt for a 2-stream, discrete solution such as the QCA6174.

Qualcomm refers to both designs as "multi-billion transistor" chips. I really hope we'll get to the point of actual disclosure of things like die sizes and transistor counts sooner rather than later (the die shot above is inaccurate).

The Snapdragon 808 is going to arrive as a successor to the 800/801, while the 810 sits above it in the stack (with a cost structure similar to the 805). We'll see some "advanced packaging" used in these designs. Both will be available in a PoP configuration, supporting up to 4GB of RAM in a stack. Based on everything above, it's safe to say that these designs are going to be a substantial upgrade over what Qualcomm offers today.

Unlike the rest of the 64-bit Snapdragon family, the 808 and 810 likely won't show up in devices until the first half of 2015 (410 devices will arrive in Q3 2014, while 610/615 will hit in Q4). The 810 will come first (and show up roughly two quarters after the Snapdragon 805, which will show up two quarters after the recently released 801). The 808 will follow shortly thereafter. This likely means we won't see Qualcomm's own 64-bit CPU microarchitecture show up in products until the second half of next year.

With the Snapdragon 808 and 810, Qualcomm rounds out almost all of its 64-bit lineup. The sole exception is the 200 series, but my guess is the pressure to move to 64-bit isn't quite as high down there.

What's interesting to me is just how quickly Qualcomm has shifted from not having any 64-bit silicon on its roadmap to a nearly complete product stack. Qualcomm appeared to stumble a bit after Apple's unexpected 64-bit Cyclone announcement last fall. Leaked roadmaps pointed to a 32-bit only future in 2014 prior to the introduction of Apple's A7. By the end of 2013 however, Qualcomm had quickly added its first 64-bit ARMv8 based SoC to the roadmap (Snapdragon 410). Now here we are, just over six months since the release of iPhone 5s and Qualcomm's 64-bit product stack seems complete. It'll still be roughly a year before all of these products are shipping, but if this was indeed an unexpected detour I really think the big story is just how quickly Qualcomm can move.

I don't know of any other silicon player that can move and ship this quickly. Whatever efficiencies and discipline Qualcomm has internally, I feel like that's the bigger threat to competing SoC vendors, not the modem IP.

Read More ...

NVIDIA Shield Gets April 2014 Update

You can now play "Titanfall" on Shield

Read More ...

Texas 17-Year Old Scams Thousands of Android Users With Fake AV App

App hit #1; Google never suspected that an 869 KB "antivirus" app by the maker of "Yolo Bilbo Swaggins" might be fake

Read More ...

Qualcomm 808 and 810, 64-bit, 20 nm Chips Will Debut in 2015

New chips add 64-bit ARM processing, 4K display and video support, advanced LTE, and more

Read More ...

Microsoft Brings Live Tiles to Infotainment Systems with "Windows in the Car" Concept

A Windows Phone screen will project its content onto the infotainment display

Read More ...

HTC Bleeds $62 Million USD in Q1 2014, Looks Ahead to Hopeful One M8 Sales

Marketing was cited as the cause of the HTC One's failure

Read More ...

Canada Delays F-35 Purchases Until 2018

Critics say this is a move to get the Conservative Party through the coming elections

Read More ...

Yahoo Seeking Four Original Comedies for Video Push

Yahoo wants four original comedy shows, source says

Read More ...

"Amazon Dash" Scanner Makes it Easy to Order Groceries via AmazonFresh

Amazon unveils new LED scanner making it easier to order via AmazonFresh

Read More ...
