The HTC One (M8) Review

HTC remains one of few Android OEMs insanely focused on design. Even dating back to the origins of the One brand in 2012 with the One X and One S, HTC clearly saw design where others were more focused on cost optimization. Only time will tell which is the more viable long term business strategy, but in the interim we’ve seen two generations of well crafted devices from what would otherwise be thought of as a highly unlikely source. With its roots in the ODM/OEM space, HTC is one of very few ODM turned retail success stories that we’ve seen come out of Taiwan. ASUS is the closest and only real comparison I can make.

As its name implies, the goal of the One brand was to have a device that anyone, anywhere in the world could ask for and know they were getting an excellent experience. Although HTC sort of flubbed the original intent by introducing multiple derivatives (One X, One S, One X+), it was the beginning of relief from the sort of Android OEM spaghetti we saw not too long ago. With the One brand, HTC brought focus to its product line.

Last year HTC took a significant step towards evolving the brand into one true flagship device, aptly named the One. Once again there were derivatives (One mini and One max), but the messaging was far less confusing this time around. If you wanted small you got the mini, if you wanted big you got the max, otherwise all you needed to ask for was the One.

With last year’s One (codenamed M7), HTC was incredibly ambitious. Embracing a nearly all metal design and opting for a much lower resolution, but larger format rear camera sensor, the One was not only bold but quite costly to make. With the premium smartphone market dominated by Apple and Samsung, and the rest of the world headed to lower cost devices, it was a risky proposition. From a product standpoint, I’d consider the M7 One a success. A year ago I found myself calling it the best Android phone I’d ever used.

It didn’t take long for my obsession to shift to the Moto X, and then the Nexus 5, although neither delivered the overall camera experience of the One. Neither device came close to equaling HTC on the design front either, although I maintain Motorola did a great job with in hand feel. Although I found myself moving to newer devices since my time with the One last year, anytime I picked up the old M7 I was quickly reminded that HTC built a device unlike any other in the Android space. It just needed a refresh.

This is our review of the new HTC One.

Read More ...

AMD Announces FirePro W9100

In what’s proving to be a busy week for GPU news, AMD has just wrapped up their webcast announcing their next flagship FirePro product. Dubbed the FirePro W9100, AMD’s latest card is their expected refresh of their FirePro product lineup to integrate the company’s recently launched Hawaii family of processors.

As Hawaii itself was a small but important refresh to Tahiti and the GCN architecture, the same can be said of the FirePro W9100 compared to the FirePro W9000. Other than some gaming-exclusive features such as TrueAudio, Hawaii’s biggest changes were the Asynchronous Compute Engine (ACE) additions that are part of GCN 1.1, the wider 4 primitive geometry pipeline, and of course the overall increase in performance and performance per watt compared to Tahiti. So from a technical perspective W9100 stands to be a relatively straightforward improvement to what W9000 has offered thus far.

AMD FirePro W Series Specification Comparison

| | AMD FirePro W9100 | AMD FirePro W9000 | AMD FirePro W8000 | AMD FirePro W7000 |
|---|---|---|---|---|
| Stream Processors | 2816 | 2048 | 1792 | 1280 |
| Texture Units | 176 | 128 | 112 | 80 |
| ROPs | ? | 32 | 32 | 32 |
| Core Clock | ? | 975MHz | 900MHz | 950MHz |
| Memory Clock | ? | 5.5GHz GDDR5 | 5.5GHz GDDR5 | 4.8GHz GDDR5 |
| Memory Bus Width | ? | 384-bit | 256-bit | 256-bit |
| VRAM | 16GB | 6GB | 4GB | 4GB |
| Double Precision | 1/2? | 1/4 | 1/4 | 1/16 |
| Transistor Count | 6.2B | 4.31B | 4.31B | 2.8B |
| TDP | ? | 274W | 189W | <150W |
| Manufacturing Process | TSMC 28nm | TSMC 28nm | TSMC 28nm | TSMC 28nm |
| Architecture | GCN 1.1 | GCN 1.0 | GCN 1.0 | GCN 1.0 |
| Warranty | 3-Year | 3-Year | 3-Year | 3-Year |
| Launch Price | ? | $3999 | $1599 | $899 |

Speaking of the memory bus, we don’t have the specific width or clockspeeds there either, but we know that AMD has packed W9100 with a ton of memory. 16GB of memory to be precise, which would be as much memory as Hawaii would be able to handle using current generation 8Gb GDDR5 modules. Compared to even W9000, which was relatively large for its time at 6GB, this is huge for a workstation card.

Meanwhile it’s interesting to note that while AMD hasn’t published clockspeed numbers they have published rough performance numbers for both FP32 and FP64. FP32 is rated for over 5 TFLOPS and FP64 is rated for over 2 TFLOPS. The math on that doesn’t work out conclusively – if it’s anything like NVIDIA’s GK110, then there may be additional power requirements that influence performance under FP64 mode – but these numbers point to Hawaii having 1/2 rate FP64 performance. As a reminder 290X was rated at 1/8, so this would mean Hawaii has a much higher rate than what was enabled on AMD’s consumer cards. In fact this would put Hawaii’s native FP64 rate (as a ratio of FP32 performance) as being higher than any other GPU, the next-closest GPU being NVIDIA’s GK110 at 1/3 rate FP64.
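To see why the rated figures point towards a 1/2 rate, here is a rough back-of-the-envelope sketch. It assumes the rated numbers are in TFLOPS (which the stream processor count implies), that each stream processor retires one fused multiply-add (2 FLOPs) per clock, and that FP64 throughput is a fixed fraction of FP32. The function names are ours for illustration, and none of this is AMD's published math.

```python
from fractions import Fraction

STREAM_PROCESSORS = 2816     # W9100, from the specification table
FP32_FLOOR_TFLOPS = 5.0      # "over 5", assumed to be TFLOPS
FP64_FLOOR_TFLOPS = 2.0      # "over 2", assumed to be TFLOPS
FLOPS_PER_SP_PER_CLOCK = 2   # assumption: one fused multiply-add per clock


def clock_mhz_for(fp32_tflops: float) -> float:
    """Core clock (MHz) needed to reach a given FP32 throughput."""
    flops_per_clock = STREAM_PROCESSORS * FLOPS_PER_SP_PER_CLOCK
    return fp32_tflops * 1e12 / flops_per_clock / 1e6


def min_clock_for_rate(rate: Fraction) -> float:
    """Lowest clock (MHz) that satisfies both rated floors at an FP64:FP32 rate."""
    fp32_needed = max(FP32_FLOOR_TFLOPS, FP64_FLOOR_TFLOPS / float(rate))
    return clock_mhz_for(fp32_needed)


for rate in (Fraction(1, 2), Fraction(1, 3), Fraction(1, 4)):
    print(f"{rate} rate: needs at least {min_clock_for_rate(rate):.0f} MHz")
```

Under these assumptions a 1/2 rate only needs a core clock of roughly 888MHz to clear both floors, while a 1/3 rate would need roughly 1065MHz, which is higher than the W9000's 975MHz. That makes the half-rate reading the more plausible one, though not a conclusive one.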

Beyond that, we don’t have any further details on the card at this time. W9100 is not launching today – we hear the launch should be soon after – so availability and pricing have not been released thus far. The best we can do is point to the W9000’s launch price of $3999 as some kind of guidance on where it may come in.

Shifting gears for a bit, while AMD was careful on releasing details ahead of the launch of the W9100, they spent some time going in depth into how the card fits into their long-term plans, and what markets they’re pursuing with the card. FirePro continues to pull double duty as AMD’s workstation graphics card and workstation compute card, centered around the capabilities of OpenCL. So AMD is looking to put together a product that can serve both markets well, especially in the case of cross-over applications that make use of both aspects of the card.

Of those aspects, AMD is definitely pushing the compute side harder this time around as opposed to the FirePro W9000 launch in 2012, as the nature of the market has changed. Traditional graphics-heavy professional applications (e.g. AutoCAD) are still as power hungry as ever – if not more so due to the rise of 4K monitors – and meanwhile the amount of compute work being generated by these programs is increasing. This goes for both programs using compute in a more straightforward way, such as running mechanical simulations, and programs leveraging compute for graphics related tasks such as video encoding and image processing. And for both of these tasks AMD is banking on their 16GB of VRAM giving them a performance advantage due to the larger working sets such a large memory configuration can hold.

On that note, AMD has published a few benchmarks ahead of the launch of the card. But as always, these are best taken with a grain of salt as they’re almost certainly best-case numbers for their hardware and software stack.

Moving on, along with the announcement of the W9100, AMD has also announced that they will be introducing a new workstation branding initiative to go with the card. Dubbed the “Ultra Workstation” initiative, the program will have 3 tiers of workstations, indicating how many GPUs they have in them and what kind of tasks AMD is recommending for them based on resource requirements and how well major applications scale to that many GPUs.

All of the Ultra Workstation tiers will have a minimum of 32GB of system memory, at least one 8 core CPU, and of course at least one W9100.

Finally, the launch of the W9100 also brings with it a brief update on AMD’s overall transformation in their product offerings, and their current market share. AMD’s share of the professional GPU market is up to 18%, which is still well off of their heyday years ago, but a solid improvement from where they were at the launch of the W9000. To that end professional graphics and compute continues to represent a major growth opportunity for the company, as the other 82% of that market share belongs to NVIDIA, and stealing even a small part of it would significantly improve AMD’s position. Next to their APU offerings – including semi-custom designs and ARM designs – the discrete GPU market for professional graphics is AMD’s final target for significant growth.

Part of that growth will in turn come from essential OEM wins. AMD’s win of the GPU contract for Apple’s recently launched Mac Pro is a big deal for the company, as you’d expect, as the Mac Pro is a very prestigious win. It remains to be seen just what it means for sales volumes of AMD FirePro products, but it’s a win that AMD can promote to software developers and potential customers alike.

Read More ...

Asustor AS-304T: 4-Bay Intel Evansport NAS Review

Intel's Evansport NAS platform was meant to take on ARM's dominance in the low to mid-range consumer / SOHO NAS market. We covered it in detail while reviewing the Thecus N2560, a 2-bay solution. How does the platform fare in a unit with four bays? Read on for our report from the evaluation of Asustor's AS-304T to find out.

Read More ...

NVIDIA Updates GPU Roadmap; Unveils Pascal Architecture For 2016

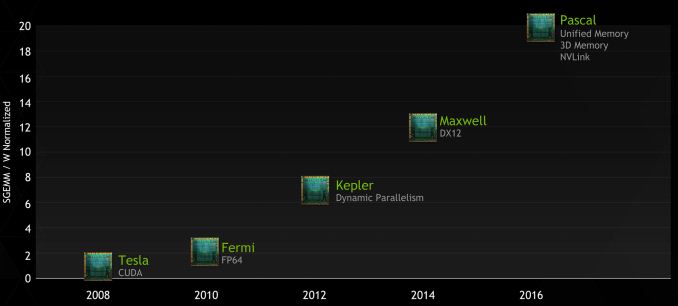

In something of a surprise move, NVIDIA took to the stage today at GTC to announce a new roadmap for their GPU families. With today’s announcement comes news of a significant restructuring of the roadmap that will see GPUs and features moved around, and a new GPU architecture, Pascal, introduced in the middle.

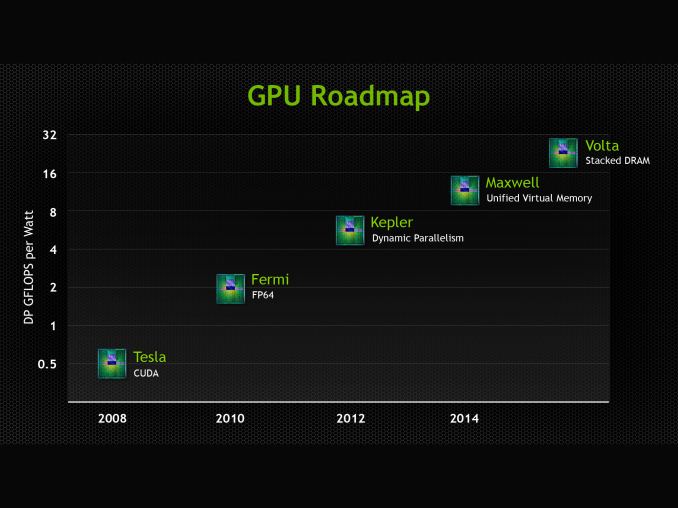

We’ll get to Pascal in a second, but to put it into context let’s first discuss NVIDIA’s restructuring. At GTC 2013 NVIDIA announced their future Volta architecture. Volta, which had no scheduled date at the time, would be the GPU after Maxwell. Volta’s marquee feature would be on-package DRAM, utilizing Through Silicon Vias (TSVs) to die stack memory and place it on the same package as the GPU. Meanwhile in that roadmap NVIDIA also gave Maxwell a date and a marquee feature: 2014, and Unified Virtual Memory.

As of today that roadmap has more or less been thrown out. No products have been removed, but what Maxwell is and what Volta is have changed, as has the pacing. Maxwell for its part has “lost” its unified virtual memory feature. This feature is now slated for the chip after Maxwell, and in the meantime the closest Maxwell will get is the software based unified memory feature being rolled out in CUDA 6. Furthermore NVIDIA has not offered any further details on second generation Maxwell (the higher performing Maxwell chips) and how those might be integrated into professional products.

As far as NVIDIA is concerned, Maxwell’s marquee feature is now DirectX 12 support (though even the extent of this isn’t perfectly clear), and with the shipment of the GeForce GTX 750 series, Maxwell is shipping in 2014 as scheduled. We’re still expecting second generation Maxwell products, but at this juncture it does not look like we should be expecting any additional functionality beyond what Big Kepler + 1st Gen Maxwell can achieve.

Meanwhile Volta has been pushed back and stripped of its marquee feature. Its on-package DRAM has been promoted to the GPU before Volta, and while Volta still exists, publicly it is a blank slate. We do not know anything else about Volta beyond the fact that it will come after the 2016 GPU.

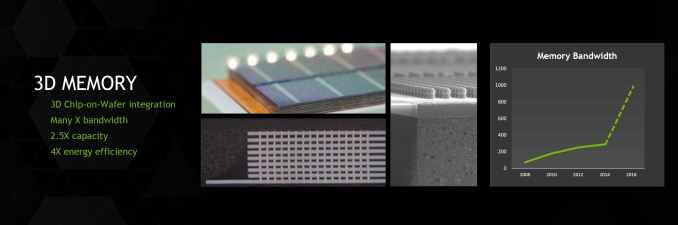

Which brings us to Pascal, the 2016 GPU. Pascal is NVIDIA’s latest GPU architecture and is being introduced in between Maxwell and Volta. In the process it has absorbed old Maxwell’s unified virtual memory support and old Volta’s on-package DRAM, integrating those feature additions into a single new product.

Meanwhile NVIDIA hasn’t said anything else directly about the unified memory plans that Pascal has inherited from old Maxwell. However after we get to the final pillar of Pascal, how that will fit in should make more sense.

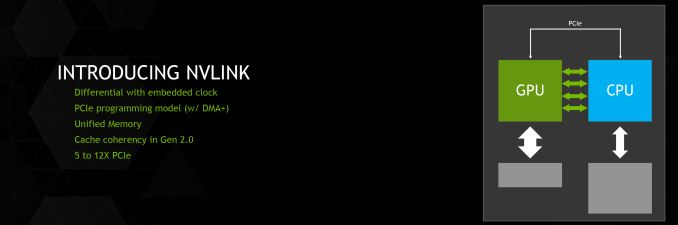

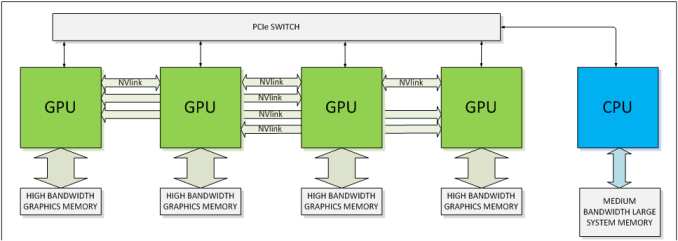

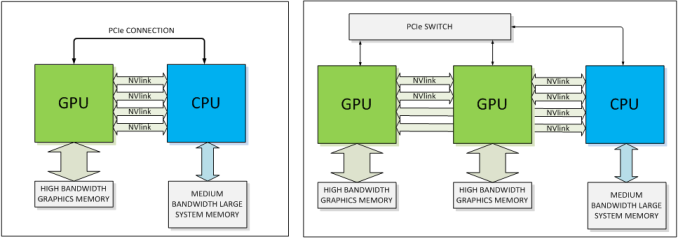

Coming to the final pillar then, we have a brand new feature being introduced for Pascal: NVLink. NVLink, in a nutshell, is NVIDIA’s effort to supplant PCI-Express with a faster interconnect bus. From the perspective of NVIDIA, who is looking at what it would take to allow compute workloads to better scale across multiple GPUs, the 16GB/sec made available by PCI-Express 3.0 is hardly adequate, especially when compared to the 250GB/sec+ of memory bandwidth available within a single card. PCIe 4.0 in turn will eventually bring higher bandwidth yet, but this still is not enough. As such NVIDIA is pursuing their own bus to achieve the kind of bandwidth they desire.

The end result is a bus that looks a whole heck of a lot like PCIe, and is even programmed like PCIe, but operates with tighter requirements and a true point-to-point design. NVLink uses differential signaling (like PCIe), with the smallest unit of connectivity being a “block.” A block contains 8 lanes, each rated for 20Gbps, for a combined bandwidth of 20GB/sec. In terms of transfers per second this puts NVLink at roughly 20 gigatransfers/second, as compared to an already staggering 8GT/sec for PCIe 3.0, indicating just how high a frequency this bus is planned to run at.
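The block bandwidth figure follows directly from the lane count and per-lane rate. The sketch below works through that arithmetic and compares it with a PCIe 3.0 x16 slot. It assumes the 20Gbps NVLink figure is a payload rate (no encoding overhead has been disclosed), and it uses PCIe 3.0's 128b/130b encoding for the comparison; the helper name is ours.

```python
def link_bandwidth_gb_per_s(lanes: int, gbit_per_lane: float,
                            encoding_efficiency: float = 1.0) -> float:
    """Unidirectional bandwidth of a link in gigabytes per second."""
    return lanes * gbit_per_lane * encoding_efficiency / 8


# One NVLink block: 8 lanes at 20Gbps each (overhead unknown, assumed none).
nvlink_block = link_bandwidth_gb_per_s(lanes=8, gbit_per_lane=20)

# PCIe 3.0 x16: 16 lanes at 8GT/sec each, with 128b/130b encoding.
pcie3_x16 = link_bandwidth_gb_per_s(lanes=16, gbit_per_lane=8,
                                    encoding_efficiency=128 / 130)

print(f"NVLink block: {nvlink_block:.2f} GB/sec")
print(f"PCIe 3.0 x16: {pcie3_x16:.2f} GB/sec")
print(f"Per-lane signaling ratio: {20 / 8:.1f}x")
```

In other words, a single 8-lane block already edges out a full 16-lane PCIe 3.0 slot, because each lane runs at 2.5 times the signaling rate.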

Multiple blocks in turn can be teamed together to provide additional bandwidth between two devices, or those blocks can be used to connect to additional devices, with the number of blocks depending on the SKU. The actual bus is purely point-to-point – no root complex has been discussed – so we’d be looking at processors directly wired to each other instead of going through a discrete PCIe switch or the root complex built into a CPU. This makes NVLink very similar to AMD’s HyperTransport, or Intel’s Quick Path Interconnect (QPI). This includes the NUMA aspects of not necessarily having every processor connected to every other processor.
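The NUMA point is easier to see with a toy example. The sketch below models a purely hypothetical four-GPU ring (NVIDIA has not described any specific topology) and counts how many point-to-point links a transfer has to cross between two processors. The `LINKS` layout and the `hops` helper are illustrative only.

```python
from collections import deque

# Hypothetical topology: four GPUs wired in a ring, two links per GPU.
LINKS = {
    "gpu0": ["gpu1", "gpu3"],
    "gpu1": ["gpu0", "gpu2"],
    "gpu2": ["gpu1", "gpu3"],
    "gpu3": ["gpu2", "gpu0"],
}


def hops(links, src, dst):
    """Breadth-first search for the fewest links between two processors."""
    seen = {src}
    queue = deque([(src, 0)])
    while queue:
        node, dist = queue.popleft()
        if node == dst:
            return dist
        for peer in links[node]:
            if peer not in seen:
                seen.add(peer)
                queue.append((peer, dist + 1))
    return None  # no path between the two processors


print(hops(LINKS, "gpu0", "gpu1"))  # directly wired neighbours
print(hops(LINKS, "gpu0", "gpu2"))  # has to route through another GPU
```

In this layout neighbouring GPUs are one link apart, while GPUs on opposite sides of the ring are two links apart, so access cost depends on where the data lives, much as it does on a multi-socket HyperTransport or QPI system.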

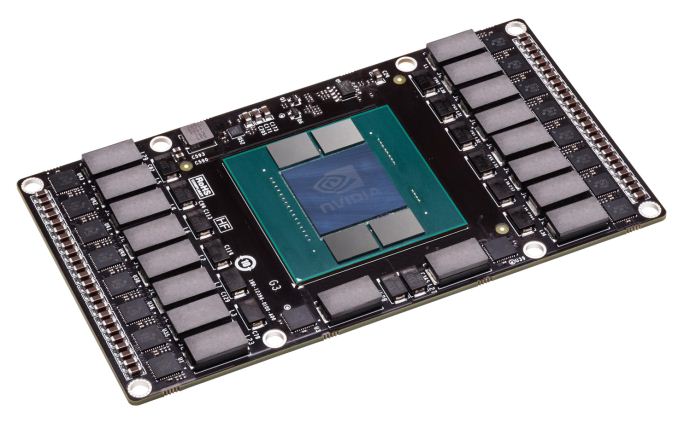

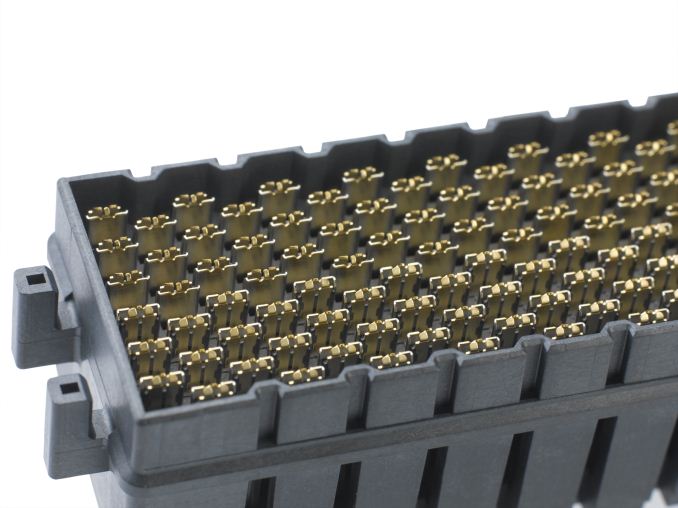

But the rabbit hole goes deeper. To pull off the kind of transfer rates NVIDIA wants to accomplish, the traditional PCI/PCIe style edge connector is no good; if nothing else the lengths that can be supported by such a fast bus are too short. So NVLink will be ditching the slot in favor of what NVIDIA is labeling a mezzanine connector, the type of connector typically used to sandwich multiple PCBs together (think GTX 295). We haven’t seen the connector yet, but it goes without saying that this requires a major change in motherboard designs for the boards that will support NVLink. The upside of this however is that with this change and the use of a true point-to-point bus, what NVIDIA is proposing is for all practical purposes a socketed GPU, just with the memory and power delivery circuitry on the GPU instead of on the motherboard.

NVIDIA’s Pascal test vehicle is one such example of what a card would look like. We cannot see the connector itself, but the basic idea is that it will lay down on a motherboard parallel to the board (instead of perpendicular like PCIe slots), with each Pascal card connected to the board through the NVLink mezzanine connector. Besides reducing trace lengths, this has the added benefit of allowing such GPUs to be cooled with CPU-style cooling methods (we’re talking about servers here, not desktops) in a space efficient manner. How many NVLink mezzanine connectors are available would of course depend on how many the motherboard design calls for, which in turn will depend on how much space is available.

One final benefit NVIDIA is touting is that the new connector and bus will improve both energy efficiency and energy delivery. When it comes to energy efficiency NVIDIA is telling us that per byte, NVLink will be more efficient than PCIe – this being a legitimate concern when scaling up to many GPUs. At the same time the connector will be designed to provide far more than the 75W PCIe is spec’d for today, allowing the GPU to be directly powered via the connector, as opposed to requiring external PCIe power cables that clutter up designs.

With all of that said, while NVIDIA has grand plans for NVLink, it’s also clear that PCIe isn’t going to be completely replaced anytime soon on a large scale. NVIDIA will still support PCIe – in fact the blocks can talk PCIe or NVLink – and even in NVLink setups there are certain command and control communiques that must be sent through PCIe rather than NVLink. In other words, PCIe will still be supported across NVIDIA's product lines, with NVLink existing as a high performance alternative for the appropriate product lines. The best case scenario for NVLink right now is that it takes hold in servers, while workstations and consumers would continue to use PCIe as they do today.

Meanwhile, though NVLink won’t even be shipping until Pascal in 2016, NVIDIA already has some future plans in store for the technology. Along with a GPU-to-GPU link, NVIDIA’s plans include a more ambitious CPU-to-GPU link, in large part to achieve the same data transfer and synchronization goals as with inter-GPU communication. As part of the OpenPOWER consortium, NVLink is being made available to POWER CPU designs, though no specific CPU has been announced. Meanwhile the door is also left open for NVIDIA to build an ARM CPU implementing NVLink (Denver perhaps?) but again, no such product is being announced today. If it did come to fruition though, then it would be similar in concept to AMD’s abandoned “Torrenza” plans to utilize HyperTransport to connect CPUs with other processors (e.g. GPUs).

Finally, NVIDIA has already worked out some feature goals for what they want to do with NVLink 2.0, which would come on the GPU after Pascal (which by NV’s other statements should be Volta). NVLink 2.0 would introduce cache coherency to the interface and processors on it, which would allow for further performance improvements and the ability to more readily execute programs in a heterogeneous manner, as cache coherency is a precursor to tightly shared memory.

Wrapping things up, with an attached date for Pascal and numerous features now billed for that product, NVIDIA looks to have set the wheels in motion for developing the GPU they’d like to have in 2016. The roadmap alteration we’ve seen today is unexpected to say the least, but Pascal is on much more solid footing than old Volta was in 2013. In the meantime we’re still waiting to see what Maxwell will bring NVIDIA’s professional products, and it looks like we’ll be waiting a bit longer to get the answer to that question.

Read More ...

Spotify Introduces $4.99 Premium Subscription for College Students in the US

Spotify is already one of the most popular music streaming services on the planet with over 24 million active users, and over a fourth of them (~6 million) being Spotify Premium subscribers. Spotify was in the news back in January when it introduced ad-supported, no-limits streaming on the desktop and across iOS and Android devices, just in time for the launch of the Beats Music streaming service.

Today's announcement is squarely targeted towards the college demographic in the US, which can now get an ad-free, unlimited streaming, Spotify Premium subscription for $4.99 a month as long as they provide proof of their current enrollment status at a valid educational institution in the United States. The offer works with both new and existing accounts, and current Premium subscribers can transition to the discounted plan at the end of their current billing cycle. So long as their student status is maintained, the offer can be extended up to a maximum of three times.

I have personally been a huge fan of Spotify and a Premium subscriber for over 2 years now, and would have jumped at such an opportunity, had it presented itself while I was still in college. Those amongst us still lucky to be in college can follow the link below for instant gratification.

Source: Spotify, Terms & Conditions

Read More ...

Android has 97 Percent of Mobile Malware, But Nearly None in the U.S.

But almost all of that comes from third party apps stores in Asia and the Middle East

Read More ...

World Trade Org. to China on Rare Earth Metals: Stop Breaking the Law

... or else we'll do something really bad to you (except that we can't)

Read More ...

New Encryption System "Mylar" Encrypts Data in Browser Before Reaching Server

This will stop websites from leaking data

Read More ...

BlackBerry Executive Ordered to Fulfill 6-Month Resignation Term Before Leaving for Apple

He tried to leave ahead of time despite his contract

Read More ...

F-35 Lightning II Chosen by South Korea as More Software Delays Loom

F-35 program gets some good news and some bad news

Read More ...

Sprint CEO Dan Hesse Tips Nationwide HD Voice Rollout for July

"Voice is still the killer app" -- Sprint CEO Dan Hesse

Read More ...

Volvo "Flybrid" KERS System Promises to Reduce Fuel Consumption by 25%

Volvo testing a prototype of the KERS system right now

Read More ...

