An Update to Kingston SSDNow V300: A Switch to Slower Micron NAND

If you are active on forums or recently bought a Kingston SSDNow V300 SSD, there is a chance that you're aware of its performance issues. In short, users have been reporting lower performance (up to a 300MB/s difference in AS-SSD sequential read speed) on drives with the 506 firmware pre-installed, which is the version retailers currently sell. I've received numerous emails regarding this issue from readers looking for answers, and now I finally have them.

Like many SSD OEMs, Kingston buys its NAND in wafers and does its own validation and packaging. As a result, figuring out the original manufacturer is not possible without the help of Kingston because there are no public data sheets or part number decoders to be found. I've never been a big fan of OEM-packaged NAND because OEMs tend to be more tight-lipped about the specifics of the NAND and it's easier to silently switch suppliers, although I do see the economic reasons (NAND is cheaper to buy in wafers).

So far there's not been much harm from this but I've been fairly certain that someone would sooner or later play dirty and use NAND packaging as a way to mask inferior NAND. Unfortunately that day has come, and as you can guess the OEM in question is Kingston and the product is their mainstream V300 SSD.

The first generation V300 (which was sampled to media) used Toshiba's 19nm Toggle-Mode 2.0 NAND but some time ago Kingston silently switched to Micron's 20nm asynchronous NAND. The difference between the two is that the Toggle-Mode 2.0 interface in the Toshiba NAND is good for up to 200MB/s, whereas the asynchronous interface is usually good for only ~50MB/s. The reason I say "usually" is that Kingston wasn't willing to go into details about the speed of the asynchronous NAND they use and the ONFI spec doesn't list maximum bandwidth for the single data rate (i.e. asynchronous) NAND. However, even though we lack the specifics of the asynchronous NAND, it's certain that we are dealing with slower NAND here and Kingston admitted that the Micron NAND isn't capable of the same performance as the older Toshiba NAND.

Comparison of Kingston V300 Revisions

| Revision | Original (no longer available) | New (currently available) |
|---|---|---|
| Pre-Installed Firmware | 505A | 506A |
| NAND | Toshiba 19nm Toggle-Mode 2.0 | Micron 20nm asynchronous |
| NAND Interface Bandwidth | 200MB/s | ~50MB/s (?) |
| AS-SSD Incompressible Sequential Read | ~475MB/s | ~170MB/s |
| AS-SSD Incompressible Sequential Write | ~150MB/s | ~85MB/s |
| AS-SSD Incompressible 4K Read | ~20MB/s | ~15MB/s |
| AS-SSD Incompressible 4K Write | ~110MB/s | ~65MB/s |

* AS-SSD performance data based on screenshots provided by a reader

I have to say I'm disappointed. I thought the industry had already learned from its mistakes and that a switch in NAND supplier shouldn't be done silently (remember the hullabaloo OCZ caused when they silently switched from 34nm to 25nm NAND in Vertex 2?).

Kingston assured me that this wasn't an intentional attempt to screw customers but a strategy decision made in order to stay within the bill of materials. Kingston was aware that they would have to switch suppliers at some point and in fact they are now looking for yet another supplier (likely Toshiba again). Frankly, I don't see the supplier change as an issue; the problem is that it was done without any notice and there's no public indication of what sort of NAND you'll get.

Kingston did say that they considered updating the name to V305 or similar to distinguish the two but in the end decided against that. In our talks we agreed that it wasn't a very good decision. It's not fair to sample media with one thing and then later start selling something else. Not everyone reads reviews but the buyers who do expect a certain level of performance and it's obvious that they will feel cheated if their unit performs significantly worse. I hope this is just a one-time occasion because that's perhaps excusable, but if this becomes a habit things will start to be fishy. Ultimately, the V300 wasn't a particularly fast SF-2281 SSD when it launched, but with the NAND update it's become quite a bit slower than other alternatives.


Color Gamut in Smartphones: Why Bigger isn't Always Better

Post-MWC, with the launch of at least two of the major high-end flagships in the smartphone space, the basics, such as high-DPI displays, the latest SoC, and a plethora of RAM, are becoming increasingly easy to get from OEMs. Therefore, other aspects of the smartphone become increasingly important. One of the most misunderstood of these is a display’s accuracy. Much of this can be chalked up to a lack of existing literature on the subject when it comes to smartphone display quality, which made it easy for subjective evaluation to be the rule.

Of course, even in the PC display space, good displays were incredibly rare because of the race to the bottom for cost. Because reviewers simply didn’t highlight display quality/calibration quality in any objective manner, PC OEMs could cut costs by not calibrating displays and using cheaper panels because people adapted to the color, whether it was accurate or not. The end result was that the early days of the smartphone display race were filled with misinformation, and it has only been recently that smartphone OEMs have started to prioritize more than just contrast and resolution.

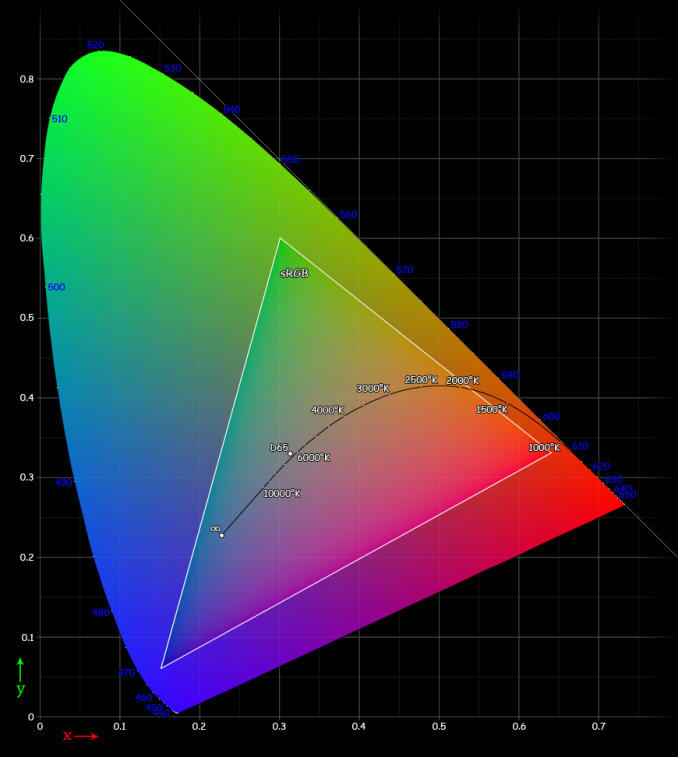

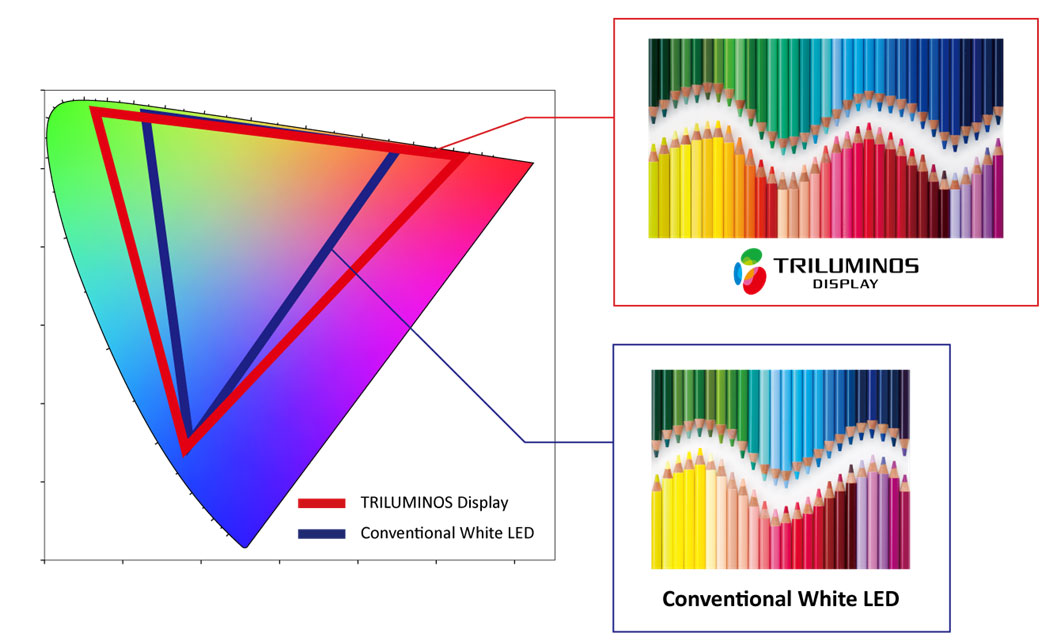

Perhaps one of the greatest misconceptions in evaluating display quality is gamut. Many people associate larger gamut with better display quality, but taking this logic to the extreme results in extremely unrealistic colors. The truth, as always, lies somewhere in between. Too large or too small of a gamut makes for inaccurate color reproduction. This is where a great deal of the complexity lies, as many people can be confused as to why too large of a display gamut is a bad thing. This certainly isn't helped by marketing, which pushes the idea of greater gamut equating to better display quality.

The most important fact to remember is that none of the mobile OSes are aware of color space at all. There is no true color management system, so the color displayed is solely based upon a percentage of the maximum saturation that the display exposes to the OS. For a 24-bit color display, this is a range of 0-255 for each of the RGB subpixels. Thus, 255 for all three color channels will yield white, and 0 on all three color channels yields black, and all the combinations of color in between will give the familiar 16.7 million colors value that is cited for a 24-bit display. It's important to note that color depth and color gamut are independent. Color gamut refers to the range of colors that can be displayed, while color depth refers to the number of gradations in color that can be displayed.
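As a quick check on the 16.7 million figure, here is a minimal sketch of the arithmetic. The variable names are my own; the only inputs are the 8 bits per channel and three channels described above.

```python
# Count the distinct code values a display can be sent at a given bit depth.
BITS_PER_CHANNEL = 8   # a 24-bit display: 8 bits each for R, G and B
CHANNELS = 3

levels_per_channel = 2 ** BITS_PER_CHANNEL      # 256 steps, i.e. 0-255
total_colors = levels_per_channel ** CHANNELS   # every R/G/B combination

print(levels_per_channel, total_colors)
```

Note that nothing in this count says which physical colors those code values map to; that is what gamut determines.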

Reading carefully, it’s obvious that at no point in the past paragraph is there any reference to the distribution of said colors. This is a huge problem, because displays can have differing peaks for red, green, and blue. This can cause strange effects, as what appears to be pure blue on one display can be a cyan or turquoise on another display. That’s where standards come in, and that’s why quality of calibration can distinguish one display from another. For mobile displays and PC displays, the standard gamut is sRGB. While there’s plenty to be said of wider color gamuts such as Adobe RGB and Rec. 2020’s color space standards for UHDTV, the vast majority of content simply isn’t made for such wide gamuts. Almost everything assumes sRGB due to its sheer ubiquity.

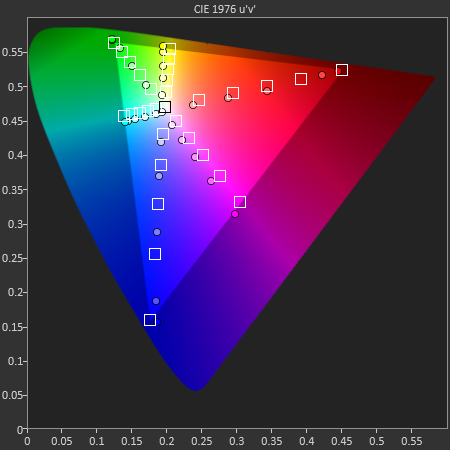

While it may seem that a display with color gamut larger than sRGB would simply mean that sRGB colors were covered without oversaturation, the OS’ lack of colorspace awareness means that this isn’t true. Because the display is simply given commands for color from 0 to 255, the resulting image would have an extra saturation effect. Assuming that the saturation curve from 0 to 255 is linear, not a single color in the image would actually match the color originally intended, and that’s true even for colors that lie well within the target color space. This is best exemplified by the saturation sweep test as seen below. Despite the relatively even spacing, many of the saturations aren't correct for a target color space.
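To make the oversaturation effect concrete, the sketch below sends the same RGB values to two hypothetical panels, one with sRGB primaries and one with Adobe RGB primaries, and computes the CIE xy chromaticity each would actually show. The primaries and D65 white point are the published values for those two standards; everything else (function names, the sample color, treating the values as linear light and ignoring gamma) is an assumption made for illustration, not how any particular phone behaves.

```python
# CIE xy chromaticities of the primaries and the D65 white point.
D65 = (0.3127, 0.3290)
SRGB = {"R": (0.64, 0.33), "G": (0.30, 0.60), "B": (0.15, 0.06)}
ADOBE_RGB = {"R": (0.64, 0.33), "G": (0.21, 0.71), "B": (0.15, 0.06)}

def xy_to_xyz(x, y):
    """Convert a chromaticity to XYZ with luminance Y normalized to 1."""
    return (x / y, 1.0, (1.0 - x - y) / y)

def det3(m):
    """Determinant of a 3x3 matrix given as a list of rows."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)

def rgb_to_xyz_matrix(primaries, white=D65):
    """Build the linear RGB -> XYZ matrix for a panel from its primaries."""
    cols = [xy_to_xyz(*primaries[k]) for k in "RGB"]
    m = [[cols[c][r] for c in range(3)] for r in range(3)]
    w = xy_to_xyz(*white)
    d = det3(m)
    # Cramer's rule: scale each primary so that R=G=B=1 lands on the white point.
    scales = []
    for c in range(3):
        mc = [row[:] for row in m]
        for r in range(3):
            mc[r][c] = w[r]
        scales.append(det3(mc) / d)
    return [[m[r][c] * scales[c] for c in range(3)] for r in range(3)]

def displayed_xy(rgb_linear, primaries):
    """Chromaticity a panel shows for linear RGB values in the range 0..1."""
    m = rgb_to_xyz_matrix(primaries)
    X, Y, Z = (sum(m[r][c] * rgb_linear[c] for c in range(3)) for r in range(3))
    total = X + Y + Z
    return (X / total, Y / total)

# The same unmanaged value sent to both panels (a hypothetical muted green).
sample = (0.2, 0.8, 0.2)
print("sRGB panel:     ", displayed_xy(sample, SRGB))
print("Adobe RGB panel:", displayed_xy(sample, ADOBE_RGB))
```

With no color management in between, the wider-gamut panel renders the identical code value as a different, more saturated green, even though the requested color sits comfortably inside sRGB.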

On the flip side, another issue is when the display is constrained to sRGB, but the OEM applies a compression of saturation at the high end to try and make the colors "pop", even though it too reduces color accuracy of the display, as seen below on all but the magenta saturation sweep.

Ultimately, such quibbles over color gamut and the resultant color accuracy of the display may not be able to override the dominant discourse of subjectively evaluated color in a display, and many people prefer the look of an oversaturated display to that of a properly calibrated one. But within the debates that will undoubtedly take place over such a subject, it is crucial to keep in mind that regardless of personal opinion on display colors, color accuracy is a quantitative, objective analysis of display quality. While subjectively, one may prefer a display that has a color gamut larger than sRGB, objectively, such a display isn't accurate. Of course, including a vivid display profile isn't a problem, but there should always be a display profile that makes for accurate color.


Apple Announces CarPlay: iOS in the Car Arriving in 2014

Earlier this morning Apple officially branded its iOS in the Car initiative as CarPlay. At a high level CarPlay allows iPhone 5/5c/5s users to access certain apps on their phone via an in-car infotainment display. It's effectively iPod integration on steroids. One of the biggest departures from the iPod integration efforts we've seen in previous vehicles is that the user interface appears to be consistent across vehicles. Large, iOS 7-styled application icons adorn the UI rather than something that varies by auto maker. There's a virtual home button as well as icons for phone, music, iOS Maps and messages. Apple's CarPlay website lists a handful of apps that are supported by the technology, with the promise of more to come.

Interacting with CarPlay can be done via buttons/knobs or directly by touch (if available). It's important to note that CarPlay likely won't replace the need for checking an expensive box on your car's option list. The OEM still needs to provide the underlying hardware/interface; CarPlay simply leverages the display and communicates over Apple's Lightning cable.

The technology will show up in select new vehicles arriving this year. Ferrari, Honda, Hyundai, Jaguar, Mercedes-Benz and Volvo are launch partners, with the first cars likely being shown off at this year's Geneva Motor Show later this week.

Apple's approach to CarPlay seems to be more integrated than previous efforts; however, it's unclear how strict/specific the hardware requirements are for automakers. We'll have to wait and see what actual implementations end up looking like, but I rarely encounter a car maker that seems to "get it" when it comes to integrating a fast and intuitive infotainment system. CarPlay clearly attempts to at least control the Apple side of the interface; I just can't help but wonder if the right solution for revolutionizing compute in vehicles is one step below at the platform level. In fact, if we look at Apple's preferred solution to most problems it almost always involves controlling the complete experience rather than just one portion of it.

Google is of course working on its own in-car solution based on Android. The Open Automotive Alliance is composed of Audi, GM, Honda, Hyundai, Google and NVIDIA. NVIDIA's inclusion implies more of a platform level play. The first Android powered cars will show up later this year.


AMD updates driver and programming tools roadmap for supporting HSA features in Kaveri

In our Kaveri review, we discussed HSA and that Kaveri brings many exciting hardware features such as true CPU/GPU shared memory (hUMA) and others such as heterogeneous queueing (hQ). However, at launch they were not really exposed in the drivers. AMD has now provided an update on the driver roadmap for exposing the hardware features to various compute APIs.

Today AMD is expected to release a beta driver for Windows that exposes some shared memory extensions to OpenCL. Currently, AMD ships an OpenCL 1.2 implementation for Kaveri. The OpenCL 1.2 standard by itself does not really expose shared memory features properly but OpenCL 2.0 will have more robust support. AMD does not have a full OpenCL 2.0 driver yet, but today they will be providing some of the 2.0 functionality as extensions in their current OpenCL 1.2 driver. I don't have the details on the exact extensions supported, and I will update the article when I do.

However, OpenCL is only part of the story. Kaveri's promise is that it will provide driver support for the HSA software stack, which includes components such as a compiler for HSAIL and an HSA runtime. The HSA software stack will enable high-level languages and simplified programming models, and the exciting HSA developments appear to be happening on Linux first. In Q2 2014, AMD will release a beta HSA software stack for Linux. The Linux HSA stack release will be around the same time as the release of the Berlin APU for servers and the Bald Eagle APU for embedded applications. These are both variations of Kaveri for different markets and in both of these markets Linux plays a very important role.

The HSA runtime stack for Linux will enable compiler writers and low-level library developers to start developing for HSA. The official HSA runtime API specifications are not finalized yet, and this release will be based upon prototype specifications. However, I think the prototype driver will be close enough to the final specifications that it will not matter much to developers.

Most developers are not interested in the base HSA stack and instead will prefer higher-level languages and tools. Several programming languages and tools will be released this year for targeting HSA. First, AMD will release an HSA-enabled version of their Java Aparapi library. Currently Aparapi targets OpenCL 1.2 for all OpenCL capable systems, and the mentioned release will include optimizations specific to HSA enabled systems. The HSA-enabled release of Aparapi is already under development and testing and should be released soon after the HSA stack release.

At some point this year, Multicoreware is expected to release the HSA backend for their C++ AMP implementation for Linux. Finally, AMD also mentioned that an extension of GCC is being worked on with SUSE that compiles C/C++/Fortran OpenMP code to generate code for HSA. I am not sure about the version of GCC and the version of OpenMP supported, or whether this will depend on non-standard directives, and I will update the article if I get this information.

To summarize, AMD is continuing to work on exposing Kaveri's differentiating hardware features such as hUMA and hQ to various programming languages and tools. This year we should see the HSA stack and associated tools and languages stabilizing and becoming very usable for Linux developers, especially for server and embedded markets. For Windows, at least applications using OpenCL will be able to tap into some of Kaveri's new hardware features and more options should be coming down the pipeline.

UPDATE: The new Windows driver with preview support for some OpenCL 2.0 type functionality is now downloadable from here. The driver has very specific hardware requirements, such as requiring an A10-7850K in an Asus A88X-PRO motherboard and 8GB of RAM. More details about the requirements and the OpenCL extensions supported can be found in the OpenCL 2.0 preview driver download.


Interacting with HTPCs: IOGEAR and SIIG Options Reviewed

There are many options in the market for users wanting to interact with HTPCs and media streamers. In this short piece, we review the keyboard / trackball / touchpad options from IOGEAR and SIIG and look at how they stack up against one of the most popular products in the category, the Logitech K400.


Twitch Plays Pokémon beats Pokémon

A departure from the normal tech news, but for those not keeping tabs on the phenomenon that is Twitch Plays Pokémon, it is an interesting social experiment via the Twitch platform. Users are encouraged to enter commands into the chat box to control Red, the protagonist of the first generation of Pokémon games. At the peak, an army of 100,000 users was telling the in-game character where to go. Plenty of hilarity has come from this method, as well as frustration, as users intent on causing havoc also entered the mix (the use of the start button was adjusted, for example).

Aside from issues such as taking six hours to navigate a ledge and accidentally releasing 12 Pokémon in a single day, the extraordinary happened: millions of users at millions of keyboards managed to beat the final sequence. After 22 attempts at the Elite Four, that combination of users powered through. Not only this, but the fear of accidentally starting a new game after the end sequence came to naught.

The final moment was captured in all its glory:

Many thanks to James Croft for the image

The task took 16 days 7 hours 45 minutes, or 391 hours in total. This is compared to my individual personal best of 13 hours, to put it into perspective. The final team of Pokémon were as follows:

Omastar, Level 51

Pidgeot, Level 69

Venomoth, Level 39

Zapdos, Level 81

Lapras, Level 30

Nidoking, Level 53

For a brief update of the adventure, this Google document was maintained throughout the journey.

There is no news yet as to the next quest in the ‘Twitch Plays’ series; however, the channel states that the next journey should start around midday Sunday GMT.


Seasonic S12G 650W Power Supply Review

It has been 16 months since our last power supply review, but the long wait is finally over. We have a new PSU and cases editor, and this is the first of many new PSU reviews to come. The first PSU to hit our new testing lab is the S12G 650W from Seasonic. Seasonic is a company that hardly requires any introduction; they are one of the oldest and most reputable computer PSU designers, manufacturers and retailers. Read on to see how their S12G performs in our updated testing suite.


How We Test PSUs - 2014

After a lengthy hiatus, we're back with a new PSU and case reviewer. As we kick off our revised power supply testing and reviews, we wanted to cover the fundamentals of how we test and what to expect. Some of this is still a work in progress, as we have not gathered all of the equipment we would like to have, and as we move forward we will periodically provide updates to our PSU testing procedures. And with that out of the way, let's discuss how we're going to go about testing power supplies.

Effective testing of a power supply requires far more than just connecting it to a PC and using a $10 multimeter to check the voltage rails. At the very least, it requires specialized (and very expensive) equipment. At this point, most people that actually know a few things about PSUs would say, "Yes, OK, you need an adjustable load and an oscilloscope." While it's true you need those items, you can't simply grab any old adjustable load and oscilloscope. What you really need is very precise, programmable electronic loads with transient testing built-in and an oscilloscope that should comply with exact specifications, among other meters and equipment. Then of course you need to know what you are doing, as it's not simply a matter of connecting a PSU to the equipment and pressing a few buttons; there are exact loading and testing procedures, described in technical papers and guides, that need to be followed.

Programmable DC loads are an absolute necessity if you want to test a power supply. To that end, we acquired two high precision Maynuo M9714 1200 Watt and two Maynuo M9711 150 Watt electronic loads, which will allow us to draw up to 2400 Watts from 12 Volt lines and up to 150 Watts from each of the 3.3 Volt and 5 Volt lines. As these are quick-response programmable models, they will also allow us to perform transient tests in the future.

When testing a power supply, even the best of multimeters is entirely useless. An oscilloscope is an absolute necessity and not just any oscilloscope. Intel's ATX design guide denotes that the oscilloscope should have a bandwidth of 20MHz; however, things are not nearly as simple as that. Even if you do want to purchase a proper oscilloscope, buying a 20MHz oscilloscope is a mistake. Digital oscilloscopes need to be capable of acquiring samples at least ten times faster than the frequency they are required to resolve. So, you need a 20MHz oscilloscope with a sampling rate of at least 200 MSa/s, and low range or USB connected devices cannot get anywhere close to that number.
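The ten-times rule of thumb above is easy to express as a quick check. This is only a sketch: the function names and the 100 MSa/s example device are made up for illustration, and the 10x factor is the one quoted in the text rather than a hard standard.

```python
def min_sample_rate(bandwidth_hz, oversampling=10):
    """Minimum real-time sampling rate (Sa/s) for a given bandwidth (Hz)."""
    return bandwidth_hz * oversampling

def scope_is_adequate(sample_rate_sa_s, bandwidth_hz=20e6):
    """True if a scope samples fast enough for the required bandwidth."""
    return sample_rate_sa_s >= min_sample_rate(bandwidth_hz)

required = min_sample_rate(20e6)
print(f"20 MHz bandwidth needs at least {required / 1e6:.0f} MSa/s")

# A hypothetical 100 MSa/s USB scope falls short of that requirement.
print(scope_is_adequate(100e6))
```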

There are of course many other minor details but we will not bore you with those. It should suffice to say that for the time being we are using a Rigol DS5042M oscilloscope, which has a bandwidth of 40MHz and a real time sampling rate of 500 MSa/s. Although that sounds impressive, actually even this device is not good enough if you want to perform transient tests properly and it cannot resolve noise out of the ripple of a signal; these are tests we plan to add in the future.

Compared to the above items, testing the efficiency of a PSU is relatively simple, once you know exactly how much power you are drawing from it. Our electronic loads tell us exactly how much power is being drawn at a given time; therefore, we only need a good AC power analyzer to tell us how much power the unit is drawing from the AC outlet. Note the "good" part, as you need a power analyzer capable of displaying true RMS values, as PSUs can generate a great deal of harmonics.
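In other words, efficiency is just the DC power delivered to the loads divided by the AC power drawn from the outlet. A minimal sketch follows; the wattage figures are invented for illustration and are not measurements from any unit.

```python
def efficiency(dc_output_watts, ac_input_watts):
    """Conversion efficiency as a fraction (DC power out / AC power in)."""
    if ac_input_watts <= 0:
        raise ValueError("AC input power must be positive")
    return dc_output_watts / ac_input_watts

# Hypothetical readings: electronic loads report 325.0 W drawn,
# the AC power analyzer reports 361.1 W (true RMS) at the outlet.
dc_out, ac_in = 325.0, 361.1
eff = efficiency(dc_out, ac_in)
print(f"Efficiency: {eff:.1%}, losses as heat: {ac_in - dc_out:.1f} W")
```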

Our Extech 380803 power analyzer does a very good job at reporting the level of power that our PSU requires at any given time. We should note that all testing is being performed with a 230V/50Hz input, delivered by a 3000VA VARIAC for the perfect adjustment of the input voltage. Unfortunately, we cannot perform tests at 110V/60Hz at the moment, as that requires a high output, programmable AC power source. As a rough estimate, conversion efficiency drops by 1% to 1.5% when the input voltage is lowered to 110V/60Hz.

Thermal and noise testing is another matter. Thermal testing is relatively simple; we only had to acquire two high precision UNI-T UT-325 digital thermometers. With four temperature probes, we can monitor the ambient temperature, the exhaust temperature of the PSU, as well as the temperature of its primary and secondary heatsinks. Noise testing however cannot be performed while the unit is being tested, as the very equipment that is used to test it generates a lot of noise. Everyone says that it is impossible to keep the unit loaded with the equipment far apart in order to perform noise testing and yes, that truly is impossible. So, it cannot be done, right? Wrong.

One of the basics of the scientific method is that you isolate the problem from a system and resolve it on its own. In other words, instead of trying to do the impossible and measure the noise of a power supply while we are testing it, there is nothing keeping us from using a non-intrusive laser tachometer to record the speed of the fan instead. Then, we can simply test the unit on its own, with the fan hotwired to a small fanless, adjustable DC PSU that we fabricated, taking noise readings with our Extech HD600 for the RPM range of the fan and cross-referencing the two tables. Not quite that difficult, was it? There is a catch however; as the unit will not be powered at the time of sound level testing, the meter cannot record any coil whine noise. Coil whine is clearly audible during testing though and we will make sure to report it if (when) we encounter a PSU whose coils could have used a little bit more lacquer. The background noise of our testing environment is about 30.4 dB(A), a figure comparable to a quiet room at night. Equipment noise usually becomes audible when our instrumentation reads above 33.5 dB(A).
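The cross-referencing step can be sketched as two lookups chained together: load to fan speed (from the tachometer), then fan speed to sound level (from the standalone fan test). All numbers below are hypothetical placeholders, and interpolating linearly between measured points is a simplification chosen for this sketch, not necessarily how the final charts are produced.

```python
from bisect import bisect_left

def interpolate(table, x):
    """Linearly interpolate y at x from (x, y) pairs sorted by x.

    Values outside the measured range are clamped to the nearest endpoint.
    """
    xs = [point[0] for point in table]
    if x <= xs[0]:
        return table[0][1]
    if x >= xs[-1]:
        return table[-1][1]
    i = bisect_left(xs, x)
    (x0, y0), (x1, y1) = table[i - 1], table[i]
    return y0 + (y1 - y0) * (x - x0) / (x1 - x0)

# Hypothetical table 1: load (% of rated capacity) -> fan speed (RPM),
# recorded with the laser tachometer while the PSU is under load.
load_to_rpm = [(5, 600), (20, 600), (50, 900), (100, 1800)]

# Hypothetical table 2: fan speed (RPM) -> sound level (dB(A)),
# recorded with the PSU unpowered and the fan driven externally.
rpm_to_dba = [(600, 31.0), (900, 34.0), (1800, 45.0)]

def noise_at_load(load_percent):
    """Estimate the sound level at a load by chaining the two tables."""
    rpm = interpolate(load_to_rpm, load_percent)
    return interpolate(rpm_to_dba, rpm)

for load in (20, 50, 75, 100):
    print(f"{load:3d}% load -> {noise_at_load(load):.1f} dB(A)")
```

As the text notes, this approach cannot capture coil whine, since the PSU itself is not running during the sound level measurements.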

In order to facilitate testing power supplies more effectively, we created a test fixture for the connection between the PSU and the testing equipment, as well as a proprietary hot box. The hot box is not much more than a closed case with an air-heating device, which is controlled via a DAQ and our software. It is imperative to heat the air inside the box, not the box itself, in order to create good testing conditions. Admittedly, this self-made contraption is not perfect as it is small and has a very slow reaction rate, but it does work well for the means of simulating the environment inside a computer case. Therefore, testing will be performed at room temperature (maintained at 25 °C) and inside the hotbox (at 45-50 °C). Remember that efficiency certifications are performed at room temperature (25 °C) and a power supply can easily fail to meet its efficiency certification standards inside the hotbox!

As for the testing procedure, there are specific, detailed guidelines on how to perform it. All testing is done in accordance with Intel's Power Supply Design Guide for Desktop Form Factors and with the Generalized Test Protocol for Calculating the Energy Efficiency of Internal AC-DC and DC-DC Power Supplies. These two documents describe in detail how the equipment should be interconnected, how loading should be performed (yes, you do not simply load the power lines randomly), and the basic methodology for the acquisition of each data set. However, not all of our testing is covered and/or endorsed by these guidelines.

There are no guidelines on how transient tests should be performed and the momentary power-up cross load testing that Intel recommends is far too lenient. Intel recommends that the 12V line should be loaded to < 0.1A and the 3.3V/5V lines up to just 5A. We also perform two cross load tests of our own design. In test CL1, we load the 12V line up to 80% of its maximum capacity and the 3.3V/5V lines with 2A each. In test CL2, we load the 12V line with 2A and the 3.3V/5V lines up to 80% of their maximum combined capacity.
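For reference, the two cross load tests boil down to the following load settings. This is a rough sketch: the function name and the example ratings (54 A on 12V, 100 W combined on 3.3V/5V) are hypothetical, and how the combined 3.3V/5V load in CL2 is split between the two rails is deliberately left out because it is not specified above.

```python
def cross_load_tests(max_12v_amps, max_minor_combined_watts):
    """Return the CL1 and CL2 load targets for a PSU's rated limits."""
    return {
        # CL1: heavy 12V load, light load on the minor rails.
        "CL1": {
            "12V_amps": 0.8 * max_12v_amps,
            "3.3V_amps": 2.0,
            "5V_amps": 2.0,
        },
        # CL2: light 12V load, heavy combined load on the minor rails.
        "CL2": {
            "12V_amps": 2.0,
            "minor_rails_combined_watts": 0.8 * max_minor_combined_watts,
        },
    }

# Hypothetical label ratings, not taken from any specific unit.
tests = cross_load_tests(max_12v_amps=54.0, max_minor_combined_watts=100.0)
for name, loads in tests.items():
    print(name, loads)
```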

Furthermore, it has been suggested that efficiency testing needs to be performed at specific load intervals (20% - 50% - 100%), which is considered to be the normal operating range of a PSU. However, modern systems can easily have their energy demand drop dramatically while idling, which is why we will be testing power supplies starting at 5% of their rated capacity, not 20%. Note that the conversion efficiency of all switching PSUs literally takes a dive when the load is very low, so large drops of >10% are expected and natural.

Any questions or comments on our PSU testing procedures are welcome, and as noted earlier we plan to add and/or improve some of the testing over the coming months with some additional hardware. We will provide an updated article when/if such changes are required.


Intel SSD 730 (480GB) Review: Bringing Enterprise to the Consumers

The days of Intel being the dominant player in the client SSD business are long gone. A few years ago Intel shifted its focus from client SSDs to the more profitable and hence more alluring enterprise market. As a result of the move to SandForce silicon, Intel's client SSD lineup became more generic and lost the Intel vibe of the X25-M series. With the SSD 730 Intel finally provides an in-house designed consumer drive after a hiatus of a few years. Based on the S3500/S3700 platform, the SSD 730 adopts many enterprise features and brings them to the hands of consumers. Does Intel retake the crown with the SSD 730? Read on and find out!


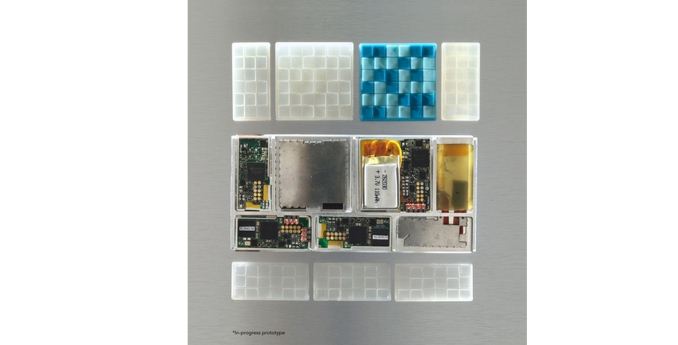

Modular Smartphone Project Ara from Google to Start Development Conferences

Joshua talked about Project Ara (from Motorola at the time) back in October as a campaign that focused on attracting OEM interest into a modular smartphone design. The results of that campaign take the next step forward as Google announces the first set of developer conferences for a modular device.

Headed under the Advanced Technology and Projects (ATAP) division, the platform is meant to be a single hub onto which the user can place their own hardware. This means CPUs, cameras, sensors, screens, baseband, modems, connectivity, storage – the whole gamut. The issue with such a device compounds the effects of going from a managed ecosystem (Apple and several hardware combinations) to a free ecosystem (Android and every hardware combination). Project Ara takes this complexity one stage further, and there has to be a fundamental software base to solve this. Hence ATAP is going to be doing three developers’ conferences in 2014, starting on April 15-16 at the Computer History Museum in Mountain View, California.

Aside from those attending in person, the event will be live webcast with question and answer sessions built into the programme. Due to the early stage of Project Ara, the initial conference is all about the modular system itself, building a device and getting it to work. Coinciding with the first conference, an alpha version of the Module Developers’ Kit should be available.

The other two conferences for 2014 are yet to be announced. Further info on the conference is found at the website projectara.com, to be updated over the next few weeks with more details.

To quote the website:

We plan a series of three Ara Developers’ Conferences throughout 2014. The first of these, scheduled for April 15-16, will focus on the alpha release of the Ara Module Developers’ Kit (MDK). The MDK is a free and open platform specification and reference implementation that contains everything you need to develop an Ara module. We expect that the MDK will be released online in early April.

The Developers’ Conference will consist of a detailed walk-through of existing and planned features of the Ara platform, a briefing and community feedback sessions on the alpha MDK, and an announcement of a series of prize challenges for module developers. The complete Developers’ Conference agenda will be out in the next few weeks.

This first version of the MDK relies on a prototype implementation of the Ara on-device network using the MIPI UniPro protocol implemented on FPGA and running over an LVDS physical layer. Subsequent versions will soon be built around a much more efficient and higher performance ASIC implementation of UniPro, running over a capacitive M-PHY physical layer.

The Developers’ Conference, as the name suggests, is a forum targeted at developers so priority for on-site attendance will reflect this. For others--non-developers and Ara enthusiasts--we welcome you to join us via the live webstream. That said, we invite developers of all shapes and sizes: from major OEMs to innovative component suppliers to startups and new entrants into the mobile space.


Low Level Graphics API Developments @ GDC 2014?

With the annual Game Developers Conference taking place next month in San Francisco, the session catalogs for the conference are finally being published and it looks like we may be in for some interesting news on the API front. Word comes via the Tech Report and regular contributor SH SOTN that 3 different low level API sessions have popped up in the session catalog thus far. These sessions are covering both Direct3D and OpenGL, and feature the 4 major contributors for PC graphics APIs: Microsoft, AMD, NVIDIA, and Intel.

The session descriptions only offer a limited amount of information on their respective contents, so we don’t know whether anything here is a hard product announcement or whether it’s being presented for software research & development purposes, but at a minimum it would give us an idea into what both Microsoft and the OpenGL hardware members are looking into as far as API efficiency is concerned. The subject has become an item of significant interest over the past couple of years, first with AMD’s general clamoring for low level APIs, and more recently with the launch of their Mantle API. And with the console space now generally aligned with the PC space (x86 CPUs + D3D11 GPUs), now is apparently as good a time as any to put together a low level API that can reach into the PC space.

With GDC taking place next month we’ll know soon enough just what Microsoft and its hardware partners are planning. In the meantime let’s take a quick look at the 3 sessions.

DirectX: Evolving Microsoft's Graphics Platform

Presented by: Microsoft; Anuj Gosalia, Development Manager, Windows Graphics

For nearly 20 years, DirectX has been the platform used by game developers to create the fastest, most visually impressive games on the planet.

However, you asked us to do more. You asked us to bring you even closer to the metal and to do so on an unparalleled assortment of hardware. You also asked us for better tools so that you can squeeze every last drop of performance out of your PC, tablet, phone and console.

Come learn our plans to deliver.

Direct3D Futures

Presented by: Microsoft; Max McMullen, Development Lead, Windows Graphics

Come learn how future changes to Direct3D will enable next generation games to run faster than ever before!

In this session we will discuss future improvements in Direct3D that will allow developers an unprecedented level of hardware control and reduced CPU rendering overhead across a broad ecosystem of hardware.

If you use cutting-edge 3D graphics in your games, middleware, or engines and want to efficiently build rich and immersive visuals, you don't want to miss this talk.

Approaching Zero Driver Overhead in OpenGL

Presented By: NVIDIA; Cass Everitt, OpenGL Engineer, NVIDIA; Tim Foley, Advanced Rendering Technology Team Lead, Intel; John McDonald, Senior Software Engineer, NVIDIA; Graham Sellers, Senior Manager and Software Architect, AMD

Driver overhead has been a frustrating reality for game developers for the entire life of the PC game industry. On desktop systems, driver overhead can decrease frame rate, while on mobile devices driver overhead is more insidious--robbing both battery life and frame rate. In this unprecedented sponsored session, Graham Sellers (AMD), Tim Foley (Intel), Cass Everitt (NVIDIA) and John McDonald (NVIDIA) will present high-level concepts available in today's OpenGL implementations that radically reduce driver overhead--by up to 10x or more. The techniques presented will apply to all major vendors and are suitable for use across multiple platforms. Additionally, they will demonstrate practical demos of the techniques in action in an extensible, open source comparison framework.

Read More ...

Elop to Lead Devices and Studios at Microsoft: This Means Xbox

News spreading online from a leaked memo points to former Nokia CEO Stephen Elop becoming the new lead of Microsoft’s Devices and Studios division. This appointment puts Elop in charge of all games and hardware for the Xbox platform, Microsoft Surface and all game development by Microsoft-owned studios. This comes on the back of Satya Nadella being named as the post-Ballmer Microsoft CEO, a role for which Elop was reported to be in the running.

Elop replaces Julie Larson-Green who is taking on a new role as Chief Experience Officer for the Applications and Services group, which includes user experiences on Office, Skype and Bing.

There is no current timeline for the handover, although the wording would seem to suggest it is almost immediate, with a short period while Elop moves into the role.

Read More ...

A3Cube develop Extreme Parallel Storage Fabric, 7x Infiniband

News from EETimes points towards a startup that claims to offer an extreme performance advantage over Infiniband. A3Cube Inc. has developed a variation of PCI Express on a network interface card to offer lower latency. The company is promoting its Ronniee Express technology via a PCIe 2.0 driven FPGA to offer sub-microsecond latency across a 128-server cluster.

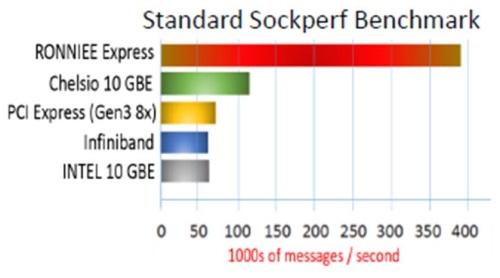

In the Sockperf benchmark, numbers from A3Cube put performance at around 7x that of Infiniband and PCIe 3.0 x8, and thus claim that the approach beats the top alternatives. The PCIe support of the device at the physical layer enables quality-of-service features, and A3Cube claim the fabric enables a cluster of 10000 nodes to be represented in a single image without congestion.

The aim for A3Cube will be primarily in HFT, genomics, oil/gas exploration and real-time data analytics. Prototypes for merchants are currently being worked on, and it is expected that two versions of network cards and a 1U switch based on the technology will be available before July.

The new IP from A3Cube is kept hidden away, but the logic points towards device enumeration and the extension of the PCIe root complex across a cluster of systems. This inference comes from the quote regarding PCIe 3.0 incompatibility, which is attributed to the different device enumeration in that specification. The plan is to build a solid platform on PCIe 4.0, which puts the technology several years away in terms of non-specialized deployment.

As with many startups, the next step for A3Cube is to secure venture funding. The approach of Ronniee Express is different to that of PLX, who are developing a direct PCIe interconnect for computer racks.

A3Cube’s webpage on the technology states the fabric uses a combination of hardware and software, while remaining application transparent. The product combines multiple 20 or 40 Gbit/s channels, with the aim at petabyte-scale Big Data and HPC storage systems.

Information from Willem Ter Harmsel describes the Ronniee NIC system as a global shared memory container, with an in-memory network between nodes. CPU/Memory/IO are directly connected, with 800-900 nanosecond latencies, and the ‘memory windows’ facilitate low latency traffic.

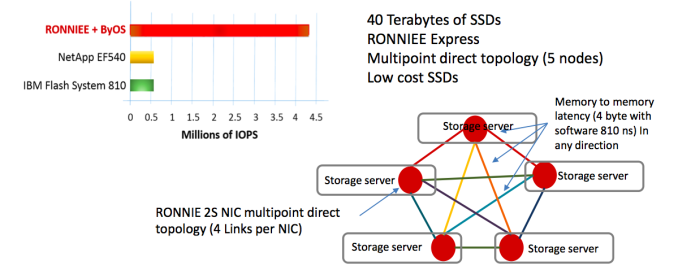

Using A3Cube’s storage OS, byOS, together with 40 terabytes of SSDs and the Ronniee Express fabric, five storage nodes were connected together via 4 links per NIC, allowing for 810ns latency in any direction. A3Cube claim 4 million IOPS with this setup.

Further, an interview between Willem and Antonella Rubicco states that “Ronniee is designed to build massively parallel storage and analytics machines; not to be used as an ‘interconnection’ as Infiniband or Ethernet. It is designed to accelerate applications and create parallel storage and analytics architecture.”

Source: EETimes, A3Cube, willemterharmsel.nl.

Read More ...

SanDisk launches 128GB microSD at MWC

Today, SanDisk is the first to launch the long-awaited (by some) 128GB microSD card, and it seems to be a class 10, UHS-1 card. While there are no benchmarks that I have seen, it's likely that this won't be as fast as the Extreme and Extreme Ultra/Pro microSD brands, which would mean that this is likely only for bulk media storage that only requires sequential reads. Amazon is already selling them for 120USD, for those that don't want to wait.

Read More ...

NVIDIA's Tegra Note 7 LTE and Tegra 4i Devices: Hands On

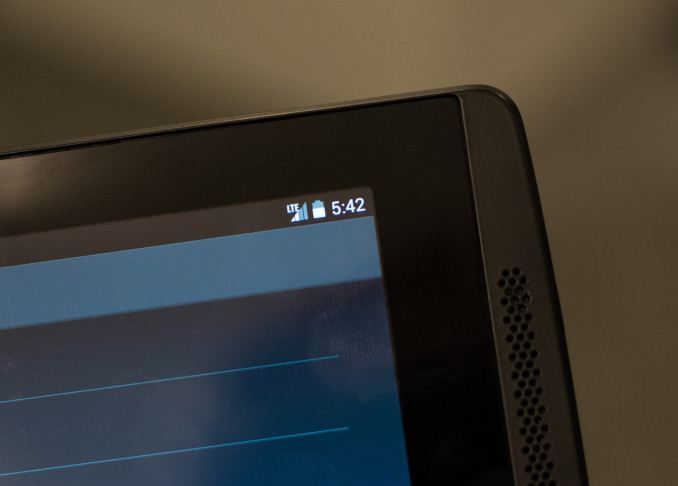

Yesterday I spent some time with NVIDIA where I played with the newly announced Tegra Note 7 LTE. Internally the $299 Note 7 LTE is identical to the WiFi-only version, but with the inclusion of an NVIDIA i500 mini PCIe card.

As many of you noticed in our announcement post of the Tegra Note 7 LTE, there is an increase in weight for the LTE version. It turns out the added weight is because the Note 7 LTE actually gets a slightly redesigned chassis that's a bit more structurally sound. The main visual change is on the back cover which now looks more 2013 Nexus 7-like.

The Tegra Note 7 LTE was able to connect and transact data on a live LTE network. NVIDIA tells me that devices will be available sometime in Q2 and will ship fully unlocked. NVIDIA did add that the final list of bands supported might change.

NVIDIA also had the Wiko WAX, which is one of the first (if not the first) retail Tegra 4i devices. The WAX features a 4.7" 720p display, an 8MP rear facing camera and obviously NVIDIA's Tegra 4i. NVIDIA expects availability in Europe beginning in April.

Read More ...

Western Digital My Cloud EX4 and LenovoEMC ix4-300d Home NAS Units Review

The consumer Network Attached Storage (NAS) market has seen tremendous growth over the past few years. As the amount of digital media generated by the average household increases, the standard 2-bay NAS is no longer sufficient. Today, we are going to take a look at two different 4-bay solutions, the Western Digital My Cloud EX4 and the LenovoEMC ix4-300d. Both of them use ARM-based Marvell SoC platforms and target the home consumer / SOHO markets. Read on to find out how these products stack up against each other.

Read More ...

Samsung's Exynos 5422 & The Ideal big.LITTLE: Exynos 5 Hexa (5260)

Samsung announced two new mobile SoCs at MWC today. The first is an update to the Exynos 5 Octa with the new Exynos 5422. The 5422 is a mild update to the 5420, which was found in some international variants of the Galaxy Note 3. The new SoC is still built on a 28nm process at Samsung, but enjoys much higher frequencies on both the Cortex A7 and A15 clusters. The two clusters can run their cores at up to 1.5GHz and 2.1GHz, respectively.

The 5422 supports HMP (Heterogeneous Multi-Processing), and Samsung LSI tells us that unlike the 5420 we may actually see this one used with HMP enabled. HMP refers to the ability for the OS to use and schedule threads on all 8 cores at the same time, putting those threads with low performance requirements on the little cores and high performance threads on the big cores.
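To make the HMP idea more concrete, here is a toy Python sketch of placing threads across both clusters at once. It is only an illustration: the load threshold, the `Thread` type and the example thread names are hypothetical, and a real OS scheduler uses far more sophisticated load tracking than this.

```python
from dataclasses import dataclass

# Hypothetical cut-off: threads needing at least this fraction of a core
# are treated as "high performance" and prefer a big (Cortex A15) core.
BIG_CORE_THRESHOLD = 0.6


@dataclass
class Thread:
    name: str
    load: float  # estimated fraction of one core's capacity (0.0 to 1.0)


def preferred_cluster(thread, threshold=BIG_CORE_THRESHOLD):
    """Return 'big' for demanding threads and 'little' for light ones."""
    return "big" if thread.load >= threshold else "little"


def schedule(threads, big_cores=4, little_cores=4):
    """Place threads on both clusters simultaneously, heaviest first.

    If the preferred cluster is full, the thread spills to the other one;
    threads beyond the total core count are left waiting.
    """
    capacity = {"big": big_cores, "little": little_cores}
    placement = {"big": [], "little": [], "waiting": []}
    for t in sorted(threads, key=lambda t: t.load, reverse=True):
        first = preferred_cluster(t)
        second = "little" if first == "big" else "big"
        if len(placement[first]) < capacity[first]:
            placement[first].append(t.name)
        elif len(placement[second]) < capacity[second]:
            placement[second].append(t.name)
        else:
            placement["waiting"].append(t.name)
    return placement


if __name__ == "__main__":
    demo = [Thread("render", 0.9), Thread("audio", 0.2),
            Thread("ui", 0.7), Thread("sync", 0.1)]
    print(schedule(demo))
```

With the demo threads, the two heavy threads land on the big cluster and the two light ones on the little cluster, which is the behaviour the paragraph above describes.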

The GPU is still the same ARM Mali-T628 MP6 from the 5420, running at the same frequency. Samsung does expect the 5422 to ship with updated software (drivers perhaps?) that will improve GPU performance over the 5420.

Exynos 5 Comparison

| SoC | 5250 | 5260 | 5410 | 5420 | 5422 |
|---|---|---|---|---|---|
| Max Number of Active Cores | 2 | 6 | 4 | 4 (?) | 8 |
| CPU Configuration | 2 x Cortex A15 | 2 x Cortex A15 + 4 x Cortex A7 | 4 x Cortex A15 + 4 x Cortex A7 | 4 x Cortex A15 + 4 x Cortex A7 | 4 x Cortex A15 + 4 x Cortex A7 |
| A15 Max Clock | 1.7GHz | 1.7GHz | 1.6GHz | 1.8GHz | 2.1GHz |
| A7 Max Clock | - | 1.3GHz | 1.2GHz | 1.3GHz | 1.5GHz |
| GPU | ARM Mali-T604 MP4 | ARM Mali-T624 (?) | Imagination PowerVR SGX544MP3 | ARM Mali-T628 MP6 | ARM Mali-T628 MP6 |
| Memory Interface | 2 x 32-bit LPDDR3-1600 | 2 x 32-bit LPDDR3-1600 (?) | 2 x 32-bit LPDDR3-1600 | 2 x 32-bit LPDDR3-1866 | 2 x 32-bit LPDDR3-1866 |
| Process | 32nm HK+MG | 28nm HK+MG (?) | 28nm HK+MG | 28nm HK+MG | 28nm HK+MG |

The more exciting news however is the new Exynos 5 Hexa, a six-core big.LITTLE HMP SoC. With a design that would make Peter Greenhalgh proud, the Exynos 5260 features two ARM Cortex A15 cores running at up to 1.7GHz and four Cortex A7 cores running at up to 1.3GHz. The result is a six core design that is likely the best balance of performance and low power consumption. HMP is fully supported so a device with the proper scheduler and OS support would be able to use all 6 cores at the same time.

The 5260 feels like the ideal big.LITTLE implementation. I'm not expecting to find the 5260 in many devices, but I absolutely want to test a platform with one in it. If there was ever a real way to evaluate the impact of big.LITTLE, it's Samsung's Exynos 5260.

Samsung didn't announce cache sizes, process node or GPU IP for the 5260. Earlier leaks hinted at an ARM Mali T624 GPU. Samsung's release quotes up to 12.8GB/s of memory bandwidth, which implies a 64-bit wide LPDDR3-1600 interface.
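The step from 12.8GB/s to a 64-bit LPDDR3-1600 interface is simple arithmetic: peak bandwidth is the bus width in bytes multiplied by the transfer rate. A minimal Python sketch of that calculation follows; the function name is my own, and it assumes decimal gigabytes and the theoretical peak rate rather than sustained throughput.

```python
def peak_bandwidth_gbs(bus_width_bits, mega_transfers_per_second):
    """Theoretical peak memory bandwidth in GB/s (decimal gigabytes).

    bus_width_bits: total interface width, e.g. 2 x 32-bit channels = 64
    mega_transfers_per_second: data rate in MT/s, e.g. 1600 for LPDDR3-1600
    """
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * mega_transfers_per_second * 1e6 / 1e9


if __name__ == "__main__":
    # 2 x 32-bit LPDDR3-1600, as implied for the Exynos 5260
    print(peak_bandwidth_gbs(64, 1600))
    # 2 x 32-bit LPDDR3-1866, as listed for the 5420 and 5422
    print(peak_bandwidth_gbs(64, 1866))
```

The first call reproduces the 12.8GB/s figure from Samsung's release; a 32-bit interface at the same data rate would only reach half of that.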

Read More ...

Motorola Press Event at MWC: New Watch and Moto X Coming

Despite Motorola’s current state of flux regarding their acquisition by Lenovo, there are plenty of products in the pipeline. At the press event this evening, Rick Osterloh made a couple of interesting statements. The first is the position of wearables, and that Motorola is in the process of developing a smartwatch. The focus for the watch will be making it stylish – their current issue with current devices is the lack of style and finding a device that people actually want to wear. Motorola will try and fix this.

Also, for the briefest of moments, the panel was asked regarding the next version of the Moto X. The answer was simply ‘sometime in the summer’.

Read More ...

MWC 2014: Motorola Press Event Live Blog

Ian and I are currently sitting here waiting for the start of the event, stay tuned!

Read More ...

AMD Catalyst 14.2 Beta Drivers Now Available

Just a bit over 3 weeks since the release of AMD’s Catalyst 14.1 beta drivers, AMD is back again with their first update to the Catalyst 14 series with the 14.2 betas. A direct continuation of the 14.1 betas (driver branch 13.35), these drivers contain a number of bug fixes for 14.1. Furthermore these drivers will also be AMD’s launch drivers for Thief, which is being released today.

As far as Thief is concerned, Thief is one of AMD’s showcase titles for their GCN marquee features, designed to showcase both Mantle and TrueAudio. Unfortunately, in something that’s becoming a trend with AMD joint projects, the actual GCN features aren’t in the launch version of Thief. Instead they will be added in a patch in March (hopefully), which this driver lays the groundwork for by enabling TrueAudio and providing the Mantle functionality Thief will need. In the meantime these are still the recommended drivers for Thief’s Direct3D renderer, serving as the validated launch drivers for that game along with the first driver set to provide a complete Crossfire profile.

Meanwhile for bug fixes, the focus is primarily on Mantle bugs, though a few Direct3D/OpenGL bugs are also covered.

Hangs and stuttering resolved for the Mantle codepath in Battlefield 4; users are now encouraged to try mGPU

Multi-GPU frame pacing in Battlefield 3 and Battlefield 4 is now enabled for non-XDMA configurations running resolutions >1600p.

Mantle: Multi-GPU configurations (up to 4 GPUs) running Battlefield 4 are now supported

We fixed Minecraft! Sorry about that, builders.

Intermittent hangs and crashes should be resolved in 3D applications

Thief: Crossfire Profile update and performance improvements for single GPU configurations

Dual graphics DirectX 9 application issues have been resolved

Resolves corruption issues seen in X-plane

Video Compression Engine (VCE) enabled for compatible GPU and APU products (e.g. GCN-based SKUs). Hardware-accelerated encode of H.264 now possible for 1080p60 content

Video decode (UVD) performance and efficiency improvements for the AMD R9 290/290X, R9 260X, “Kaveri” and “Kabini”

X-Video hardware video acceleration via the GLAMOR library now supported

2D acceleration via the GLAMOR library now enabled by default

Substantial improvements to overall video transcode times for hardware-accelerated transcoding apps

Tiling support now enabled on all GCN-based products

Substantial OpenGL feature level upgrade to v4.3

Major contributions to the Linux kernel 3.14 to improve dynamic power management, DisplayPort robustness and power efficiency on all GCN-based hardware

New programming guides and register specifications released for HD 5000, 6000, 7000 and R9/R7 Series GPUs. This will enable volunteer and professional developers to contribute to the X.ORG OSS Radeon driver, and can facilitate porting to other non-Linux platforms.

As always, you can grab the Catalyst 14.2 beta driver from AMD’s driver download page. The driver weighs in at 287MB.

Read More ...

The AnandTech Podcast: Episode 28 - MWC 2014 Mobile Show

By popular demand, here is our Mobile World Congress 2014 Mobile Show in podcast form.

Recorded live from Mobile World Congress 2014, on this episode Anand Shimpi & Brian Klug discuss all things mobile. Anand & Brian kick things off with a discussion recapping our recent expose on Imagination's PowerVR Rogue architecture, before segueing into a discussion of SoC core counts and core wars on both the CPU and GPU side. Anand and Brian then get into the ARM Cortex-A53 and its adoption in high core count designs, Qualcomm's Snapdragon 600 series, and then what Intel is up to at MWC, including their Merrifield SoC announcements. Anand and Brian then wrap things up with a discussion of Samsung's just-announced flagship smartphone, the Galaxy S5, and their brief impressions on various aspects of it, including the sensors, UI, and the use of both Qualcomm and in-house Exynos SoCs in various S5 models.

Featuring Anand Shimpi & Brian Klug

iTunes

RSS - mp3, m4a

Direct Links - mp3, m4a

Total Time: 38 minutes 51 seconds

Outline h:mm

Imagination PowerVR Rogue Architecture Discussion - 0:00

SoC Core Count Wars - 05:50

ARM Cortex-A53 & Qualcomm Snapdragon 600 Series - 06:35

Intel Merrifield & Moorefield - 11:45

Reference platforms, top to bottom? - 17:20

LTE Modems - 19:12

Samsung Galaxy S5 - 21:54

Samsung Touchwiz - 31:10

Fingerprint & Heartbeat Sensors - 34:35

S5 Exynos 5 Version - 38:03

Read More ...

The AnandTech Mobile Show - MWC 2014 Edition

Earlier this morning (Barcelona time) Brian and I sat down in front of a camera and recapped some of the major news at MWC. We talked about the new Snapdragon 610/615, IMG's Series 6XT architecture disclosure, Intel's Merrifield and Moorefield as well as our hands on experience with Samsung's Galaxy S 5.

Check it all out in the video below!

Read More ...

Western Digital Targets Surveillance Storage Market with Purple Hard Drives

Western Digital's campaign to delineate hard drive market segments and the accompanying colour-based branding has proved to be very popular. The Red drive lineup for the SMB / SOHO NAS market and the Black drives for the enthusiast segment have been greeted with good market acceptance. Today, Western Digital is expanding this initiative with the Purple branding for hard drives targeting the growing surveillance market (NVRs and NAS units dedicated to recording feeds from IP cameras).

Background

All SATA hard drives fulfill the same basic functionality of storing data on platters. Most hard drives use the same hardware circuitry (except for enterprise drives which have features such as RAFF - Rotary Accelerated Feed Forward - that require extra sensors on the drive to better handle vibrations in storage arrays). The scope for differentiation boils down to the firmware. The SATA protocol has a number of optional features intended to improve performance for specific scenarios. One example is NCQ (Native Command Queuing), which allows for reordering of commands to improve efficiency. Another example is the ATA Streaming Command Set, which targets A/V setups by providing features targeting video payloads. Drives targeting the A/V and surveillance markets can optimize the firmware around this optional specification.

History

Western Digital has been serving the DVR / STB / surveillance storage market with the WD-AV GP product line for quite some time now. Hardware-wise, these drives happen to be the same as the Green drives, but the difference happens to be in the firmware. WD's ATA Streaming Command Set implementation under the SilkStream tag supports up to 12 simultaneous HD streams.

Most NVRs are based off NAS platforms in RAIDed environments. Since the WD-AV GP drives were not specifically geared for use in NAS devices, it makes sense for WD to develop firmware optimized for video surveillance storage using the Red drives as the hardware base. The result is the WD Purple lineup.

Features and Specifications

The Purple hard drives (WDxxPURX) are dedicated to servicing the surveillance market. Similar to the WD-AV GP lineup, these are also built for 24x7 operation. The SilkStream tag for the optimized firmware in the WD-AV GP lineup is replaced by AllFrame in the WD Purple. The optimizations work with the ATA Streaming Command Set to improve playback performance and reduce errors / frame loss. While SilkStream was advertised to support up to 12 simultaneous HD streams, AllFrame is designed to support up to 32 HD cameras / channels. Obviously, taking advantage of the AllFrame technology is possible only with host controller support. It goes without saying that the appliance vendor must be in the compatibility list provided by WD in order to take advantage of the surveillance-specific features in the Purple drives.

Similar to the Red drives, these come with the IntelliPower feature (rotational speed of 5400 rpm) and TLER enabled. IntelliPower enables WD to provide best-in-class power consumption numbers with the Purple drives. There is no RAFF support on-board, though, as these are meant for table-top appliances with 1 to 8 bays. The drives are rated for workloads up to 60 TB/yr. For reference, recording 4 x 1080p30 streams for 365 days requires 200 TB of storage. SD streams come with lower storage cost. In any case, putting the WD Purples in RAID with multiple drives should easily keep workloads for each drive under 60 TB/yr. For SMB / SME surveillance setups requiring up to 64 cameras, WD suggests the use of Se drives, while mission-critical applications are advised to utilize the more powerful Re drives.
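The workload figures above can be sanity-checked with a little arithmetic. The Python sketch below works backwards from the quoted 200 TB per year to the per-stream bitrate it implies, and estimates how many drives are needed to stay under the 60 TB/yr rating. It rests on assumptions of my own that the article does not state: decimal terabytes, writes spread evenly across the drives, and no RAID parity or mirroring overhead.

```python
import math

SECONDS_PER_DAY = 86_400


def implied_bitrate_mbps(total_tb, streams, days):
    """Average per-stream bitrate (Mbit/s) implied by a total recording volume."""
    total_bits = total_tb * 1e12 * 8  # assumes decimal TB
    return total_bits / (streams * days * SECONDS_PER_DAY) / 1e6


def min_drives(total_tb_per_year, rated_tb_per_year):
    """Fewest drives that keep each drive's yearly workload within its rating.

    Assumes writes are split evenly and ignores parity/mirroring overhead.
    """
    return math.ceil(total_tb_per_year / rated_tb_per_year)


if __name__ == "__main__":
    rate = implied_bitrate_mbps(total_tb=200, streams=4, days=365)
    print(f"Implied bitrate per 1080p30 stream: {rate:.1f} Mbit/s")
    print(f"Drives needed at 60 TB/yr each: {min_drives(200, 60)}")
```

Under these assumptions the quoted 200 TB corresponds to roughly 12.7 Mbit/s per stream, and four drives would bring the per-drive workload down to 50 TB/yr.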

The 3.5" Purple drives come in 1, 2, 3 and 4 TB capacities. WD has a number of launch partners including, but not limited to, PELCO, HikVision, Synology and QNAP.

In other news, Seagate also announced the Surveillance HDD yesterday, targeting the surveillance market. Unfortunately, we weren't briefed extensively about it, but the specs seem similar to that of the Purple lineup (except for the addition of RV - rotational vibration - sensors for operating in multi-drive environments, which seems similar to RAFF technology).

Read More ...

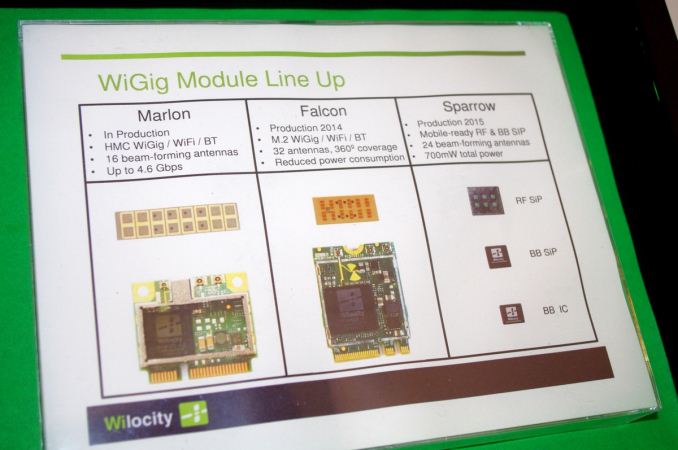

MWC 2014: Wilocity brings WiGig 802.11ad to Smartphones with Wil6300 Chipset

News just dropped through from Wilocity about its new Wil6300 chipset announced at Mobile World Congress. The Wil6300 chipset is quoted as the world’s first 802.11ad ‘WiGig’ multi-gigabit WiFi chipset for smartphones. Wilocity are stating a peak speed of 4.6 Gbps over an 802.11ad network, equivalent to 8-antenna based WiFi. So while 802.11ad can have limited range (10m with no walls) due to the 60 GHz frequency band used, there is scope in home and work user environments for faster in-network data streaming, such as video.

Wilocity has a history of WiGig development – we covered their desktop PC 802.11ad development back at Computex 2012 and sales figures for Wilocity number more than a million since February 2013. In the mobile space, this new chipset is built on a 28nm process and power use is expected in the 200-300mW range in use. While idle, power consumption is rated at sub 1mW, and the 700mW total power quoted above is for pure file transfer.

I had a chance to pop by the Wilocity booth at MWC2014. Its 802.11ad demo was working with devices from their partners, namely a Dell tablet streaming to a box with 2K content and showing file transfer between two 802.11ad enabled tablets:

For the mobile phone demonstration, a Samsung phone was retrofitted with Wilocity's 2014 Falcon platform and peak statistics of were being shown:

Wilocity’s development cycle puts mobile based 802.11ad in the 2015 space. They are working with ‘the usual players’ in the market. The module we were shown implemented 802.11n and 802.11ad in the same device, and I was quoted that with economies of scale the price of the 802.11ad module could be brought down to that of 802.11ac. Wilocity is also using beamforming to help improve quality at extended ranges, with up to 50 meters being tested and confirmed, albeit at lower speeds. The main issue is walls, which they are working on.

Read More ...

Nokia launches the X, X+, and XL at MWC

Nokia X

Nokia has finally launched the long-rumored Nokia X running Android, and it's well worth going over Nokia's first Android phones. The first phone is the Nokia X, which is an MSM8225-platform device, with a dual core 1 GHz Cortex A5 inside and Adreno 203 GPU. There's 4GB of internal storage and 512 MB of RAM. The display is a WVGA IPS panel at 4" in size, and there's a 1500 mAh, 3.7V battery inside of the phone. The camera is a 3MP, fixed focus unit with a 1/5" sensor size and F/2.8 aperture, and video recording is limited to FWVGA. Needless to say, this is a budget device, and at 89 EUR or so, it could be an interesting device.

On the software side, the Nokia X runs Android 4.1.2 with Nokia's skin on top that makes it look like Windows Phone.

Nokia X+

The Nokia X+ is effectively identical to the Nokia X; the sole difference that I have seen is that the X+ has 768MB of RAM and will go on sale for 99 EUR.

Nokia XL

The Nokia XL is slightly different from either the X or the X+. Battery capacity goes up to 2000 mAh, and the camera is upgraded to a 5MP module with a 1/4" sensor and adjustable (auto) focus. The display also grows to 5", but it remains the same WVGA resolution with IPS display technology. It will cost 109 EUR.

All of these phones also have Dual SIM capabilities, and will have Nokia's suite of applications at launch.

Nokia X Development

In the Nokia Developer Keynote today, the platform for Nokia X was explained at a high level in order to answer a number of questions that were asked since the recent announcement. Simply put, the extra Nokia layer over the base modifiable Android system should not interfere with Android mobile development. App developers will have to submit their apps to the Nokia X store, but Nokia expects 99% of all apps to work straight away. A system is set up such that any developer APK can be checked online in a few minutes – upload and get an answer if it will work. Nokia Store validation will take a little longer when the app is submitted (Lunagames stated that Highway Hei$t took a day). Also of note was the discussion regarding strategy.

Due to Nokia X positioning itself in emerging markets as a user’s first/second smartphone, and in regions where users might not have access to credit cards, the focus is on carrier billing. Nokia are providing a module for APK development to help enable this.

Read More ...

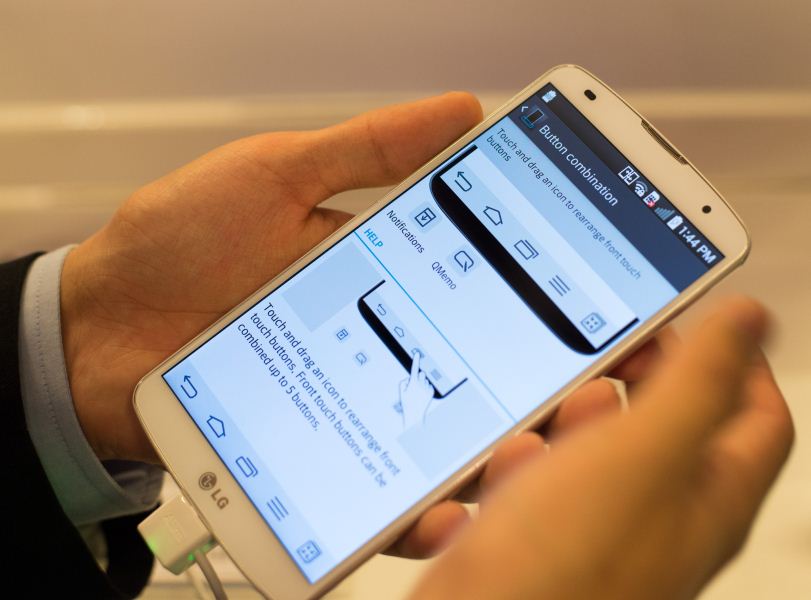

LG G Pro 2 and G2 Mini launched: Analysis and Hands-On

LG G Pro 2

At MWC, LG has also announced a few new devices, some that fit in with their previous cadence of releases, and others that are something new. To start with the expected, the successor to the LG G Pro was announced, and it's a worthy successor to the G Pro and a close cousin to the LG G2. It uses a mildly updated version of the Snapdragon 800, the Snapdragon 800 (8974AB), and shares much of the industrial design and material feel with the LG G2, which includes the back-side mounted buttons, the curved back cover, and the same 13MP camera module with OIS for better low light shots.

Of course, there's also plenty that has changed. The display, while still the same 1080p resolution and IPS technology, is now a 5.9" size, which puts it firmly in phablet territory. While it may be large, LG is also claiming a 77.2% screen to frame ratio, which means that it isn't quite as big as a One max in the hand, and more in line with the Note 3. It's also well worth saying that the G Pro 2 has a matted texture that immensely improves in hand feel. The G Pro 2 also continues the G Pro's hardware line with a removable battery and microSD slot, which is why there isn't a curved battery. That is definitely a big reason why the G Pro 2 doesn't have a significantly larger battery than the G2 despite its much larger size, as seen below.

Outside of the hardware upgrades, LG also added some interesting new features. The Knock Code feature basically takes KnockOn and adds the equivalent of a pattern unlock to it, which is a logical next step. LG is emphasizing that this feature will be on all phones launching in 2014, although it's not clear whether this feature would be backported to phones like the LG G2. The Natural Flash feature is also a software feature that uses a photo with the flash off to appropriately set the white balance of the photo taken with a flash. OIS+, another software feature for the camera means EIS (software stabilization) is added on top of the OIS to better filter out unintended motion. While not as effective as increasing the quality of the OIS module due to the need to remove the ghosting image from the sensor, adding EIS will always be more effective than OIS alone.

Also, based on initial use of a demo unit, the G Pro 2 can now have a multitasking button on the navigation bar as seen above, although it's not possible to make the menu key behave as on an AOSP device with software buttons. I really do like the new finish, which has a grippy finish that is miles ahead of the G2 in feel.

The camera is also good, but a lot of the new camera features like the refocus functionality are very much designed for static scenes only, as a shaky hand or any background movement will cause noticeable change in the photo from one level of focus to another, and becomes most obvious when the "all-in-focus" option is selected, which creates ghosting, much like HDR does on fast-moving objects. In a fast and shaky test, it also made for odd results as the first photo was less detailed (due to blur) than the next closer focal point. This is effectively a fact of life when it comes to any phone that doesn't do this functionality in hardware with a Lytro-style camera, so the Galaxy S5 will also have this issue. I also thought that such a function would have more gradations to levels of focus to allow for fine-tuning out of focus photos, but there's only around four different levels of focus between infinity and the minimum possible.

LG also continues to include the manual focus slider for situations where auto-focus fails, which should be included by more OEMs.

One of the most immediate things I also noticed was that LG continues to fill half of the notification space with things like QSlide, quick settings, and other various extra items. I question the wisdom of doing so, but as far as I recall, most of the unnecessary items can be hidden to restore some level of sanity.

LG G2 Mini

LG also announced the G2 mini, a smaller version of the LG G2. While it's similar, it's more in the same vein as the G Pro 2 when it comes to the overall material feel, which is an evolution of the LG G2, so the shape is largely the same, although there's no word on whether it retains the curved battery. The hardware spec is definitely not at the same level as the G2, but it's tough to say whether the value proposition is gone. The SoC goes from the Snapdragon 800 to Snapdragon 400, or Tegra 4i. This means a quad core Cortex A7 for all regions but Latin America, which instead gets the Tegra 4i with its quad core Cortex A9r4 at 1.7 GHz. Both versions have LTE, which means it's the MDM9x25 block in the Snapdragon version as a part of MSM8926, and Icera i500 in the Tegra 4i part. Unfortunately, the only demo units available were the MSM8x26 parts, as seen below.

Outside of the SoC, the camera goes down to 8MP, but it's unclear whether the sensor is 1/3" or 1/4". The display is definitely smaller at 4.7" with a qHD panel, which would mean the resolution is below both the GS4 Mini and the One Mini.

Read More ...

AMD Announces Embedded Radeon E8860 GPU

For the integrated market, there are several levels of capability that manufacturers need to consider. This is a market driven by sales, thus OEMs that require specific resources are usually catered for. Thus despite the fact that AMD have an aggressive APU line up on the embedded side (and have the embedded related warranties and support), there is scope for something more powerful. This is the purpose of the E8860.

The E8860 is a 37W multi-chip-module FCBGA part, with the package measuring 37.5mm x 37.5mm. The GPU has a PCIe 3.0 interface and implements 640 SPs at 625 MHz. The GPU uses GCN similar to the HD7000 series, and is paired with 2GB of GDDR5 at 1125 MHz (4.5 GHz effective). Aside from the usual DX11.1, OpenCL 1.2 and OpenGL 4.2 compatibility we normally see with this GCN, AMD offer a variety of SKUs to cater for the following display output requirements:

AMD E8860 MXM 3.0 (A) + 5 DisplayPort

AMD E8860 PCIe + 2x DVI + mDP

AMD E8860 PCIe + 5x mDP

AMD E8860 PCIe + 4x mDP LPX

Performance is officially listed as achieving P2689 in 3DMark-11 when paired with an AMD R-464L APU. So the big question here is if the E8860 can be paired with either a BGA or socketed APU in dual graphics mode. On the consumer side at least, this could result in some nice GPU performance if an APU could be paired with something like this, leaving a PCIe x8 slot for other devices. It could even act as a mid-range part in the laptop space, although 37W will need to be catered for, or a mini-ITX motherboard where the other PCIe lanes are used for SATA controllers for extra storage.

Due to the use in the embedded market, interested parties will need to contact their local AMD representative for pricing and information.

Source: AMD

Read More ...

Samsung Announces Galaxy S5: Initial Thoughts

Every year Samsung launches a new Galaxy S flagship smartphone, and as always, Samsung puts the best platform that can be bought in their devices. The Galaxy S5 is no exception, as the MSM8974AC, or Snapdragon 801, powers the Galaxy S5. The 8974AC is the 2.45 GHz bin of the MSM8974AB, a slightly massaged MSM8974 that first launched with the LG G2 and other devices in the summer of 2013. As a recap, the MSM8974AB increases the clock speed of the Hexagon DSP to 465 MHz from 320 MHz, and the LPDDR3 RAM clocks go from 800 MHz to 933 MHz. What really matters, though, is that the GPU clock goes from 450 MHz on the 8974 to 578 MHz on the 8974AC. I definitely have to point to Anand's piece on the Snapdragon 801 for anyone that wants to know more.

The other portion of the hardware story is the camera, which is probably one of the biggest areas for OEMs to distinguish themselves from the pack. Samsung seems to be playing it safe this year with a straight upgrade from 13MP to 16MP by increasing sensor size, while pixel size remains at 1.12 micron side edge length. It is notable that the camera sensor seems to be in a 16:9 aspect ratio, which would make it possible for both photos and videos to keep the same interface without odd reframing effects when going from photo preview to camcorder functionality. Optics are effectively unchanged from the Galaxy S4, as the focal length in 35mm equivalent remains at 31mm and the aperture remains at F/2.2. The one area where there could be a notable improvement is the promised ISOCELL technology, which physically separates pixels better to reduce quantum effects that can lead to lower image resolution and also increases dynamic range, although this will require testing to verify the claims made by Samsung. Samsung has also added 4K video recording for this phone and real time HDR to extend the dynamic range of the camera.

The Galaxy S5 has 2GB of RAM, also not too surprising given the 32-bit ARMv7 architecture of the 8974AC.

The display is a 1080p 5.1” panel, which makes this phone around the same size as the LG G2. Samsung has definitely improved AMOLED, but first impressions are unlikely to tell much when it comes to the quality of calibration and other characteristics of the device. In all likelihood, this will continue to use an RGBG pixel layout in order to improve aging characteristics as the various subpixels age at differing rates. I would expect max brightness to increase, although this may only show in very specific conditions such as extended sunlight exposure and low APL scenarios.

The industrial design seems to be an evolution of the Note 3, with a texture that looks similar to that of the Nexus 7 2012. However, whether the stippled texture will actually avoid the long-term issue of a slimy/oily feel is another question that will have to be answered after the hands-on. While we're still on the point of the hardware design, the Galaxy S5 is IP67 rated, which is why the microUSB 3.0 port has a cover for water and dust resistance.

The fingerprint sensor is a swipe-based one, and Brian has voiced displeasure over swipe sensors like those found in the One max. I personally think that there could be some issues with ergonomics, as Samsung places the home button very close to the edge of the phone, which would make it rather difficult to swipe correctly over the home button, especially if the device is being used with one hand.

As always, Samsung has included a removable battery and a microSD slot for those that need such capabilities, although now that Samsung is following Google guidelines regarding read/write permissions, the utility of the microSD slot could be much less than previously expected. For the battery, things are noticeably different as Samsung has gone with a 3.85V chemistry compared to the 3.8V chemistry previously used by the Galaxy S4. With a battery capacity of 10.78 WHr, this means that it has 2800 mAh. For reference, the Galaxy S4 had a 9.88 WHr battery with 2600 mAh.
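The mAh figures follow directly from dividing energy by nominal voltage. As a minimal sketch (the function name is mine, and it assumes the quoted voltages are the nominal cell voltages):

```python
def capacity_mah(energy_wh: float, nominal_voltage: float) -> float:
    """Convert battery energy (Wh) to charge capacity (mAh) at a nominal voltage."""
    return energy_wh / nominal_voltage * 1000.0


# Figures quoted in the text above.
galaxy_s5_mah = capacity_mah(10.78, 3.85)
galaxy_s4_mah = capacity_mah(9.88, 3.8)

print(f"Galaxy S5: {galaxy_s5_mah:.0f} mAh")
print(f"Galaxy S4: {galaxy_s4_mah:.0f} mAh")
```

This is also why mAh alone can be misleading when comparing cells with different chemistries: at a higher nominal voltage, the same mAh rating stores slightly more energy.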

As always, Samsung has put TouchWiz on top of their build of Android that will ship with the Galaxy S5, and it mostly looks the same. There are definitely some new features though like My Magazine, which seems to be a way of presenting multiple sources of information using a scrollable list of tiles with images on them.

There might be a trend here in the paragraphs, and while some may see it as a tic, it’s probably more representative of the consistency that Samsung is bringing to the table. “As always” means that people know what to expect, and while it may not be nearly as exciting to the tech press, average people live and die by what’s relatively familiar, not what’s new and exciting. The addition of new features and consistent improvements to performance without compromise relative to the previous generation is definitely something to be applauded, and with review units, hopefully it will be possible to see how the GS5 stacks up against the competition.

At any rate, the phone will launch with blue, black, white, and gold colors. It launches April 11 in 150 countries.

Read More ...

GIGABYTE G1.Sniper Z87 Review

The G1 series from GIGABYTE has expanded of late. From the previous socket to new chipset releases, GIGABYTE is now attempting to provide a model at almost every reasonable price point in the market. The purpose of this is to provide a gaming platform for any budget, using gaming features such as OP-AMP, USB-DAC.UP and Gain Boost. At the mid-point of the spectrum is the G1.Sniper Z87, which we are reviewing today.

Read More ...

HTC Launches Desire 816 at MWC

Today, HTC seems to be delivering on their promise for a stronger focus on the 150-300 USD market segment by launching the Desire 816, a phablet with a 5.5" 720p display and a Snapdragon 400, along with dual front facing speakers with an amplifier on each speaker. For now, it seems that HTC is quite tight-lipped on software, as they only state that the 816 runs "Android with HTC Sense", although based upon the press images it's clear that the hardware buttons have been removed, which may be the beginning of a trend for HTC's 2014 devices. While many are likely to object to the bezel on the bottom, it seems that this may be an unavoidable bezel, as the One, One max, LG G2, Nexus 5, and other phones all have a glass bezel area around as tall as the one that looks to be on the Desire 816. Based on the photos that I've seen of the HTC One's digitizer, it seems that the area must be used for digitizer connectors, but capacitive buttons will fit in that area.

Of course, specs are effectively the most important part of midrange phones when it comes to placing the kind of value that they have, so I made a table to summarize the key points:

| | HTC Desire 816 |
| --- | --- |
| Display | 5.5" 720p LCD |
| SoC | MSM8928, Snapdragon 400, 1.6 GHz quad Cortex A7 |
| RAM | 1.5 GB |
| Rear Camera | 13MP f/2.2, 1080p HD recording |
| Front Camera | 5MP f/2.8, 720p HD recording |
| WiFi | 802.11 b/g/n |
| Storage | 8GB + microSD |
| Battery | 2600 mAh, 3.8V, 9.88 WHr |
| WCDMA Bands | 850/900/2100 MHz (Band 5, 8, 1) |
| LTE Bands | EMEA: 800/900/1800/2600 MHz (Band 20, 8, 3, 7); Asia: 900/1800/2100/2600 MHz (Band 8, 3, 1, 7); 700 MHz (Band 28) for Taiwan, Australia |
| SIM Size | NanoSIM |

Read More ...

Two More Microsoft Executives Leaving the Company

Tony Bates and Tami Reller are next to go

Read More ...

India's Karbonn to Add Android Split Personality to Windows Phone

New devices will be targeted at the Indian, Middle Eastern, and Southeast Asian developing markets

Read More ...

UPDATE: Apple's iOS Comes to Your Car via CarPlay Infotainment System, iPhone 5 and up Supported

Apple brings iOS to your dashboard

Read More ...

Apple Hopes to Up Device Launches with Hiring Binge in Asia

Apple is hiring engineers and managers from its competition in Asia

Read More ...

Samsung Adds Faux Leather to Its $399 New Chromebook 2

Samsung announces new 13.3", 11.6" Chromebooks

Read More ...

Cortana Voice Assistant Coming to Windows Phone in New 8.1 Update

She'll be represented as a circle that asks, "Need Something?"

Read More ...

Microsoft Launches Site to Tell Clueless Customers if They're Running XP

Site functions as advertised, is built on Metro

Read More ...

Four U.S. States Fighting Over Tesla Motors' Gigafactory Location

All four states want the factory in their respective borders

Read More ...

Video Leak Confirms 3D Camera, Larger Screen Coming to HTC One M8

3D camera and Sense 6.0 are onboard, camera app crashes make a troubling return

Read More ...

With Windows XP Support Ending Soon, Microsoft Uses Pop-ups to Encourage Upgrades

Microsoft REALLY wants you to upgrade to Windows 8.1

Read More ...

Ellen DeGeneres' Star-studded "Selfie" Briefly Crashes Twitter During Oscar Broadcast

Ellen crashes Twitter thanks to a gaggle of friends and some crafty product placement

Read More ...

Facebook Kills Popular Messenger App for PCs

Android and iPhone are Facebook's priorities, other platforms are second class citizens

Read More ...

Mt. Gox Bitcoin CEO Can't Stifle Grin as he Bows in Apology for Bankruptcy

"We have to let it go. And then we'll buy more." says a Japanese investor after losing a tenth of his Bitcoins

Read More ...

Now That It's Done, Who Profits Off the Vodafone/Verizon Deal?

UK and U.S. taxmen collect a cut of the proceeds, as do the banks

Read More ...

Report: Microsoft Considering Offering Free “Windows 8.1 with Bing”

New version of Windows 8.1 would be free or "very low cost"

Read More ...

British Surveillance Agency GCHQ Bulk Collects, Stores Yahoo Webcam Images

About 3 percent to 11 percent of images contained nudity or other graphic images

Read More ...

Ford: We're "Not Married to Microsoft" for Sync Gen. 3

Gen. 3 OS choice will be critical given the beating Sync 2 led to in quality rankings

Read More ...

Google Plans to Launch Modular Smartphone via "Project Ara" Early Next Year

It should have a working prototype in weeks

Read More ...

2/27/2014 Daily Hardware Reviews

DailyTech's roundup of hardware reviews from around the web for Wednesday

Read More ...

Tesla Motors' Gigafactory to Help Push Out Half Million EVs/Year in 2020

The giant Gigafactory will span 500-1000 acres of land

Read More ...

"Boeing Black" Secure, Self-Destructing Android Smartphone Gets Official

Boeing offers details on its new secure Android smartphone

Read More ...

Drone Ships May Replace Manned Standard Cargo Ships in the Future

Crewless ships would be able to carry more cargo and burn less fuel

Read More ...

China's BYD Auto CEO Says Tesla's Model S a "Rich Man's Toy"

The Model S will sell for $121,300 USD in China

Read More ...

Microsoft Reportedly Keeping Nokia, Lumia Brands

Nokia logos will continue making their way onto phones despite the acquisition

Read More ...

Woman Claims She Was Assaulted, Robbed for Wearing Google Glasses

Some dispute her account, while others say that patrons of the "punk bar" were justified if they assaulted her

Read More ...

Apple Patches Serious SSL Exploit That Could Have Exposed OS X, iOS Users to NSA Snooping

SSL-breaking bug was present for the last year and a half, but is fortunately finally fixed on both platforms

Read More ...

BlackBerry CEO Says He'd Sell BBM for $19B --- But He's Not Crazy

Laughs aside, John Chen is making smart and critical moves to save his company

Read More ...

2/26/2014 Daily Hardware Reviews

DailyTech's roundup of hardware reviews from around the web for Wednesday

Read More ...