GeForce GTX Titan Two-Way SLI Scaling: PCIe 2 vs. PCIe 3

Back when I had Origin’s tri-Titan equipped Genesis in house, I received a few requests for benchmarks with PCIe 3 enabled. Because we tested the system with its out-of-the-box settings, it was configured to use only PCIe 2, since NVIDIA does not officially support PCIe 3 on SNB-E based systems. As a result there was some interest in whether PCIe 3 would improve performance at all compared to PCIe 2, due to the high amount of PCIe bus traffic generated by three GTX Titan cards working together. IVB/Haswell is of course limited to 16 PCIe 3 lanes, whereas if PCIe 3 is enabled SNB-E systems have more than twice that with a combined total of 40 lanes, giving SNB-E a large bandwidth advantage.

As it turned out, the Intel DX79SR used in the Genesis supported PCIe 3, but only on the 2 full-fledged x16 slots. The third x16 slot (electrically x8) won’t operate beyond PCIe 2 speeds even after forcing it in NVIDIA’s drivers, which is unfortunately a bit of a double whammy since it’s the slot that’s already lacking in bandwidth. That dashed our hopes of a PCIe 3 vs. PCIe 2 comparison for tri-SLI Titans, and as a result the subject never reached print.
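To put rough numbers on the slots in question, here is a minimal sketch of theoretical per-direction link bandwidth. It assumes only the published signalling rates and line encodings (5 GT/s with 8b/10b for PCIe 2, 8 GT/s with 128b/130b for PCIe 3); the function and table names are illustrative, and real-world throughput will be lower once protocol overhead is counted.

```python
# Per-lane signalling rate (GT/s) and line-code efficiency.
# PCIe 2 uses 8b/10b encoding; PCIe 3 uses 128b/130b.
PCIE_GENERATIONS = {
    2: {"gigatransfers_per_s": 5.0, "encoding_efficiency": 8 / 10},
    3: {"gigatransfers_per_s": 8.0, "encoding_efficiency": 128 / 130},
}


def link_bandwidth_gb_per_s(generation: int, lanes: int) -> float:
    """Theoretical one-direction bandwidth of a PCIe link in GB/s."""
    gen = PCIE_GENERATIONS[generation]
    gigabits = gen["gigatransfers_per_s"] * gen["encoding_efficiency"] * lanes
    return gigabits / 8  # bits -> bytes


if __name__ == "__main__":
    for label, generation, lanes in [
        ("x16 slot, PCIe 2", 2, 16),
        ("x16 slot, PCIe 3", 3, 16),
        ("x8 slot, PCIe 2", 2, 8),
    ]:
        print(f"{label}: {link_bandwidth_gb_per_s(generation, lanes):.2f} GB/s")
```

On paper the electrically x8 slot stuck at PCIe 2 ends up with roughly a quarter of the bandwidth of a full x16 PCIe 3 slot, which is the double whammy described above.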

However in the last couple of weeks I’ve received several requests for two-way SLI Titan/780 PCIe performance benchmarks, which we did happen to collect while looking into tri-SLI and simply never published. So with that in mind we’re going to finally publish that data, since there’s clearly more interest in the matter than I initially expected.

As a heads up this data is a bit stale; it was taken back in April with NVIDIA’s 314.22 drivers. So the primary goal here is to compare two-way SLI Titan performance on PCIe 2 versus PCIe 3.

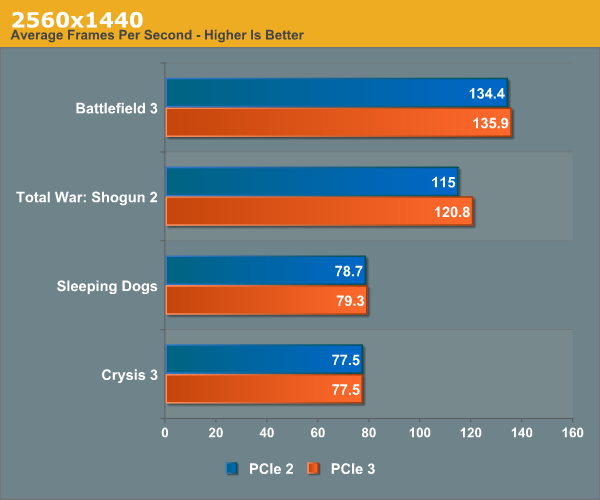

At 2560x1440 there’s very little change. The only game to see any kind of notable improvement is Total War: Shogun 2, which picks up 5%, though this is going from 115fps to 120fps. Otherwise PCIe 3 does not confer a distinct performance advantage here.
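For anyone who wants to run their own numbers, the percentage gains quoted here are simple relative differences. The helper below is a sketch with a made-up name, not something from our test scripts. Note that plugging in the rounded frame rates from the text gives a slightly smaller figure than the charted 5%, presumably because the chart works from unrounded results.

```python
def percent_gain(baseline_fps: float, new_fps: float) -> float:
    """Relative improvement of new_fps over baseline_fps, in percent."""
    if baseline_fps <= 0:
        raise ValueError("baseline_fps must be positive")
    return (new_fps - baseline_fps) / baseline_fps * 100.0


if __name__ == "__main__":
    # Rounded Shogun 2 frame rates quoted above (PCIe 2 -> PCIe 3).
    print(f"{percent_gain(115, 120):.1f}%")
```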

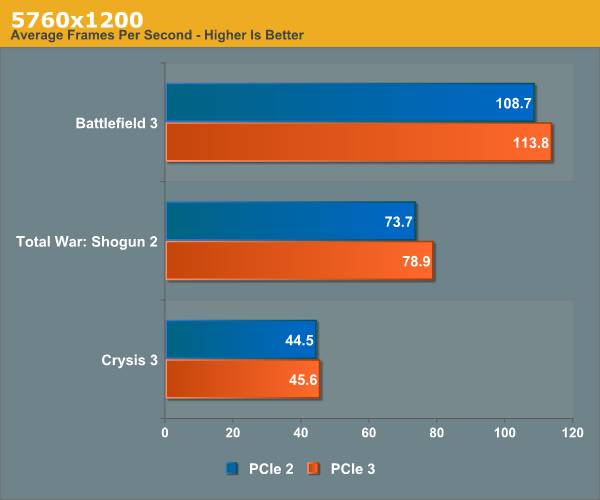

At 5760 things become more interesting, although again the performance gains aren’t particularly huge. Every game benefits at least marginally from PCIe 3 being enabled; Total War: Shogun 2 once again shows the greatest benefit at 7%, while Battlefield 3 picks up 5%, and Crysis 3 a hair over 2%.

Admittedly this is a small sample set, but it does paint a distinct picture of the PCIe bus being a bottleneck when using surround modes. NVIDIA has never gone into great detail on how their surround mode works, but based on this and previous data we’ve long assumed that they’re shuffling a significant amount of data around via the PCIe bus in order to keep the cards in sync and to give each card the portion of the frame buffer it will be responsible for sending to its attached display(s). This is as opposed to 2560x1440 and other single monitor modes, where the master card merely needs to collect completed frames and there’s no further redistribution involved.

In any case, while NVIDIA does not officially support PCIe 3 on SNB-E systems, between the Genesis and our own testbed we’ve yet to run into an issue in using it. Consequently we can’t see any good reason not to enable it, especially in surround mode setups. Based on our data the performance gains aren’t going to be huge (at least not in two-way SLI), but it’s free performance being left on the table. At the very least it’s worth a shot, since NVIDIA makes it easy to turn PCIe 3 support on and off.

Read More ...

Samsung's ATIV Book 9 Plus: 3200 x 1800 Display in an Ultrabook

Earlier tonight Samsung introduced a convertible featuring a 13.3-inch 3200 x 1800 display that runs both Windows 8 and Android. If the convertible form factor is a little too weird for you, Samsung also introduced an Ultrabook with the very same display.

Like many of the Ultrabooks announced in Taipei, the ATIV Book 9 Plus is a marriage of MacBook Air and rMBP. You get a form factor that's very similar to the 13-inch MBA, but with a display that's clearly aimed at the more expensive rMBP. Where the ATIV Book 9 Plus falls in pricing will be very interesting to see.

Internally, there's a familiar refrain: Core i5-4200U (Haswell ULT), DDR3L and an SSD. Like the ATIV Q, the SSD in this case is one of Samsung's own - the MZNTD128HAGM, a 6Gbps SATA M.2 drive. The machine ships with an integrated 55Wh battery and weighs 1.39 kg. Samsung claims up to 12 hours of battery life.
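For a rough sense of what Samsung's claim implies, dividing battery capacity by the claimed runtime gives the average platform power the machine would have to sustain. This is a back-of-the-envelope sketch only: the function name is my own, and real battery life depends on workload, brightness and usable capacity.

```python
def average_power_watts(battery_wh: float, runtime_hours: float) -> float:
    """Average draw needed to drain battery_wh in runtime_hours."""
    if runtime_hours <= 0:
        raise ValueError("runtime_hours must be positive")
    return battery_wh / runtime_hours


if __name__ == "__main__":
    # 55Wh battery and Samsung's claimed 12 hours, both from the text above.
    print(f"{average_power_watts(55, 12):.2f} W average")
```

That works out to well under 5W for the whole platform, display included, which is why the claim will be worth verifying once review units are available.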

The notebook looked good in person. I'm a fan of the hidden SD card reader with spring loaded door. Just like we saw with the ATIV Q, the ATIV Book 9 Plus was running Windows 8 but I fully expect that it's meant for Windows 8.1's improved handling of high DPI displays.

Read More ...

Samsung Galaxy NX Camera: Hands On

When Samsung launched the Galaxy Camera, I remember Brian telling me that it might not be the best point and shoot, but it's absolutely the direction cameras need to go in. After playing with the Galaxy Camera, I couldn't agree more. Cameras need to be connected, as sharing is such an important part of the whole point of taking photos. At tonight's Premiere 2013 event in London, Samsung unveiled its next flagship connected camera: the Galaxy NX Camera.

The Galaxy NX Camera is the first Android camera to support interchangeable lenses. As its name implies, the Galaxy NX Camera is fully compatible with all currently available Samsung NX lenses. The Galaxy NX Camera features a 20.3MP APS-C sensor (Update: Brian tracked down the exact sensor).

Internally there's a 1.6GHz quad-core SoC with a dedicated ISP. The platform runs Android 4.2.2 and supports LTE as well.

The big thing for me? We finally have an Android device that exposes full manual controls. Shutter speed, aperture and ISO are all adjustable just like on a traditional camera. While the layout took some getting used to, the frustrating lack of control from most camera experiences on Android just wasn't there. The combination of software flexibility and the ability to use good lenses will make this yet another step in the right direction.

Taking photos was just as natural as on any other mirrorless camera. The 4.8" display looks good and there's even a live level indicator to help make sure your shots come out straight. Start-up/shutdown times are impacted by the simple fact that the whole thing runs Android. I can't wait to get one of these in Brian's hands to see what he thinks once review samples are available. Modern smartphones have done a tremendous job of pulling focus away from traditional PCs for mobile computing, and it's very clear that the traditional camera market is set to be disrupted by these portable powerhouses even more going forward.

Read More ...

Hands On: Samsung Galaxy S 4 Active & Mini

While I was in Taiwan, Samsung announced the Galaxy S 4 Active - a ruggedized version of the Galaxy S 4. To simply call it ruggedized however is not doing the design justice. The SGS4 Active is IP67 certified, meaning it's fully sealed against dust and submersible in 1 meter of water for up to 30 minutes. The resulting design is a bit bigger than the standard Galaxy S 4, but in hand feel is actually not bad at all - especially when you consider what you get with the added dimensions. I noticed the added width in my time with the device. Thickness and weight were tough to gauge as the device was tethered to the demo table. Going by the numbers alone however, it's not all that substantial.

The finish is a bit different than the SGS4, and you get three physical buttons on the front instead of just one. The touch screen won't work under water, which is why you get additional physical buttons on the Active model.

Display resolution and PPI remain unchanged, but the Active ditches Super AMOLED in favor of a standard TFT LCD - which will be a plus for many I suspect. The only other change is in the camera department. The Active loses the 13MP sensor and sticks with an 8MP rear facing camera. The SoC is still the same quad-core 1.9GHz Qualcomm Snapdragon 600 (APQ8960AB) from many variants of the SGS4.

SGS4A vs SGS4 Comparison

| | SGS4 | SGS4 Active |
|---|---|---|
| Length | 136.6 mm | 139.7 mm |
| Width | 69.9 mm | 71.3 mm |
| Thickness | 7.9 mm | 9.1 mm |
| Weight | 130 g | 151 g |
| Display | 5.0-inch 1080p SAMOLED | 5.0-inch 1080p TFT LCD |
| Camera | 13 MP with LED Flash | 8 MP with LED Flash |
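Since I couldn't judge thickness and weight with the phone tethered, here is a quick sketch that works out the differences from the published figures in the comparison above. The dictionary keys and function name are illustrative.

```python
# Published dimensions from the SGS4A vs SGS4 comparison.
SGS4 = {"length_mm": 136.6, "width_mm": 69.9, "thickness_mm": 7.9, "weight_g": 130.0}
SGS4_ACTIVE = {"length_mm": 139.7, "width_mm": 71.3, "thickness_mm": 9.1, "weight_g": 151.0}


def deltas(base: dict, other: dict) -> dict:
    """Return {field: (absolute difference, percent difference)} of other vs base."""
    return {
        key: (other[key] - base[key], (other[key] - base[key]) / base[key] * 100.0)
        for key in base
    }


if __name__ == "__main__":
    for field, (absolute, percent) in deltas(SGS4, SGS4_ACTIVE).items():
        print(f"{field}: +{absolute:.1f} ({percent:.1f}%)")
```

Length and width grow by only a couple of percent, while thickness and weight account for most of the difference on paper.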

I also had the opportunity to play with the Galaxy S 4 mini, a 4.3" qHD version of the Galaxy S 4 with a Qualcomm MSM (dual-core 1.7GHz Snapdragon 400 with Adreno 305). I've found myself agreeing with Brian and Vivek quite a bit: 4.3 inches is really the ideal smartphone display size for me. The iPhone 5 is still a bit too small, and the SGS4 is a bit too big.

Read More ...

AMD Takes Samsung, Kabini in the ATIV Book 9 Lite & ATIV One 5 Style - Hands On

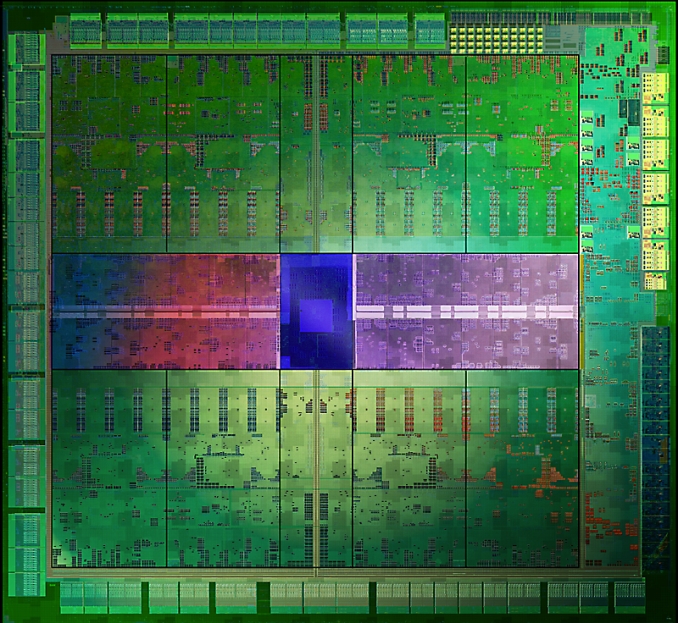

Samsung was curiously quiet on talking about specs with a couple of its newly announced PCs, and now we know why. The ATIV Book 9 Lite (affordable ultraportable) and ATIV One 5 Style (21.5-inch all-in-one) both feature AMD Kabini SoCs. As a refresher we're talking about four AMD Jaguar cores alongside an AMD GCN based GPU, all on a single die.

The ATIV Book 9 Lite features a 13.3-inch 1366 x 768 display, and will be available in touch and non-touch variants. The demo systems at Samsung's event had 4GB of DDR3 and a 128GB LiteOn SSD, although it's not clear if all shipping machines will use SSDs. We also don't know clock speeds at this point. In person the machine looks good and feels solid. We don't have any indication of pricing or availability yet unfortunately.

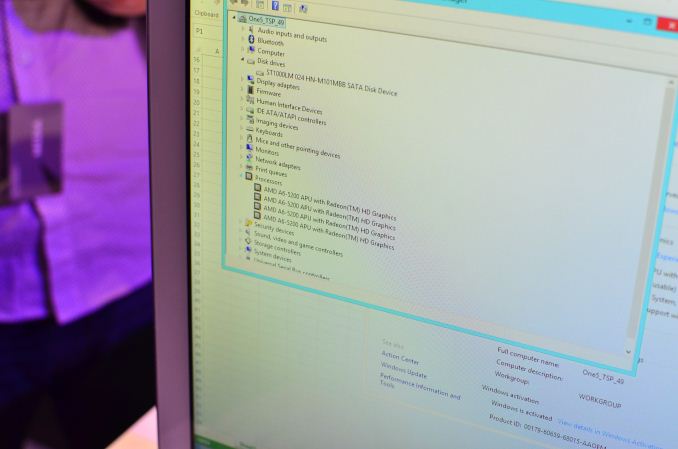

The ATIV One 5 Style on the other hand makes less of an attempt to hide the SoC vendor inside. The touch-enabled all-in-one features AMD's A6-5200 with four Jaguar cores running at up to 2.0GHz. The demo systems all featured hard drives. The 21.5-inch display features a 1920 x 1080 resolution and comes touch enabled.

The Galaxy S 4 styling makes the jump to an all-in-one relatively well. The big deal with Kabini really boils down to pricing. In my editorial on the topic I mentioned that if OEMs can take Kabini's cost savings and in turn give the end user a better overall experience (e.g. better display, storage, etc.) then AMD will have a real winner on its hands. None of the Kabini based systems at Samsung's Premiere 2013 event looked or felt cheap at all, and in some cases they did have features that I wouldn't have otherwise expected on a value machine (e.g. SSD in the ATIV Book 9 Lite). All that remains is for Samsung to deliver on the pricing front.

Read More ...

Samsung ATIV Q Hands On: 3200 x 1800 13.3" Tablet Running Windows 8 and Android

We just finished playing with Samsung's newly announced ATIV Q, a convertible tablet that runs both Windows 8 and Android 4.2.2. The display is the main attraction. The 13.3" panel features a 3200 x 1800 resolution (276 PPI). Although some of the screen shots from Samsung's presentation of the ATIV Q showed Windows 8.1 running, the demo units themselves ran vanilla Windows 8 and as a result had to rely on traditional Windows DPI scaling. I fully expect Windows 8.1 to make this 3200 x 1800 13.3" panel usable through new OS X-like DPI scaling upon its release.

Despite having to light 5.76 million pixels, the ATIV Q seemed bright indoors. The demo tablets were running at max brightness to begin with, which was comfortable (but not too bright at all). I'd be very curious to test outdoor brightness performance.
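The pixel density and pixel count quoted for this panel follow directly from its resolution and diagonal. Here's a small sketch of the arithmetic; the function names are my own.

```python
import math


def pixels_per_inch(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Pixel density along the diagonal of a panel."""
    return math.hypot(width_px, height_px) / diagonal_in


def total_pixels(width_px: int, height_px: int) -> int:
    """Number of pixels the panel has to drive."""
    return width_px * height_px


if __name__ == "__main__":
    print(f"{pixels_per_inch(3200, 1800, 13.3):.0f} PPI")
    print(f"{total_pixels(3200, 1800) / 1e6:.2f} million pixels")
```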

Internally the demo ATIV Q features a Core i5-4200U (Haswell ULT, dual-core + Hyper Threading, 2.6GHz max turbo, 3MB L3, Intel HD 4400). The demo systems featured 4GB of DDR3L. Powering the system is an integrated 47Wh battery.

The dual-OS functionality is what you'd expect: Android runs in a VM on top of Windows 8. Networking, storage and CPUs are all virtualized resources. Virtualization is the only way to enable Samsung's instant switching between Windows 8/Android on a single set of hardware. The switching process itself is pretty quick as Android is treated like another application running on Windows 8. Performance within Android seemed good enough, although the UI wasn't butter smooth. I'm not all that sure about the benefits of running Android on top of full blown Windows 8, but the option is there. There's even a dedicated key on the keyboard to switch between OSes.

Although the ATIV Q has a large surface area for a tablet, the overall design feels very light and portable. Lifting the display up to reveal the integrated keyboard is simple enough. The keyboard itself feels decent, although there's no room for a standard trackpad so you're left with a little nub that is reminiscent of (but nowhere near as functional as) what you'd find on an old ThinkPad. You glide your finger over the nub to move the mouse, with slim physical buttons at the edge of the keyboard for left/right click. Touching the display is definitely the way to go, but the ATIV Q absolutely needs Windows 8.1 style DPI scaling in order to make UI widgets in desktop mode better for touch.

Hidden in the display hinge is a USB 3.0 port, micro HDMI and a micro SD card reader.

I'm a big believer in convertibles. I don't know that anyone has gotten it perfect with design yet, but it's very good to see everyone trying. Battery life is a big unknown, as is pricing - that display can't be cheap. Given its light weight construction, the ATIV Q seems like it could actually be a very compelling option.

Read More ...

Samsung Premiere 2013: Galaxy & ATIV London Launch, Live Blog

We just got seated for Samsung's Galaxy & ATIV event in London. The event should begin in 5 minutes - check back here for live updates!

Read More ...

SilverStone Raven RV04 Case Review

A string of delays has kept the most radical entry in SilverStone's already unconventional Raven series from making its debut, and even now it's only materializing in limited quantities. Was it worth the wait?

Read More ...

NVIDIA Shield Gets a Launch Date and a Price Cut: June 27th for $299

Earlier this morning NVIDIA sent out a press release offering an update on the launch of their forthcoming handheld console, Shield. When Shield was first made available for pre-orders back in May, NVIDIA announced that the console would ship in late June with a price tag of $349. Today’s press release serves as an update for that, giving Shield a proper launch date and a new price.

Shield’s official launch date will be a week from now, June 27th, meeting NVIDIA’s original goal of shipping in late June (if only just). This is presumably for pre-orders; we don’t have any idea on what the launch quantity is like, or consequently whether there will be any Shield consoles available for “walk-in” sales on the 27th.

NVIDIA SHIELD

| | Shield |
|---|---|
| SoC | NVIDIA Tegra 4 - 1.9 GHz |
| Display | 5-inch 720p "Retinal" Display |
| RAM | 2 GB LPDDR3 |
| Wireless Connectivity | 2x2:2 802.11a/b/g/n WiFi + BT 3.0, GPS |
| Storage | 16 GB NAND, microSD Expansion |
| I/O | microUSB 2.0, mini-HDMI, 3.5mm headphone |
| OS | Android 4.2.1, Updates from NVIDIA |
| Price | $299.00, Shipping June 27th |

Wrapping things up, we’ll have a full review on Shield after it launches next week. So in the meantime stay tuned.

Read More ...

Rumors on Ivy Bridge-E: 3 SKUs, September Release?

A performance enthusiast always wants to know what is coming next. This morning HardwareLuxx published a rather interesting and official looking Intel slide detailing information about the upcoming enthusiast platform, Ivy Bridge-E. While we cannot confirm the legitimacy of the slide, it does follow several patterns we had been assuming for a while.

Firstly the launch date is a little surprising. Until now we have all been discussing October/November, but this slide puts the launch squarely at the beginning of September, between the 4th and the 11th. This is around the same date as IDF San Francisco, held on the 10th-12th of September.

As with previous launches, there will be a strict NDA date for media to publish results and demonstrations. Judging by what is written there does not seem to be much room for an upgrade to new chipsets, meaning that X79 is still the platform of choice at this time. I would not mind seeing an X89 with a full set of SATA 6 Gbps and USB 3.0 in the near future.

Alongside release details, CPU-World has posted information on the processor SKUs which are expected to be released. The top SKU is to be an i7-4960X, featuring 6 cores (12 threads) at 3.6 GHz which turbos up to 4 GHz and a total of 15MB L3 cache. This is going to be our top end SKU, which normally retails for $999-$1099. Below this is the i7-4930K, also 6 cores (12 threads) but set at 3.4 GHz with turbo up to 3.9 GHz and 12MB L3 cache. The final SKU should be the most interesting – the i7-4820K. The –K moniker suggests this part is unlocked, but unfortunately it is only a quad core (8 threads) part with 10MB L3 cache.

Ivy Bridge-E SKUs (predicted)

| SKU | Cores / Threads | Speed / Turbo | L3 Cache | TDP | Memory |
|---|---|---|---|---|---|
| i7-4820K | 4/8 | 3.7 GHz / 3.9 GHz | 10 MB | 130 W | DDR3-1866 |
| i7-4930K | 6/12 | 3.4 GHz / 3.9 GHz | 12 MB | 130 W | DDR3-1866 |
| i7-4960X | 6/12 | 3.6 GHz / 4.0 GHz | 15 MB | 130 W | DDR3-1866 |

Source: HardwareLUXX, CPU-World
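To make the rumored figures listed above a little easier to compare, here is a minimal sketch that derives L3 cache per core and turbo headroom for each part. All input values are the unconfirmed numbers from the leak; the class and function names are purely illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sku:
    name: str
    cores: int
    base_ghz: float
    turbo_ghz: float
    l3_mb: int


# Values copied from the rumored SKU list; none are confirmed by Intel.
RUMORED_SKUS = [
    Sku("i7-4820K", 4, 3.7, 3.9, 10),
    Sku("i7-4930K", 6, 3.4, 3.9, 12),
    Sku("i7-4960X", 6, 3.6, 4.0, 15),
]


def l3_per_core_mb(sku: Sku) -> float:
    """L3 cache divided evenly across the cores, in MB."""
    return sku.l3_mb / sku.cores


def turbo_headroom_mhz(sku: Sku) -> int:
    """Gap between base and maximum turbo clock, in MHz."""
    return round((sku.turbo_ghz - sku.base_ghz) * 1000)


if __name__ == "__main__":
    for sku in RUMORED_SKUS:
        print(f"{sku.name}: {l3_per_core_mb(sku):.1f} MB L3/core, "
              f"+{turbo_headroom_mhz(sku)} MHz turbo")
```

If the leak holds, the quad-core part would carry the same L3 per core as the flagship, while the i7-4930K would get less.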

Read More ...

LG to include Snapdragon 800 MSM8974 in next G Series Smartphone

Hot off the heels of the Snapdragon 800 benchmarking event in San Francisco, LG has dropped a press release noting that its next G series smartphone will include the aforementioned SoC. Although LG didn't call it out explicitly, this is undoubtedly the Optimus G2 which we've heard rumblings about, successor to last year's Optimus G which included Snapdragon S4 Pro APQ8064.

LG mentions 75 percent better performance and tight integration with Snapdragon 800 in its future G series smartphone. No word on availability or when the announcement will drop, but I expect that will come later this fall.

Source: LG

Read More ...

NVIDIA to License Kepler and Future GPU IP to 3rd Parties

Earlier today NVIDIA announced that it would begin licensing its Kepler GPU architecture to 3rd parties. This is a sensible next step for NVIDIA, but an unprecedented one among the two remaining discrete PC GPU suppliers.

Note that what NVIDIA is announcing today is contrary to AMD’s semi-custom approach to SoC production. AMD is offering to build (semi) custom tailored silicon to customer needs, while NVIDIA is taking a more ARM-like approach and offering its GPU IP to 3rd parties for integration on their own. In other words, NVIDIA is looking to compete with ARM and Imagination Technologies rather than AMD or Qualcomm.

In addition to its GPU architecture, NVIDIA is now also open to licensing its visual computing patents to 3rd parties. The visual computing patent portfolio includes all of NVIDIA’s 5500 patents in the area, as well as CUDA.

NVIDIA views its IP licensing business as additive rather than in lieu of its current GPU and SoC businesses. Time will tell whether or not this ends up being the case, but it’s quite obvious that at NVIDIA’s current size it wouldn’t be able to go after all GPU markets on its own - enabling others to do so makes a lot of sense from that perspective.

It Doesn’t End with Kepler: Future NVIDIA GPUs to Be Licensed

I asked NVIDIA about future GPU architectures beyond Kepler, and the answer was pretty awesome: future GPU architectures will be available to licensees at the time of tape out by NVIDIA. Licensees can choose whether or not to adopt an architecture right away or wait for any potential revisions, similar to what ARM does with its cores (e.g. Tegra 4i uses a later revision of the Cortex A9 core). This move has huge implications. Theoretically a licensee could bring an NVIDIA GPU to market before NVIDIA itself, although that does seem pretty unlikely. What we could see however is a licensee introduce a GPU configuration that NVIDIA had no intentions of bringing to market.

The model makes a lot of sense and expands NVIDIA’s role in the computing world beyond its life in PCs. In the PC space, NVIDIA built discrete GPUs that system integrators (and end users) put in their machines. In the post-discrete world where SoCs rule the landscape, NVIDIA believes it can be just as relevant by doing the same. The difference here is instead of NVIDIA building cards out of its GPUs and selling them, the SoC manufacturer would be responsible for all integration. It’s the same playbook, just modified to deal with the new world around it.

Targets for Integration

NVIDIA is quick to point out that Kepler is its first mobile to high-end GPU architecture. Capable of scaling from smartphones (Logan/Tegra 5 next year) to supercomputers (Titan), Kepler is inherently very flexible and makes a lot of sense as NVIDIA’s first target for its IP licensing program.

Although mobile is an obvious fit for Kepler licensing, NVIDIA hopes its GPUs will be used in new markets as well. NVIDIA’s refrain sounds quite similar to AMD’s. Neither knows where the next big market will be, but both want to be prepared for it when the time comes. It won’t be too long before smartphones and tablets reach their own Ultrabook moments when performance becomes good enough for the majority of the market and attention shifts elsewhere. When that happens, NVIDIA (and AMD, and others) believe that opportunities for continued growth will appear in new markets (e.g. TVs, wearables, other connected compute devices).

The Compute Connection

Although it’s clear that the greatest point of interest with today’s announcement revolves around getting NVIDIA’s GPU designs and graphics IP into new products, at a high level perspective NVIDIA has made it clear they’re licensing their visual computing technology, and that this isn’t just a play for graphics. As part of keeping themselves open to new markets, NVIDIA has told us that they’re essentially willing to do whatever makes financial sense as far as licensing goes, with both compute and graphics on the table. So at the same time as licensing out their graphics technology, NVIDIA has also opened the door to licensing out CUDA and their other GPU compute innovations if the price is right.

This can lead to several possibilities, ultimately relying on who’s interested and what market they represent. At a most basic level, licensing an NVIDIA GPU will get the buyer CUDA – binary compatibility and all – thanks to the fact that this would be the same hardware CUDA already runs on. However in the new “anything is possible” licensing system of NVIDIA, CUDA could also be licensed out separately. Device makers who simply want to add CUDA support to their devices, either to take advantage of some of the runtime’s unique functionality or merely to enable easier porting from existing NVIDIA systems, can now license the necessary CUDA IP from NVIDIA. The GPU computing market is still very young with a number of competing technologies, but thus far based on actual usage CUDA has proven to be a front runner compared to more widely supported (and open) environments such as OpenCL, so while NVIDIA is still trying to bring further users onto CUDA, they also have a CUDA user base they can leverage today.

The most obvious avenue for any potential CUDA licensing would be HPC users looking for greater integration beyond today’s CPU + GPU setups we see in systems like Titan. However NVIDIA is also pursuing this with forthcoming SoCs like Logan and further products integrating their Denver CPU, so it’s not a market that’s being ignored by NVIDIA. On the other hand more novel uses of GPU compute in the embedded space, encompassing everything from TVs to automotive to traditional appliances, are areas that have been identified as potential growth avenues for GPU computing by NVIDIA and other GPU firms in the past, not all of which NVIDIA is directly serving right now. In all of these cases licensing can focus on CUDA, or even more broadly just licensing specific NVIDIA compute technologies that would be useful to include in these products; even obscure technologies like Kepler’s low-overhead soft-ECC implementation could potentially be of value as a licensed technology.

NVIDIA Can Now Go After Apple & Samsung Business

The cynic in all of us can point to NVIDIA’s struggles with getting Tegra 4 into devices and out the door as motivation behind wanting to license its GPU IP. Beating Qualcomm has proven to be very difficult. Even Intel has had a wonderfully difficult time of making its way into the mobile space. So is that what this licensing play is all about? To an extent, perhaps.

Had Tegra 4 been out and available, I think it’s safe to say that the SoC would likely have been used in at least some previous Tegra 3 design wins. Tegra 4i will hope to do the same for smartphones. I see no reason for these businesses to stop, but I think it’s quite obvious that there’s a huge gap between where the Tegra business is today and where Qualcomm is.

By licensing its GPU IP, NVIDIA opens itself up to additional customers (and revenue) that otherwise wouldn’t have considered it. I doubt Apple would ever use an off-the-shelf Tegra SoC, but NVIDIA can now compete for Apple SoC business alongside Imagination Technologies. Should Apple decide to one day drop Intel altogether and bring all of its CPU design in house, it now has a GPU vendor it can license cores or technologies from - just like it does with ARM. The exact same goes for Samsung.

Both Apple and Samsung have histories of licensing GPU IP from Imagination. NVIDIA now has a chance of going after that business.

The same could be said at the other end of the spectrum. The mobile SoC wars we saw unfold over the past few years are about to heat up in the server market. Where integration of high performance GPU architectures makes sense in servers, NVIDIA now has an offering to those that are interested.

GPUs Today, LTE Tomorrow?

NVIDIA isn’t officially announcing plans to license its Icera modem IP, but I’m told that’s the next logical step. NVIDIA is investing handsomely in Tegra 4i and its modem architectures, but similar to its GPU business - in order to address a much larger market, it will have to consider licensing that IP.

Final Words

Although unexpected from a timing perspective (we had no hint that NVIDIA was going to drop this on us today), NVIDIA’s move to license its GPU IP is very sensible. All growth markets where compute is concerned are moving forward with high levels of integration. For NVIDIA to not only remain relevant in the broader world but also grow with it, it must have a strategy in place for markets where integration is required.

Where those new markets are, and ultimately what this means for NVIDIA’s financials is beyond the scope of our analysis - it’s simply the right (only?) move.

Read More ...

Snapdragon 800 (MSM8974) Performance Preview: Qualcomm Mobile Development Tablet Tested

We’ve written about Snapdragon 800 (MSM8974) before. For those unfamiliar, this is Qualcomm’s new flagship SoC with four Krait 400 CPUs at up to 2.3 GHz, Adreno 330 graphics, and the latest modem IP block with Category 4 LTE. Qualcomm is finally ready to show off MSM8974 performance on final silicon and board support software, and invited us and a few other publications out to San Francisco for a day of benchmarking and poking around. We looked at MSM8974 on both the familiar MSM8974 MDP/T, a development tablet used both by Qualcomm and 3rd parties to develop drivers and platform support, and the MSM8974 MDP phone, both of which have been publicly announced for some time now.

The tablet MDP is what you’d expect, an engineering platform designed for Qualcomm and other third parties to use while developing software support for features. Subjectively it’s thinner and more svelte than the APQ8064 MDP/T we saw last year, but as always OEMs will have the final control over industrial design and what features they choose to expose. Display is 1080p on the tablet and 720p on the phone, a bit low considering the resolutions handset and tablet makers are going for (at least 1080p on phone and WQXGA on tablets) so keep that in mind when looking at on-screen results from benchmarks. Read on for our full Snapdragon 800 performance preview.

Read More ...

Building a mini-ITX Haswell System with ASUS [video]

For our final installment, JJ put together a bunch of components for a mini-ITX Haswell build and took us through his build process. The motherboard itself is a Z87-I Deluxe, an upcoming mini-ITX Z87 board from ASUS. Also in the video you'll see JJ install ASUS' mini-ITX optimized GeForce GTX 670 DC Mini card. Finally, the chassis is pretty cool - it's the Lian Li PC-Q30.

Read More ...

Ask AnandTech: Tablets at Work, How Important is Backwards Compatibility?

Last week you guys did an awesome job with the discussion around the role of tablets in the workplace. There are a good number of you who have already embraced tablets for work, or who at least see the potential for the form factor at work if other hardware requirements are met. Now comes the next level, and honestly a question that I'm asked quite often when meeting with manufacturers. As far as work tablets are concerned, how important is backwards compatibility with existing x86/Windows applications?

The question obviously lends itself to a Windows 8 vs. Windows RT debate, but it's actually even bigger than that. We're really talking about Windows 8 vs. Windows RT or Android or iOS in the workplace.

While the previous question could definitely influence future design decisions, your answers here help answer more fundamental questions of what OSes to support for OEMs looking to play in the enterprise/business tablet space.

Respond in the comments below!

Read More ...

NVIDIA @ ISC 2013: CUDA 5.5 Released & More

As the 2013 International Supercomputing Conference continues this week, product and technology announcements continue to trickle out of the show. NVIDIA of course is no stranger to this show, and coming off their success with Titan last year are ever increasing their presence to try to capture a larger share of the lucrative HPC market. To that end NVIDIA is releasing several announcements this morning that we wanted to briefly cover.

The big news out of ISC 2013 for NVIDIA is that CUDA 5.5 is now out of private beta and onto its public release candidate. Though CUDA 5.5 is just a point release for CUDA, it does bring several significant changes for developers. The biggest change of course is that this is the first version of CUDA to offer ARM support, going hand in hand with the launch of the Kayla development platform and ahead of next year’s launch of NVIDIA’s Logan SoC.

CUDA on ARM is a significant point of interest for NVIDIA for a couple of reasons. On the consumer side of things NVIDIA is hoping to ultimately leverage CUDA for compute on SoC based devices, similar to what they have done in the PC space over the last half-decade. For the ISC crowd however the focus is on what this means for NVIDIA’s HPC ambitions, as an ARM based HPC environment is something that can be powered exclusively by NVIDIA processors, as opposed to today’s common scenario of pairing Tesla compute cards with x86 AMD and Intel processors. Though if nothing else, in the more immediate future this is NVIDIA ensuring they aren’t left behind by the continuing growth of ARM device sales.

Along with bringing ARM support to CUDA, CUDA 5.5 also introduces cross compilation support to the toolkit, allowing ARM binaries to be built either natively on ARM systems or much more quickly on faster x86 systems. Other changes include several different improvements in MPI and HyperQ, such as MPI workload prioritization and HyperQ gaining the ability to receive jobs from multiple MPI processes on Linux systems.

Finally, on a broader view CUDA 5.5 will also be bringing some small but important changes that most developers will see in one way or another. On the development side of things NVIDIA is rolling out a new guided performance analysis tool for use with their Visual Profiler tool and with the Nsight Eclipse Edition IDE in order to help developers better identify and resolve performance bottlenecks. Meanwhile on the deployment side of things NVIDIA is finally rolling out a static compilation option, which should simplify the distribution of CUDA applications. It allows the necessary CUDA libraries to be statically linked into applications, rather than relying solely on dynamic linking and requiring that the necessary libraries be bundled with the application or the CUDA toolkit installed on the target computer.

Moving on, along with the CUDA 5.5 announcements NVIDIA is also using ISC to showcase some of the latest projects being developed with NVIDIA’s GPUs. NVIDIA’s major theme for ISC is neural net computing, with a pair of announcements relating to that.

On the academic front, Stanford has put together a new cluster to model neural networking for researching how the human brain learns. The 16 server cluster is capable of modeling an 11.2 billion parameter neural network, which is 6.5 times bigger than the second largest such network, a 1.7 billion parameter model put together by Google in 2012. The cluster is the basis of a separate paper being released this week for the International Conference on Machine Learning, which is simultaneously taking place this week in Atlanta.

Meanwhile on the business front, Nuance, the company behind speech recognition software such as the Dragon series, is being tapped for ISC as a neural network case study. Nuance has used neural networking techniques for years as the basis of the machine learning systems their software uses to train itself, and more recently the company has begun integrating GPUs into that work. Specifically, the company is now using NVIDIA’s GPUs to accelerate the training process, cutting down the amount of time needed to train a model from weeks to days, and in turn allowing the company to experiment with many more new models in the same period of time. The ultimate result is that the company can test and refine models more frequently, with these refined models becoming the basis of future products.

Finally, although Titan is no longer the #1 supercomputer in the world – having been bumped down to merely #2 on the latest Top500 list – word comes from NVIDIA that Titan has finally passed all of its necessary acceptance tests. As is typical for supercomputers, they are unveiled and listed before undergoing full acceptance testing, which means final acceptance may not come until months later. In the case of Titan some unexpected issues were discovered with the PCIe connectors on its motherboards, with excess gold in the connectors leading to solder issues. The issue was repaired in April, and Titan was resubmitted for an acceptance testing pass, which as of last week it has since passed and finally entered full production.

Read More ...

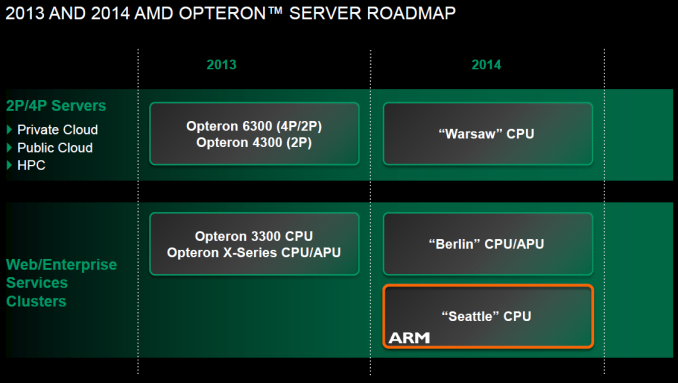

AMD Evolving Fast to Survive in the Server Market Jungle

There are two important trends in the server market: it is growing and it is evolving fast. It is growing fast as the number of client devices is exploding. Only one third of the world population has access to the internet, but the number of internet users is increasing by 8 to 12% each year. Most of the processing now happens on the server side (“in the cloud”), so the server market is evolving fast: the more efficiently an enterprise can deliver IT services to all those smartphones, tablets and PCs, the higher its profit margins and its chances of survival.

And that is why there is so much interest in the new star, the “micro server”. Today, AMD has laid out some ambitious plans for this part of the server market.

Read More ...

MSI GT70 Dragon Edition Notebook Review: Haswell and the GTX 780M

Coinciding with Intel's launch of Haswell, NVIDIA updated their mobile GPUs with a bomb of a part: a fully enabled GK104. Today we have MSI's flagship gaming notebook, the GT70 Dragon Edition, featuring three SSDs in RAID 0, a Haswell quad core, and a GeForce GTX 780M.

Read More ...

BenQ XL2720T Gaming Monitor Reviewed

On the very first monitor review I did for AnandTech, I skipped over the input lag tests. I didn’t have a CRT I could use for a reference, and as someone that isn’t a hard-core gamer themselves, I wasn’t certain how much overlooking them would really be missed. Well, I was wrong, and I heard about it as soon as it was published. Since that initial mistake I’ve added two CRT monitors to the testing stable and tried to find the ideal way to test lag, which I’m still in search of.

To serve the large, and vocal, community of hard core gamers, there are plenty of monitors out there that directly target them. One such display is the BenQ XL2720T, a 120 Hz LCD that’s also used in many sponsored gaming tournaments. Beyond its gaming pedigree, I was interested to see if it also performed well as a general purpose display, or if it really is just designed for a small subset of the market.

Read More ...

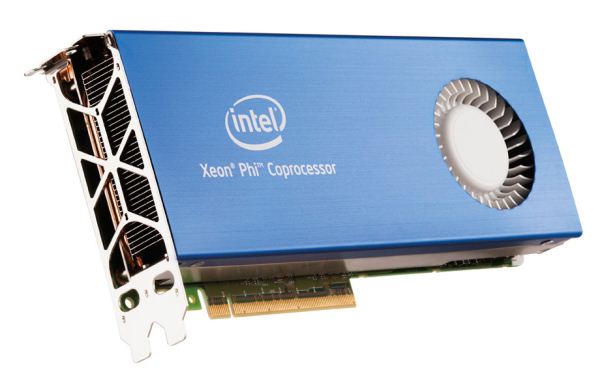

June 2013 Top500 List Published: Xeon Phi Takes Top Spot

Kicking off this week is the International Supercomputing Conference in Leipzig, Germany, one of the two major supercomputing/high performance computing conferences of the year. There will be several announcements coming out of ISC this week – a few of which we’ll see later this week – but perhaps the most visible announcement that comes out of ISC is the annual summer refresh of the Top500 supercomputer list.

With that in mind, the June 2013 Top500 list has been released, and as is usually the case a new supercomputer has entered the fray at the top of the list. The latest addition and new #1 supercomputer is the Chinese National University of Defense Technology's Tianhe-2 supercomputer, which hits the list with a measured Linpack performance of 33.86PFLOPS, nearly double the previous #1, Oak Ridge National Laboratory’s Titan. Like Titan, Tianhe-2 is another hybrid system, using a custom interconnect to tie together numerous systems containing both traditional Intel Xeon CPUs and Intel’s Xeon Phi co-processors.

Top500 Top 5 Supercomputers

| Supercomputer | Architecture | Performance (Rmax, TFLOPS) | Power Consumption (kW) | Efficiency (MFLOPS/W) |
|---|---|---|---|---|
| Tianhe-2 | Xeon + Xeon Phi | 33862.7 | 17808 | 1901.5 |
| Titan | Opteron + Tesla | 17590.0 | 8209 | 2142.7 |
| Sequoia | BlueGene/Q | 17173.2 | 7890 | 2176.5 |
| K Computer | SPARC64 | 10510.0 | 12660 | 830.1 |
| Mira | BlueGene/Q | 8586.6 | 3945 | 2176.5 |

Looking at the latest data, what’s particularly astounding about Tianhe-2 is simply how large it is. Placing on the Top500 list requires both efficiency and brute force, and in the case of Tianhe-2 there’s an unprecedented amount of brute force in play. The official power consumption rating for Tianhe-2 is 17.8 megawatts, more than double Titan’s 8.2MW. Even going back several years, the second largest machine to hit the Top500 list (and thus far the only other machine over 10MW) is the K supercomputer, at 12.7MW. Simply put, from a power perspective Tianhe-2 is roughly 40% larger than any previous Top500 computer.

When it comes to efficiency the matter won’t technically be settled until the formal unveiling of the Green500 list later this week, but since it’s just a derivation of the Top500 it’s fairly easy to calculate. To that end while Tianhe-2 isn’t quite as efficient as the BlueGene/Q and Tesla systems that sit in the top 5, both of which are just over 2,100 MFLOPS/watt, at 1901 MFLOPS/watt Tianhe-2 is at least in the same general category and generation as these other systems. With all of these supercomputers interconnects play a big part in performance, and for a system as large as Tianhe-2 that’s especially true. Unfortunately very few details have been published about the custom interconnect Tianhe-2 uses, so there’s very little to say on the matter other than that whatever it is works well enough to scale across Tianhe-2’s 16,000 nodes.
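As a rough illustration of that derivation, the efficiency column can be recomputed from the Rmax and power figures in the table above. This is only a sketch: the function and variable names are made up for the example, and the official Green500 ranking may apply its own measurement rules.

```python
# Rmax (TFLOPS) and power (kW) copied from the Top 5 table above.
systems = {
    "Tianhe-2": (33862.7, 17808),
    "Titan": (17590.0, 8209),
    "Sequoia": (17173.2, 7890),
    "K Computer": (10510.0, 12660),
    "Mira": (8586.6, 3945),
}


def mflops_per_watt(rmax_tflops, power_kw):
    """Convert TFLOPS and kW to MFLOPS per watt (hypothetical helper)."""
    # 1 TFLOPS = 1e6 MFLOPS and 1 kW = 1e3 W
    return (rmax_tflops * 1e6) / (power_kw * 1e3)


efficiency = {name: mflops_per_watt(rmax, kw) for name, (rmax, kw) in systems.items()}

# Print the systems from most to least efficient.
for name, value in sorted(efficiency.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name:<12}{value:8.1f} MFLOPS/W")
```

Sorting by efficiency rather than by Rmax puts the two BlueGene/Q systems ahead of Titan and Tianhe-2, with K Computer well behind.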

Wrapping things up, with the introduction of Tianhe-2 this marks the second hybrid system in as many editions of the list to take the top spot. Although hybrid systems have been in the Top500 for a few years now, they have continued to become more popular due to the high efficiency and density of GPUs and GPU-like processors. Though straight CPU systems such as BlueGene/Q and its ilk are by no means out of the running, it will be interesting to see if this is part of a larger trend. According to the Top500 list the number of hybrid systems is actually down – from 62 on the last list to 54 now – but on the other hand the continued improvement and increasing flexibility of GPUs and other co-processors, not to mention the fact that now even Intel is involved in this field, means that there’s more effort than ever going into developing these hybrid systems. So whether hybrids will continue to push out straight CPU systems at the top of the list will be an interesting thing to keep an eye on over the next couple of years.

Read More ...

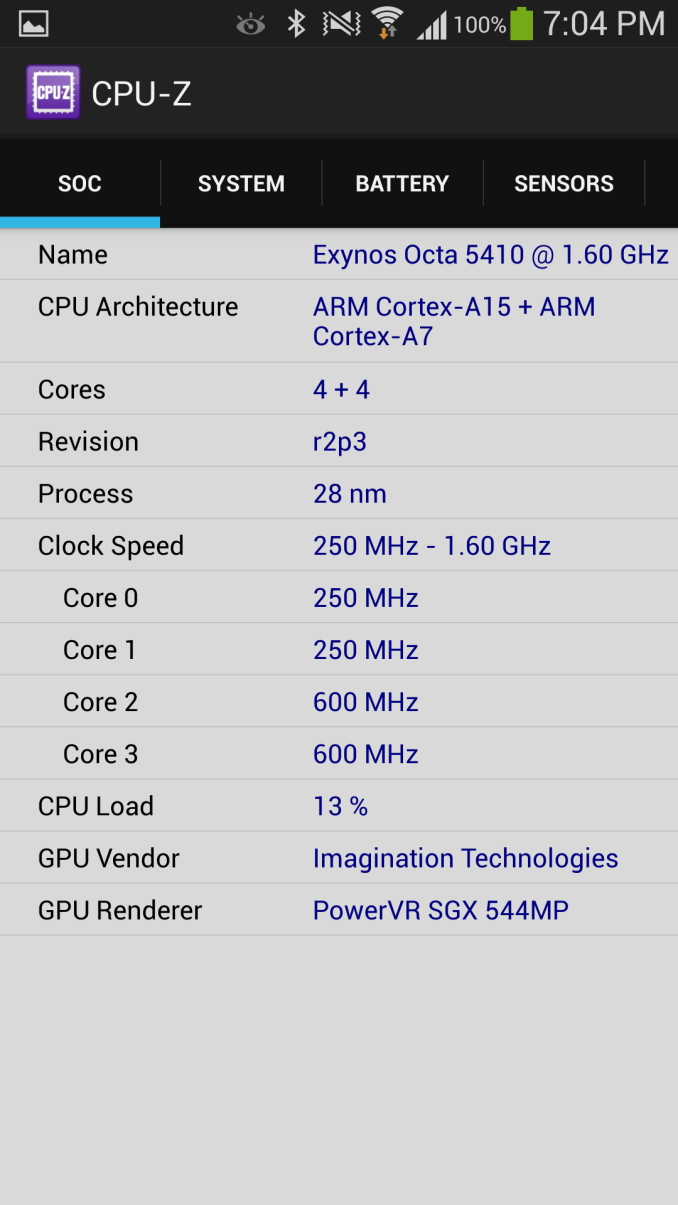

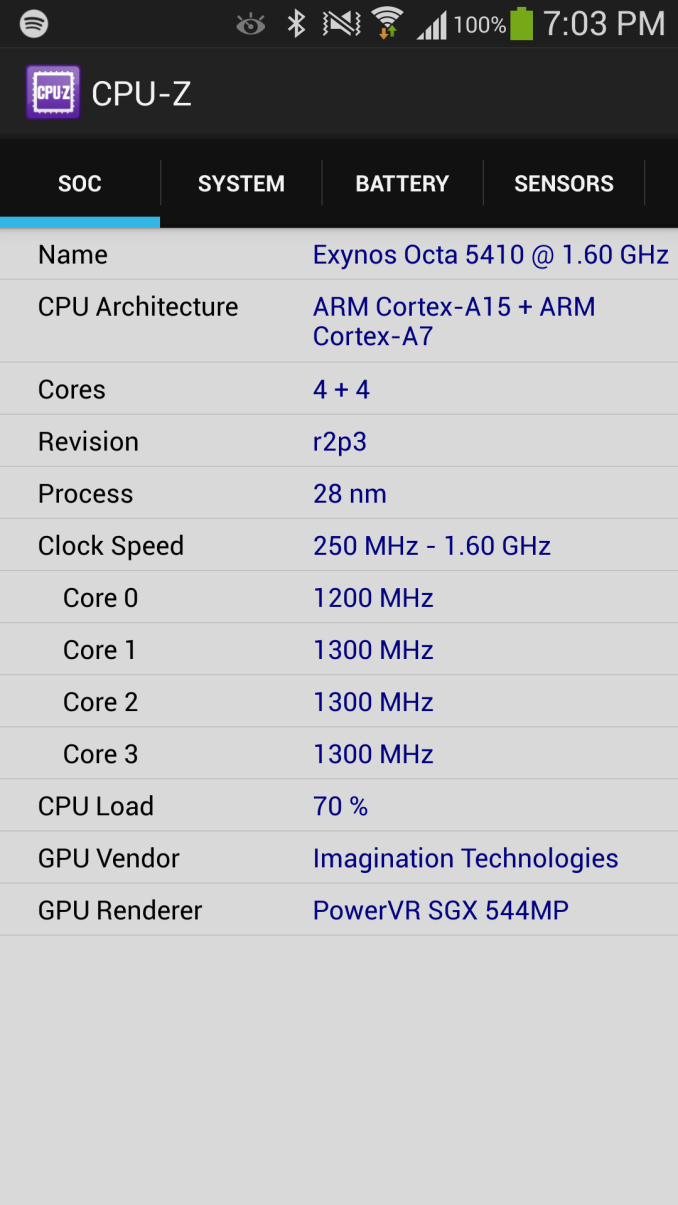

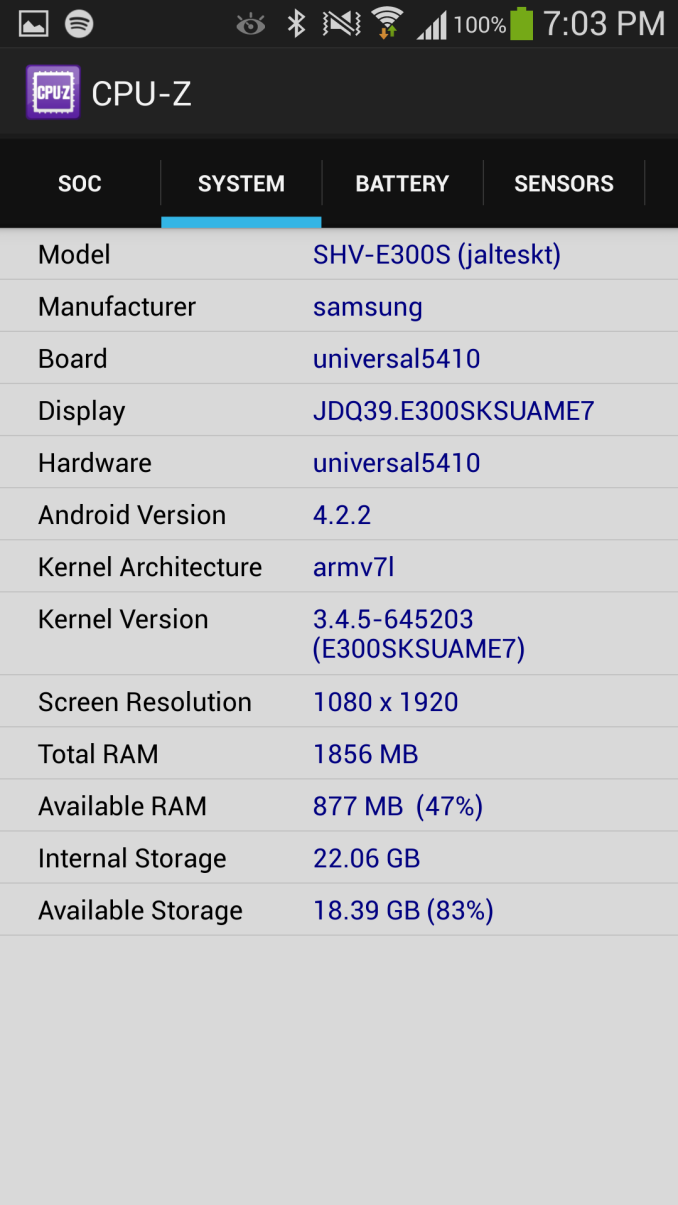

CPUID's CPU-Z Arrives on Android via Google Play

About three years ago, I remember one of the biggest problems I had while sorting out phones was figuring out what SoCs were inside them. Manufacturers weren't yet open to disclosing what silicon was inside, and there wasn't any SoC messaging or branding from any of the numerous silicon vendors. There was a pervasive sense of contentedness everywhere you turned with the current model where what was inside a handset was largely a black box. I remember wishing for a tool like CPU-Z for Android so many times, and I remember trying to explain to someone else just how dire the need was for something like it.

Today vendors and operators are considerably less opaque about what's inside their devices (proving yet again that the 'specs are dead' line is just false hyperbole), but unless you know where to look or who to ask it's still sometimes a mystery. For end users and enthusiasts, there remained the need for something beyond lots of searching, poring through kernel sources, or reading kernel messages (dmesg) on devices.

We now have CPU-Z for Android from CPUID, and it works just like you'd expect it to if you're familiar with it on the desktop. There's the SoC name itself, max and min clocks, current clocks for the set of cores, and the other platform details exposed by Android. There are other apps that will get you the same data, but none of them organize it or present it as well as CPU-Z does now.

It isn't perfect (for example, I wish 'Snapdragon 600' devices would show APQ8064T and APQ8064AB when appropriate), but virtually every device I've run it on has popped up with the appropriate SoC and clocks inside. It's clear to me that CPUID is doing some device fingerprinting to figure out what particular silicon is inside, which is understandable given the constraints of the Android platform and the sandbox model. Right now however, CPU-Z for Android is quite the awesome tool.

Source: CPUID, Google Play

Read More ...

UPDATED! AMD Announces FX-9590 and FX-9370: Return of the GHz Race

Today at E3 AMD announced their latest CPUs, the FX-9590 and FX-9370. Similar to what we’re seeing with Richland vs. Trinity, AMD is incrementing the series number to 9000 while sticking with the existing Piledriver Vishera architecture. These chips are the result of tuning and binning on GlobalFoundries’ 32nm SOI process, though the latest jump from the existing FX-8350 is nonetheless quite impressive.

The FX-8350 had a base clock of 4.0GHz with a maximum Turbo Core clock of 4.2GHz; the FX-9590 in contrast has a maximum Turbo clock of 5GHz and the FX-9370 tops out at 4.7GHz. We’ve asked AMD for details on the base clocks for the new parts, but so far have not yet received a response; we're also missing details on TDP, cache size, etc. but those will likely be the same as the FX-8350/8320 (at least for everything but TDP).

6/13/2013 Update: We have now received the most important pieces of information from AMD regarding the new parts. The base clock on the FX-9590 will be 4.7GHz and the base clock of the FX-9370 will be 4.4GHz, so in both cases it will be 300MHz below the maximum Turbo Core speed. The more critical factor is also the more alarming aspect: the rumors of a 220W TDP have proven true. That explains why these parts will target system integrators first, and it earns the FX-9000 series the distinction of having a higher TDP than any previous AMD CPU, but it also raises some serious concerns. With proper cooling, there's little doubt that you can run a Vishera core at 5.0GHz for extended periods of time, but 220W is a massive amount of power to draw for just a CPU.

To put things in perspective, the highest TDP part ever released by AMD prior to the FX-9000 series is the 140W TDP Phenom II X4 965 BE. For Intel, the vast majority of their chips have been under 130W, but a few chips (e.g. Core 2 Extreme QX9775, Core i7-3970X, and most of the Xeon 7100 series PPGA604 parts back at the end of the NetBurst era) managed to go above and beyond and hit 150W TDPs. So we're basically looking at a 76% increase in TDP relative to the FX-8350 to get a 19% increase in maximum clock speed. It's difficult to imagine the target market for such a chip, but perhaps a few of the system integrators expressed interest in a manufacturer-overclocked CPU.
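The percentages above can be checked with a few lines of arithmetic. This sketch assumes a 125W TDP for the FX-8350, a figure not stated in this article; the clocks and the 220W figure come from the text, and the helper name is made up for the example.

```python
# Turbo clocks and the FX-9590 TDP are taken from the text above.
# ASSUMPTION: the FX-8350 TDP of 125 W is not given in the article.
fx8350 = {"turbo_ghz": 4.2, "tdp_w": 125}
fx9590 = {"turbo_ghz": 5.0, "tdp_w": 220}


def pct_increase(old, new):
    """Percentage increase going from old to new (hypothetical helper)."""
    return (new - old) / old * 100


tdp_gain = pct_increase(fx8350["tdp_w"], fx9590["tdp_w"])
clock_gain = pct_increase(fx8350["turbo_ghz"], fx9590["turbo_ghz"])

print(f"TDP increase:         {tdp_gain:.0f}%")
print(f"Turbo clock increase: {clock_gain:.0f}%")
```

Under that assumption the TDP grows roughly four times as fast as the maximum clock, which is the imbalance the paragraph above is pointing at.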

For those who remember the halcyon days of the NetBurst vs. Sledgehammer Wars, the irony of AMD pimping the “first commercially available 5GHz CPU” can be a bit hard to take. Yes, all other things being equal (cache sizes, latency, pipeline depth, power use, etc., etc…), having a higher core clock will result in better performance. The stark reality is that all other things are almost never “equal”, however, which means pushing clocks to 5GHz will improve performance over the existing FX-8000 parts but clock speed alone isn’t enough. AMD continues to work on their next generation architecture, Steamroller, which will debut later this year in the Kaveri APUs as a 28nm part, but in the interim we have to make do with the existing parts.

As we covered extensively last week, Intel has just launched their latest Haswell processors, and on the desktop we’re seeing relatively small performance gains. That’s somewhat interesting as this is a “Tock” in Intel’s Tick-Tock cadence, which means a new architecture and that usually means improved performance. However, similar to the last Tock (Sandy Bridge), Haswell is more of a mobile-focused architecture, which means performance gains on the CPU are minor but power and battery life gains can be significant, especially in lighter workloads. Also similar to the “Tock” when we moved from Clarkdale to Sandy Bridge, the jump in graphics performance with the HD 5000 series parts (and even more so with the Iris and Iris Pro parts) can be quite large relative to Ivy Bridge.

So Intel has been relatively tame on the CPU performance increases this time around, and instead they’ve focused on reducing typical power use and improving graphics. Meanwhile AMD’s answer on their high-end desktop platforms is…more clock speed. We’ll have full reviews of the new parts in the future, as the new CPUs are not yet available, but given the ability of Vishera to overclock quite easily to the 4.8-5.2GHz range on air-cooling (and 8GHz+ with exotic overclocking methods!) the higher Turbo Core speeds were inevitable.

We could also talk model numbers and question the need to increment from the 8000 series to the 9000 series when nothing has really changed this time around—the more sensible time to make that jump should have been when Vishera first launched, at least from the technology side of things. It would also be nice to see more of a unification of model numbers in AMD’s product stack, as we currently have FX-4000, FX-6000, FX-8000, and now FX-9000 parts all built on the Zambezi/Bulldozer and Vishera/Piledriver architectures. FX-4000 (two modules/four cores), FX-6000 (three modules/six cores), and FX-8000 (four modules/eight cores) made sense, but FX-9000 breaks that pattern. At present there are no updates being announced for the FX-4000 and FX-6000 families, but those will likely come. Will they be FX-5000 and FX-7000 parts now, or will they remain 4000/6000? If AMD were to use an Intel-style naming convention, Bulldozer was 1st Generation, Piledriver is 2nd Generation, and ahead we still have Steamroller (3rd Generation) and Excavator (4th Generation), but they’ve chosen a different route.

Whatever the name of the part, more than ever it’s important to know what you’re actually getting in terms of hardware before making a purchase—that holds true for AMD CPUs, APUs, and GPUs, but it also applies to Intel’s CPUs and NVIDIA’s GPUs, never mind the variety of ARM SoCs out there. The FX-9000 series is now AMD’s highest performance four module/eight core processor for their AM3+ platform, but it’s an incremental improvement from the FX-8000 series in the same way that the Radeon HD 8000 series is an incremental improvement on the HD 7000 GCN offerings. At least on the AMD CPU side of things we can generally go by the “higher numbers are better” idea, but that won’t always be the case.

AMD did not reveal pricing details on the new parts, and the press release says these new CPUs will “be available initially in PCs through system integrators”. They may replace the existing FX-8350 and FX-8320 eventually, but they will initially launch at a higher price depending on how AMD and their partners feel they stack up against the competition.

Read More ...

Synology DS1812+ 8-bay SMB / SOHO NAS Review

Synology recently refreshed their 8-bay SMB / SOHO NAS lineup with the DS1813+. Based on the same platform as the DS1812+ (Atom D2700), it added two extra network ports. However, due to the similarity in the underlying platform, the performance in most cases can be expected to be similar to last year's version, the DS1812+.

A number of Intel Atom D27xx-based NAS systems have been evaluated in our labs, even though we formally reviewed only one earlier this year, the LaCie 5big NAS Pro. The Thecus N4800 has made its appearance in some benchmarks presented in our SMB / SOHO NAS testbed article. We are waiting for a firmware update to complete our 5big NAS Pro review, but in the meantime we have results from our evaluation of the Synology DS1812+, which was sent to our labs last year. In our experience, Synology manages to tick all the right boxes for the perfect consumer NAS (except for the pricing factor). Does the DS1812+ carry things forward, or do we have something to complain about? Read on to find out.

Read More ...

N-trig DuoSense Pen2: Who Needs a Stylus?

With the dawn of capacitive touch displays and the iPhone, iPad, iPod Touch, etc., some might think the day of the stylus is past. N-trig has been around since 1999 working on stylus hardware, and they disagree. Just what can you do with a stylus that you can't do with capacitive touch? Well, art, legible notes, and a real signature are three things that come to mind. Other than that? Yeah, capacitive touch works pretty well, doesn't it? Read on for our thoughts on N-trig's latest stylus, the DuoSense Pen2.

Read More ...

Ask AnandTech: Tablets at Work, What are Your Experiences?

The tablet market has grown tremendously over the past few years. What started as a content consumption device for consumers has transformed into a device that has started to pull sales away from traditional notebooks. The obvious next step for tablets is towards the enterprise and business users.

As my usage models tend to be a bit unusual, when tasked with finding out how people use tablets for work my initial thought was to go to you all directly. So, how do you or could you use tablets for work? What possibilities do you see for tablet use in work going forward? Respond with your thoughts in the comments; a lot of eyes will be watching this discussion and you could definitely help shape design decisions going forward.

Read More ...

Overclocking Haswell on ASUS' 8-Series Motherboards [video]

After giving us a tour of ASUS' 8-series Haswell motherboards and its updated UEFI interface, JJ took us through overclocking a Core i7-4770K using ASUS' new software and UEFI tools. We get a good look at how auto-overclocking works, as well as what settings to pay attention to when manually overclocking Haswell.

Read More ...

Samsung Announces Galaxy S4 Zoom - 16 MP, Zoom, Makes Calls

Samsung's Galaxy Camera came out almost a year ago, and it roughly mimicked the specs of an international SGS3 but included a unique camera system and body. Although the device couldn't make phone calls, it included cellular connectivity and was arguably the best of the first, limited crop of connected cameras. After many whispers, Samsung has announced the Galaxy S4 Zoom, an updated version of its connected camera line with a display and front face emulating the SGS4 but topped with another 16 MP camera system.

Camera Emphasized Smartphone Comparison

| | Samsung Galaxy Camera (EK-GC100) | Nikon Coolpix S800c | Nokia PureView 808 | Samsung Galaxy S4 Zoom |
|---|---|---|---|---|
| CMOS Resolution | 16.3 MP | 16.0 MP | 41 MP | 16.3 MP |
| CMOS Format | 1/2.3", 1.34µm pixels | 1/2.3", 1.34µm pixels | 1/1.2", 1.4µm pixels | 1/2.3", 1.34µm pixels |
| CMOS Size | 6.17mm x 4.55mm | 6.17mm x 4.55mm | 10.67mm x 8.00mm | 6.17mm x 4.55mm |
| Lens Details | 4.1 - 86mm (22 - 447 35mm equiv) F/2.8-5.9 21x zoom + OIS | 4.5 - 45.0mm (25-250 35mm equiv) F/3.2-5.8 | 8.02mm (28mm 35mm equiv) F/2.4 | 4.3 - 43mm (24-240 mm 35mm equiv) F/3.1-F/6.3 10x zoom + OIS |
| Display | 1280 x 720 (4.8") | 854 x 480 (3.5") | 640 x 360 (4.0") | 960 x 540 (4.3") |
| SoC | Exynos 4412 (Cortex-A9MP4 at 1.4 GHz with Mali-400 MP4) | ARM Cortex A5(?) | 1.3 GHz ARM11 | 1.5 GHz Exynos 4212 |
| Storage | 8 GB + microSDXC | 1.7 GB + microSDHC | 16 GB + microSDHC | 8 GB + microSDHC |
| Video Recording | 1080p30, 480p120 | 1080p30 | 1080p30 | 1080p30 |
| OS | Android 4.1 | Android 2.3.6 | Symbian Belle | Android 4.2 |
| Connectivity | WCDMA 21.1 850/900/1900/2100, 4G, 802.11a/b/g/n with 40 MHz channels, BT 4.0, GNSS | No cellular, WiFi 802.11b/g/n(?), GPS | WCDMA 14.4 850/900/1700/1900/2100, 802.11b/g/n, BT 3.0, GPS | WCDMA 21.1 850/900/1900/2100, 4G LTE SKUs, 802.11a/b/g/n with 40 MHz channels, BT 4.0, GNSS |

Last time around Samsung made things easy by supplying the sensor size. This time it didn't, but it's easy enough to verify that the S4 Zoom is using the same 1/2.3" 16 MP sensor by going off of crop factor (the 5.64 crop factor of a 1/2.3" format sensor multiplied by the 4.3 mm focal length gives us Samsung's own published 24 mm focal length in 35mm-effective numbers). Likewise the availability of some photos published by a few websites with access to the hardware makes it easy to verify the same captured photo size of 4608 x 3456. I'm not surprised that Samsung kept the sensor the same size given the desire to get the package thinner, but I find myself wishing that this did include a larger one for better indoor and low light sensitivity. There's thankfully still OIS (Optical Image Stabilization) onboard. The change in thickness also accordingly comes with a slightly higher F/# at the widest and most telephoto points, from F/2.8 to F/3.1 wide open, and F/5.9 to F/6.3 at telephoto. There's no way around the fact that on paper the S4 Zoom is a bit of a step down compared to Galaxy Camera, but it is thinner.
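For anyone who wants to repeat the crop factor check, here is a minimal sketch. It assumes the usual definition of crop factor as the ratio of the 35mm full-frame diagonal (36mm x 24mm) to the sensor diagonal, and uses the 6.17mm x 4.55mm sensor size from the table; the function names are made up for the example.

```python
import math

# ASSUMPTION: crop factor is defined as full-frame diagonal / sensor diagonal.
FULL_FRAME_MM = (36.0, 24.0)
SENSOR_MM = (6.17, 4.55)  # 1/2.3" sensor size from the comparison table


def diagonal(width_mm, height_mm):
    """Diagonal of a rectangular sensor in millimeters."""
    return math.hypot(width_mm, height_mm)


crop_factor = diagonal(*FULL_FRAME_MM) / diagonal(*SENSOR_MM)


def equiv_35mm(focal_mm):
    """35mm-equivalent focal length for a real focal length on this sensor."""
    return focal_mm * crop_factor


print(f"crop factor: {crop_factor:.2f}")
for focal in (4.3, 43.0):  # wide and telephoto ends of the S4 Zoom lens
    print(f"{focal:>4} mm -> {equiv_35mm(focal):.0f} mm equivalent")
```

The wide end lands on the published 24 mm figure; the telephoto end comes out slightly above the published 240 mm, which is consistent with the spec sheet numbers being rounded.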

Of course, the real benefit is that it's a connected camera running Android 4.2 and including GNSS, 802.11n dual band WiFi, BT 4.0, NFC, and a 1.9 MP front facing camera. The biggest change is that unlike the Galaxy Camera, Galaxy S4 Zoom is capable of making voice calls directly. I could see myself sticking a SIM in Galaxy S4 Zoom and using it as a hybrid smartphone plus point and shoot device for sure; I just wish it was a step up over Galaxy Camera on the camera side of things. For that we'll have to wait and see if a Galaxy Camera 2 appears.

Source: Samsung

Read More ...

Computex 2013: ECS launches a Gaming Range: GANK, AGGRO and KILLSTEAL

One of the more esoteric showcases at Computex was from ECS. In recent chipset and processor launches more and more motherboard companies are jumping on the bandwagon for a range of gaming oriented motherboards (either part of the main motherboard stack or separate). ECS has sought the services of a branding agency and developed their Z87 range under the heading of GANK.

The word GANK, which was new to me, comes from the realm of MMORPGs and means ‘gang kill’. Under this range ECS will launch a few motherboards building on the Golden and Extreme ranges of the last generation.

The top board of the range will be the Z87H4-A3X Extreme, which offers an x8/x4/x4 + x4 PCIe layout for up to two-way SLI and four-way CrossfireX. Rather oddly it does not have an onboard VGA power connector, suggesting that ECS are attempting to draw 300W through the main 24-pin ATX power connector.

It is interesting to note that the Machine and Domination motherboards (for Extreme and Golden respectively) both contain Thunderbolt controllers.

The other ranges are for different segments – AGGRO for AMD and KILLSTEAL for the next Intel enthusiast range (Ivy Bridge-E) launched later this year.

For the main range of motherboards, ECS are styling them in clear, broad colors and listing them under the Essentials, Deluxe or Pro branding:

Also on the ECS stand we had the entrants for ECS’ ‘MODMEN’ competition, encouraging case modders from around the world to compete for a cash prize. They were certainly impressive!

Read More ...

Intel SSD DC S3500 Review (480GB): Part 1

We always knew that Intel would build a standard MLC version of its flagship S3700 enterprise SSD, and today we have that drive: the Intel SSD DC S3500.

Read More ...

FAA Expected to Ease Gadget Rules for Takeoffs, Landings

New draft recommendations say some devices, like e-readers, could be used the entire flight

Read More ...

Alleged "Disgruntled Microsoft Employee" Says Used Games are Killing Industry

Rant takes issue with Microsoft backing down from its most strict DRM plans for the Xbox One

Read More ...

Tesla's Battery Swap Only Takes 90 Seconds, Costs $60-$80

Tesla plans to deploy stations later this year

Read More ...

Samsung Launches ATIV Ultrabook with 3200x1800 Display, 12-Hour Battery Life

Samsung launches new portable and desktop computers

Read More ...

Vehicle Backup Camera Regulations Facing an 18-Month Delay

Backup camera regulations delayed again

Read More ...

Sponsored Post: LogMeIn Reaches Out to the DailyTech IT Superheros with a Free Trial (no credit card required!)

Editor's Note: From time to time we offer our sponsors an opportunity to share new product announcements and other information with our readers. These articles are designated as “Sponsored Posts” to differentiate them from our regular content.

Read More ...

FCC Chair Nominee Wants to Fully Legalize Phone Unlocking

Tom Wheeler turns against his former employer, the CTIA

Read More ...

Pirate Bay Co-Founder Warg is Sentenced to Two Years in the Brig

Computer specialist was found guilty of hacking crimes and illegal online money transfers

Read More ...

2014 Honda Accord Hybrid to Achieve 49 MPG City, 45 MPG Highway

The new Honda Accord Hybrid is rated at 47 mpg combined

Read More ...

Study: Mars had Atmospheric Oxygen Before Earth

Mars had materials rich in oxygen about 4 billion years ago

Read More ...

Google Threatened to Change Privacy Policy by French Watchdog or Pay Fines

Google has three months to do so

Read More ...

Researchers Make Durable Battery From Wood, Salt, and Tin Nanofoil

Durable batteries are large, but cheap -- perfect for grid storage

Read More ...

FTC Will Probe "Patent Troll" Problem, But Won't Sue Anyone

Trolls say they serve a valuable role protecting inventors and business partners

Read More ...

Officials: F-35 Joint Strike Fighter Program is on Track but Key Milestones Remain

F-35 software block and high-tech helmet still pose challenges

Read More ...

Intel Joins Alliance for Wireless Power

A4WP gains support from an industry giant

Read More ...

Yahoo Criticized over Plan to Recycle Dormant User Accounts

Critics say recycling of old accounts could lead to identity theft

Read More ...
