Besides the official reveal, there will be a live discussion with Blizzard developers Chris Metzen, Dustin Browder, and Jeff Chamberlin, along with Community Manager Kevin Johnson. Viewers will be able to participate in the discussion segment by sending questions via Twitter or Vine (#Vengeance). Hopefully this time, we'll see the engine use more than two CPU cores.

Update: If you missed the trailer, you can now watch it on YouTube.

Read More ...

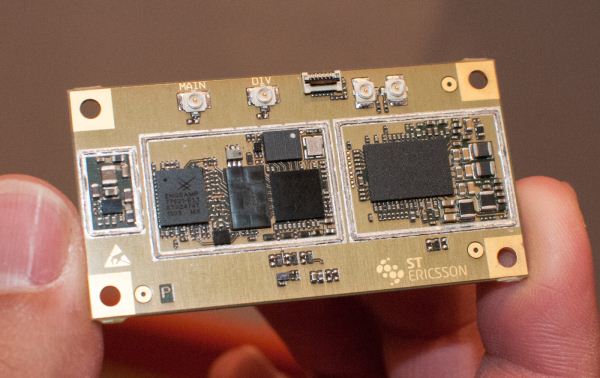

A Look at ST-Ericsson's THOR M7450 Category 4 LTE Modem with Carrier Aggregation

Earlier this morning we stopped by ST-Ericsson to talk about their SoCs and modem platforms, and took a look at their new Thor M7450 baseband, which supports UE Category 4 along with 10 MHz + 10 MHz carrier aggregation to realize full category 3 and 4 speeds. M7450 is built on a 28nm LP bulk process, though I'm told that there will be future parts also supporting FD-SOI, similar to the new L8580. This is the same IP block integrated into ST-E's L8580 SoC, and it includes support for both TDD and FDD LTE alongside WCDMA/HSPA+, TD-SCDMA, and GSM: the whole 3GPP suite. ST-E believes its modem architecture in M7450 is very different from traditional designs, as it leans more towards being an SDR (software-defined radio) than most.

We had a chance to see M7450 demonstrating both UE Category 4 speeds and carrier aggregation on a number of different band combinations, interestingly enough 4 + 17 (AT&T), 4 + 13 (VZW), and 2 + 17 (AT&T) in addition to a few others. M7450 of course supports 5+10 and 5+5 aggregation as well.
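To give a rough feel for why 10 + 10 MHz aggregation matters for Category 4, here is a back-of-the-envelope sketch in Python. It assumes peak downlink rate scales linearly with aggregated bandwidth and is capped at roughly 150 Mbps for 20 MHz; that anchor and the function name `estimated_peak_mbps` are illustrative assumptions, not figures from ST-Ericsson.

```python
# Rough estimate only: assumes peak downlink throughput scales linearly with
# total aggregated bandwidth, anchored at ~150 Mbps for 20 MHz (an assumed,
# approximate Category 4 ceiling). Real rates depend on MIMO, coding, and load.
CAT4_PEAK_MBPS = 150.0
FULL_BANDWIDTH_MHZ = 20.0


def estimated_peak_mbps(carriers_mhz):
    """Estimate peak downlink rate for a tuple of aggregated carrier widths."""
    total_mhz = sum(carriers_mhz)
    return min(CAT4_PEAK_MBPS, CAT4_PEAK_MBPS * total_mhz / FULL_BANDWIDTH_MHZ)


# The three aggregation modes mentioned in the text.
for combo in [(10, 10), (5, 10), (5, 5)]:
    print(combo, estimated_peak_mbps(combo), "Mbps (estimate)")
```

Under these assumptions only the 10 + 10 MHz combination reaches the full Category 4 ceiling; the narrower combinations fall proportionally short.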

Anand and I also got a chance to check out M7450 doing a VoLTE voice over IMS call running AMR-WB on the platform, which touts power consumption on a VoLTE call at levels equal to or less than a WCDMA call. There was a visualization showing the platform performance on the current VoLTE call versus WCDMA (for the same platform) which was at the same level or below basically the entire time. M7450 is currently sampling and expected to be in devices by the end of the year.

Read More ...

Rosewill Line-M Case Review: Wherefore Art Thou Micro-ATX?

Vendors are always very quick to send us their biggest, best, and brightest. Rosewill's own top-selling Blackhawk Ultra has been with us for a little while, but while we rework our testbed for high end cases, we thought it might be worth looking at one of the workhorses in Rosewill's stable. Looking at enthusiast kit is fun, but it's interesting to see what's floating around in the budget sector, too, as many of us are often on the hook to build and maintain desktops for family and friends. With that in mind, we requested the micro-ATX Rosewill Line-M.

While the Line-M is worth checking out in its own right as a compact, $55 case with USB 3.0 connectivity, it also highlights a disparity in the current industry: Micro-ATX motherboards are still incredibly common, but case designs are stratifying into two extremes. Full ATX and larger cases are going stronger than ever, but the smaller case designs have largely been usurped by Mini-ITX. There's still a place in the world for a good Micro-ATX client, though, and we think the Line-M might just help deliver it.

Read More ...

How the HTC One's Camera Bucks the Trend in Smartphone Imaging

Now that we’ve seen the HTC One camera announcement, I think it’s worth going over why this is something very exciting from an imaging standpoint, and also a huge risk when it comes to messaging it properly to consumers.

With the One, HTC has chosen to go against the prevailing trend for this upcoming generation of devices by going to a 1/3.0" CMOS with 2.0 micron pixels, for a resulting 4 MP (2688 × 1520) 16:9 native image size. That’s right, the HTC One is 16:9 natively, not 4:3. In addition, the HTC One includes optical image stabilization on two axes, with +/- 1 degree of accommodation and a sampling/correction rate of 2 kHz on the onboard gyro. Just like the previous HTC cameras, the One has an impressively fast F/2.0 aperture and 5P (5 plastic element) optical system. From what I can tell, this is roughly the same 3.82 mm (~28mm in 35mm effective) focal length, slightly different from the 3.63 mm of the previous One camera. HTC has also included a new generation of ImageChip 2 ISP, though this is of course still used in conjunction with the ISP onboard the SoC, and HTC claims it’s able to do full lens shading correction for vignetting and color, in addition to even better noise reduction and realtime HDR video. Autofocus is around 200ms for a full scan; I was always impressed with the AF speed of the previous cameras, and this is even faster. When it comes to video, HTC apparently has taken some feedback to heart and finally maxed out the encoder capabilities for the APQ8064/8064Pro/8960 SoC, which is 20 Mbps H.264 high profile.
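As a quick sanity check on those numbers, the following Python sketch derives the pixel count and active-area dimensions from the quoted resolution and pixel pitch. It assumes the full 2688 × 1520 array is the active area with no border pixels, which is a simplification; `sensor_geometry` is a name made up for this illustration.

```python
import math

# Figures quoted in the text for the HTC One sensor.
WIDTH_PX, HEIGHT_PX = 2688, 1520
PIXEL_PITCH_UM = 2.0


def sensor_geometry(width_px, height_px, pitch_um):
    """Return (megapixels, width_mm, height_mm, diagonal_mm) of the active area.

    Assumes square pixels and no inactive border, so this is an estimate.
    """
    megapixels = width_px * height_px / 1e6
    width_mm = width_px * pitch_um / 1000.0
    height_mm = height_px * pitch_um / 1000.0
    diagonal_mm = math.hypot(width_mm, height_mm)
    return megapixels, width_mm, height_mm, diagonal_mm


mp, w_mm, h_mm, d_mm = sensor_geometry(WIDTH_PX, HEIGHT_PX, PIXEL_PITCH_UM)
print(f"{mp:.2f} MP, {w_mm:.3f} mm x {h_mm:.3f} mm, diagonal {d_mm:.3f} mm")
print(f"aspect ratio {WIDTH_PX / HEIGHT_PX:.3f} vs 16:9 = {16 / 9:.3f}")
```

The resulting diagonal of a little over 6 mm is in the range usually associated with 1/3"-type sensors, which lines up with the 1/3.0" figure.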

The previous generation of high end smartphones shipped 1.4 micron pixels and a CMOS size of generally 1/3.2" for 8 MP effective resolution. This year it seems as though most OEMs will go to 1.1 micron pixels on the same 1/3.2" size CMOS and thus get 13 MP of resolution, or choose to stay at 8 MP and absorb the difference with a smaller 1/4" CMOS and thinner optical system. This would give HTC an even bigger difference (1.1 micron vs 2.0 micron) in pixel size and thus sensitivity. It remains to be seen whether other major OEMs will also include OIS or faster optical systems this generation. I suspect we’ll see faster (lower F/#) systems from Samsung this time; some rumored images showed EXIF data of F/2.2 but nothing else insightful. Of course, Nokia is the other major OEM pushing camera, and although even they haven’t quite gone backwards in pixel size yet, they’ve effectively been in a different category for a while. We’ve already seen some handset makers go to binning (combining a 2x2 grid of pixels into one effective larger pixel), but this really only helps increase SNR and average out some noise rather than fundamentally increase sensitivity.
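The pixel-size arithmetic above can be sketched in a few lines of Python. The function names are made up for this illustration, and the binning gain assumes independent, equal noise in each pixel, which is an idealization rather than a measured property of any sensor.

```python
import math


def scaled_megapixels(base_mp, base_pitch_um, new_pitch_um):
    """Resolution on the same sensor area after changing the pixel pitch."""
    return base_mp * (base_pitch_um / new_pitch_um) ** 2


def pixel_area_ratio(pitch_a_um, pitch_b_um):
    """How many times more area a pixel of pitch A has than one of pitch B."""
    return (pitch_a_um / pitch_b_um) ** 2


def binning_snr_gain(pixels_binned):
    """Idealized SNR gain from averaging N pixels with independent noise."""
    return math.sqrt(pixels_binned)


# 8 MP at 1.4 micron, shrunk to 1.1 micron on the same 1/3.2" sensor.
print(f"{scaled_megapixels(8, 1.4, 1.1):.1f} MP")
# Light-gathering area per pixel: 2.0 micron vs 1.1 micron.
print(f"{pixel_area_ratio(2.0, 1.1):.2f}x area per pixel")
# 2x2 binning averages four pixels.
print(f"{binning_snr_gain(4):.1f}x SNR (idealized)")
```

Shrinking from 1.4 to 1.1 micron on the same sensor lands at roughly 13 MP, matching the text, while a 2.0 micron pixel has a bit more than three times the area of a 1.1 micron one.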

The side by sides that I took with the HTC One alongside a One X so far have been impressive, even without final tuning for the HTC One. I don’t have any sample images I can share, but what I have seen has gotten me excited about the HTC One in a way that only a few other devices (PureView 808, N8, HTC One X) have so far. Both the preview and captured image were visibly brighter and had more dynamic range in the highlights and shadows. So far adding HDR to smartphones has focused not so much on making images very HDR-ey but rather on recovering some dynamic range and making smartphone images look more like what you’d expect from a higher end camera. Moreover, not having to use flash in low light situations is a real positive, since flash currently adds a false color cast if you’re using a device with an LED.

Read More ...

Understanding Camera Optics & Smartphone Camera Trends, A Presentation by Brian Klug

Recently I was asked to give a presentation about smartphone imaging and optics at a small industry event, and given my background I was more than willing to comply. At the time, there was no particular product or announcement that I crafted this presentation for, but I thought it worth sharing beyond just the event itself, especially in the recent context of the HTC One. The idea of the presentation was to provide a high level primer on both camera optics and general smartphone imaging trends, and to catalyze some discussion.

For readers here I think this is a great primer for what the state of things looks like if you’re not paying super close attention to smartphone cameras, and also the imaging chain at a high level on a mobile device.

Read on for the full presentation!

Read More ...

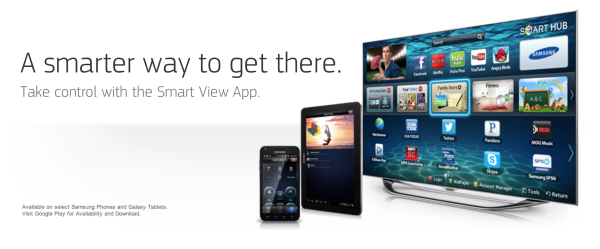

Samsung's TV Discovery Service Enables TV to Smartphone/Tablet Streaming

I'm not entirely sure I understand the point of MWC this year if everyone is going to pre-empt the show with announcements of their own (or in the case of the Galaxy S 4, wait until after the show to announce). Samsung does join the list of companies that are unveiling announcements prior to the show with the disclosure of its TV Discovery service.

Samsung is in a very unique position in that it is not only a SoC, NAND, display and DRAM maker, but also a significant player in the smartphone, tablet and TV space. If any company is well positioned to understand the needs of the market, it's Samsung.

With its fingers in many pots, Samsung quickly recognized a strong relationship between smartphone/tablet usage and simply watching TV. This led to Samsung outfitting many of its devices with an IR emitter, as with the Galaxy Note 10.1. If your tablet is out while you're watching TV, you might as well use your tablet to control your TV as well.

To increase available synergies between smartphone/tablet and TV, Samsung launched its TV Discovery service. Samsung's TV Discovery is a combination of software and hardware that simply lets you get a good feel for what content you have available to watch on TV as well as aggregated from online streaming sources such as Netflix and Blockbuster. Like many other attempts at TV/PC or TV/gadget convergence, TV Discovery attempts to solve the problem of having tons of content spread across many services by presenting it all in a single app on your smartphone or tablet.

TV Discovery will also have a personalization component, suggesting content for you to watch based on your preferences.

The software side isn't anything super unique, as we've seen many attempts at this before. I don't know that another software aggregation service is going to dramatically change anything, but before we reach perfection there are always many iterations of attempts that we have to live through.

Devices equipped with an IR emitter will be able to serve as universal remote controls, just as before.

What is most unique about Samsung's TV Discovery service however is the integration with Samsung TVs. With all 2013 SMART TVs, Samsung is promising the ability to stream content from your TV Discovery enabled smartphone/tablet to your TV and vice versa. Getting content from your smartphone or tablet onto your TV is nothing new, but we haven't had a good way of getting TV content onto your mobile device. Obviously you'll need a Samsung TV for this to work, as well as the Samsung smartphone/tablet, but it's an intriguing leverage of Samsung's broad device ecosystem.

You can expect to see the TV Discovery app ship on 2013 Samsung mobile devices as well as 2013 Samsung SMART TVs.

Read More ...

GeForce Titan Pre-Order Available

This week saw the launch of NVIDIA's latest and greatest single GPU consumer graphics card, the GeForce Titan. Priced at a cool grand ($1000), the Titan isn't the sort of video card that every hobbyist and gamer can buy on a whim. Instead, NVIDIA is positioning it as an entry-level compute card (e.g. it's about one third the price of a Tesla K20), or an ultra-high-end gaming card for those who simply must have the best. We expect to see quite a few boutiques selling systems equipped with Titan, and indeed we've seen press releases from all the usual suspects.

This is as good a place as any to list those, so here's a short list, with estimated pricing based on a custom configured PC at each vendor. (I'm sure there are other vendors selling Titan as well; this is by no means intended to be a comprehensive list.)

Obviously that's a higher cost per GPU at every one of the above vendors, and if you've already got a fast system you probably aren't looking to upgrade to a completely new PC. For those looking to buy a Titan GPU on its own, Newegg is now listing a pre-order of the ASUS Titan at the $999 MSRP. The current release date is listed as February 28, so next Thursday. We expect EVGA and some other GPU vendors to also show up some time in the next week, and we'll update this list as appropriate.

Read More ...

The AnandTech Podcast: Episode 17

We managed to get in one more Podcast before Brian and I leave for MWC 2013 today. With the number of major announcements that happened in the past week, we pretty much had to find a way to make this happen. On the list for discussion today are the new HTC One, NVIDIA's GeForce GTX Titan, Tegra 4i and of course the Sony PlayStation 4. Enjoy!

The AnandTech Podcast - Episode 17

featuring Anand Shimpi, Brian Klug & Dr. Ian Cutress

iTunes

RSS - mp3, m4a

Direct Links - mp3, m4a

Total Time: 1 hour 9 minutes

Outline - hh:mm

HTC One - 00:00

NVIDIA's GeForce GTX Titan - 00:20

NVIDIA's Tegra 4i - 00:42

Sony's PlayStation 4 - 00:52

As always, comments are welcome and appreciated.

Read More ...

Dell XPS 12 Review: A Jack of All Trades Flipscreen Ultrabook

Dell’s XPS line is for their premium consumer offerings, with some overlap between the consumer and professional users gravitating towards these systems. The XPS 12 Duo carries that “catering to a wider audience” mentality a step further with a flip screen display that allows you to transition between standard notebook and tablet modes.

This is technically a second-generation Ultrabook, but since Dell, unlike some other companies, more or less skipped the first generation, we still expect more from Dell this time around. The XPS 13 Ultrabook looked nice, though we had some concerns with the temperatures we could hit under stress testing and the resulting noise. Here, Dell has pulled out all the stops and gone with a 12.5” 1080p IPS touchscreen. It’s definitely one of the more interesting designs to come out of late, but just how well does it work in practice?

Read More ...

NVIDIA’s GeForce GTX Titan Review, Part 2: Titan's Performance Unveiled

Earlier this week NVIDIA announced their new top-end single-GPU consumer card, the GeForce GTX Titan. Built on NVIDIA’s GK110 and named after the same supercomputer that GK110 first powered, the GTX Titan is in many ways the apex of the Kepler family of GPUs first introduced nearly one year ago. With anywhere between 25% and 50% more resources than NVIDIA’s GeForce GTX 680, Titan is intended to be the ultimate single-GPU card for this generation.

Meanwhile with the launch of Titan NVIDIA has repositioned their traditional video card lineup to change who the ultimate video card will be chasing. With a price of $999 Titan is decidedly out of the price/performance race; Titan will be a luxury product, geared towards a mix of low-end compute customers and ultra-enthusiasts who can justify buying a luxury product to get their hands on a GK110 video card. So in many ways this is a different kind of launch than any other high performance consumer card that has come before it.

So where does that leave us? On Tuesday we could talk about Titan’s specifications, construction, architecture, and features. But the all-important performance data would be withheld another two days until today. So with Thursday finally upon us, let’s finish our look at Titan with our collected performance data and our analysis.

Read More ...

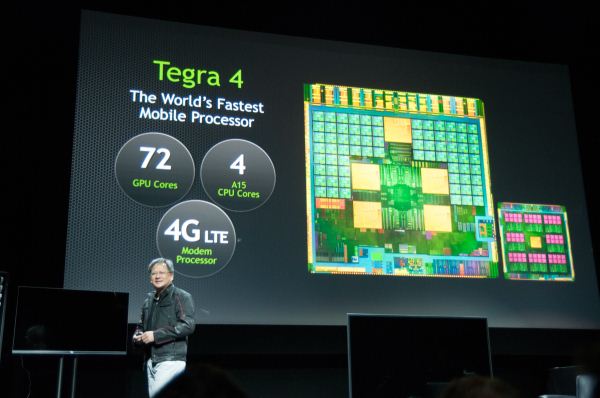

ZTE to Build Tegra 4 Smartphone, Working on i500 Based Design As Well

ZTE just announced that it would be building a Tegra 4 based smartphone for the China market in the first half of 2013. Given NVIDIA's recent statements about Tegra 4 shipping to customers in Q2, I would expect that this is going to be very close to the middle of the year. ZTE didn't release any specs other than to say that it's building a Tegra 4 phone.

Separately, ZTE and NVIDIA are also working on another phone that uses NVIDIA's i500 LTE baseband.

Read More ...

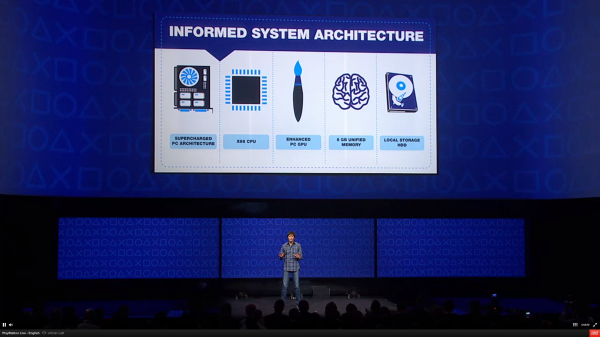

Sony Announces PlayStation 4: PC Hardware Inside

Sony just announced the PlayStation 4, along with some high level system specifications. The high level specs are what we've heard for quite some time:

Details of the CPU aren't known at this point (8-cores could imply a Piledriver derived architecture, or 8 smaller Jaguar cores—the latter being more likely), but either way this will be a big step forward over the PowerPC based general purpose cores on Cell from the previous generation. I wouldn't be too put off by the lack of Intel silicon here, it's still a lot faster than what we had before and at this level price matters more than peak performance. The Intel performance advantage would have to be much larger to dramatically impact console performance. If we're talking about Jaguar cores, then there's a bigger concern long term from a single threaded performance standpoint.

Update: I've confirmed that there are 8 Jaguar based AMD CPU cores inside the PS4's APU. The CPU + GPU are on a single die. Jaguar will still likely have better performance than the PS3/Xbox 360's PowerPC cores, and it should be faster than anything ARM based out today, but there's not huge headroom going forward. While I'm happier with Sony's (and MS') CPU selection this time around, I always hoped someone would take CPU performance in a console a bit more seriously. Given the choice between spending transistors on the CPU vs. GPU, I understand that the GPU wins every time in a console—I'm just always an advocate for wanting more of both. I realize I never wrote up a piece on AMD's Jaguar architecture, so I'll likely be doing that in the not too distant future.

The choice of 8 cores is somewhat unique. Jaguar's default compute unit is a quad-core machine with a large shared L2 cache, it's likely that AMD placed two of these together for the PlayStation 4. The last generation of consoles saw a march towards heavily threaded machines, so it's no surprise that AMD/Sony want to continue the trend here. Clock speed is unknown, but Jaguar was good for a mild increase over its predecessor Bobcat. Given the large monolithic die, AMD and Sony may not have wanted to push frequency as high as possible in order to keep yields up and power down. While I still expect CPU performance to move forward in this generation of consoles, I was reminded of the fact that the PowerPC cores in the previous generation ran at very high frequencies. The IPC gains afforded by Jaguar have to be significant in order to make up for what will likely be a lower clock speed.

We don't know specifics of the GPU, but with it approaching 2 TFLOPS we're looking at a level of performance somewhere between a Radeon HD 7850 and 7870. Update: Sony has confirmed the actual performance of the PlayStation 4's GPU as 1.84 TFLOPS. Sony claims the GPU features 18 compute units, which if this is GCN based we'd be looking at 1152 SPs and 72 texture units. It's unclear how custom the GPU is however, so we'll have to wait for additional information to really know for sure. The highest end PC GPUs are already faster than this, but the PS4's GPU is a lot faster than the PS3's RSX which was derived from NVIDIA's G70 architecture (used in the GeForce 7800 GTX, for example). I'm quite pleased with the promised level of GPU performance with the PS4. There are obvious power and cost constraints that would keep AMD/Sony from going even higher here, but this should be a good leap forward from current gen consoles.
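The SP and texture unit counts follow directly from the compute unit count if the GPU is GCN based. The Python sketch below works through that arithmetic and also backs out the clock speed implied by 1.84 TFLOPS, assuming 64 SPs and 4 texture units per compute unit and 2 FLOPs (one fused multiply-add) per SP per clock. The implied clock is a derived estimate under those assumptions, not a figure Sony has stated here.

```python
# Assumed GCN per-compute-unit figures; the PS4's GPU may be customized.
SPS_PER_CU = 64
TEXTURE_UNITS_PER_CU = 4
FLOPS_PER_SP_PER_CLOCK = 2  # one fused multiply-add counts as two FLOPs


def gcn_resources(compute_units):
    """Return (stream processors, texture units) for a GCN-style GPU."""
    return compute_units * SPS_PER_CU, compute_units * TEXTURE_UNITS_PER_CU


def implied_clock_mhz(tflops, stream_processors):
    """Clock speed that would be needed to reach the given peak TFLOPS."""
    flops_per_clock = stream_processors * FLOPS_PER_SP_PER_CLOCK
    return tflops * 1e12 / flops_per_clock / 1e6


sps, tmus = gcn_resources(18)
print(sps, "SPs,", tmus, "texture units")
print(f"implied clock: {implied_clock_mhz(1.84, sps):.0f} MHz (estimate)")
```

With 18 compute units this reproduces the 1152 SPs and 72 texture units quoted above, and the 1.84 TFLOPS figure would correspond to a clock of roughly 800 MHz.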

Outfitting the PS4 with 8GB of RAM will be great for developers, and using high-speed GDDR5 will help ensure the GPU isn't bandwidth starved. Sony promised around 176GB/s of memory bandwidth for the PS4. The lack of solid state storage isn't surprising. Hard drives still offer a dramatic advantage in cost per GB vs. an SSD. Now if it's user replaceable with an SSD that would be a nice compromise.
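For context on where a figure like 176GB/s can come from, memory bandwidth is simply bus width times per-pin data rate. The Python sketch below uses a 256-bit bus and 5.5 Gbps per pin purely as illustrative assumptions that happen to produce that number; neither value comes from Sony's announcement.

```python
def memory_bandwidth_gbs(bus_width_bits, data_rate_gbps_per_pin):
    """Peak memory bandwidth in GB/s from bus width and per-pin data rate."""
    return bus_width_bits * data_rate_gbps_per_pin / 8.0


# Assumed configuration for illustration only (not confirmed by the source).
ASSUMED_BUS_WIDTH_BITS = 256
ASSUMED_DATA_RATE_GBPS = 5.5

print(memory_bandwidth_gbs(ASSUMED_BUS_WIDTH_BITS, ASSUMED_DATA_RATE_GBPS), "GB/s")
```

Other combinations of bus width and data rate could reach the same total, so this shows the relationship rather than the actual configuration.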

Leveraging Gaikai's cloud gaming technology, the PS4 will be able to act as a game server and stream the video output to a PS Vita, wirelessly. This sounds a lot like what NVIDIA is doing with Project Shield and your NVIDIA powered gaming PC. Sony referenced dedicated video encode/decode hardware that allows you to instantaneously record and share screenshots/video of gameplay. I suspect this same hardware is used in streaming your game to a PS Vita.

Backwards compatibility with PS3 games isn't guaranteed and instead will leverage cloud gaming to stream older content to the box. There's some sort of a dedicated background processor that handles uploads and downloads, and even handles updates in the background while the system is off. The PS4 also supports instant suspend/resume.

The new box heavily leverages PC hardware, which is something we're expecting from the next Xbox as well. It's interesting that this is effectively how Microsoft entered the console space back in 2001 with the original Xbox, and now both Sony and MS have returned to that philosophy with their next gen consoles in 2013. The PlayStation 4 will be available this holiday season.

I'm trying to get more details on the CPU and GPU architectures and will update as soon as I have more info.

Read More ...

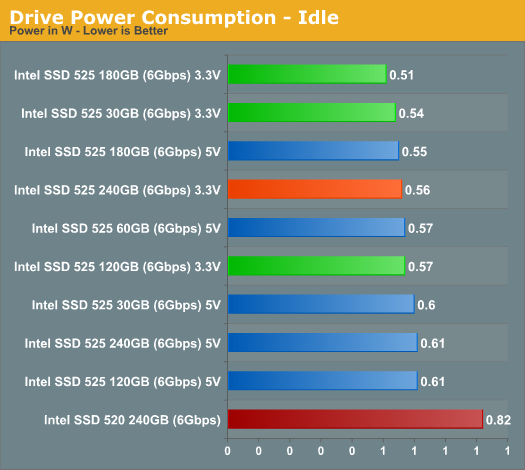

An Update on Intel's SSD 525 Power Consumption

Intel's SSD 525 is the mSATA version of last year's SF-2281 based Intel SSD 520. The drive isn't just physically smaller, but it also features a new version of the Intel/SandForce firmware with a bunch of bug fixes as well as some performance and power improvements. Among the improvements is a tangible reduction in idle power consumption. However in our testing we noticed higher power consumption than the 520 under load. Intel hadn't seen this internally, so we went to work investigating why there was a discrepancy.

The SATA power connector can supply power to a drive on a combination of one or more power rails: 3.3V, 5V or 12V. Almost all 2.5" desktop SSDs draw power on the 5V rail exclusively, so our power testing involves using a current meter inline with the 5V rail. The mSATA to SATA adapter we use converts 5V to 3.3V for use by the mSATA drive, however some power is lost in the process. In order to truly characterize the 525's power we had to supply 3.3V directly to the drive and measure at our power source. The modified mSATA adapter above allowed us to do just that.

Idle power consumption didn't change much:

Note that the 525 still holds a tremendous advantage over the 2.5" 520 in idle power consumption. Given the Ultrabook/SFF PC/NUC target for the 525, driving idle power even lower makes sense.

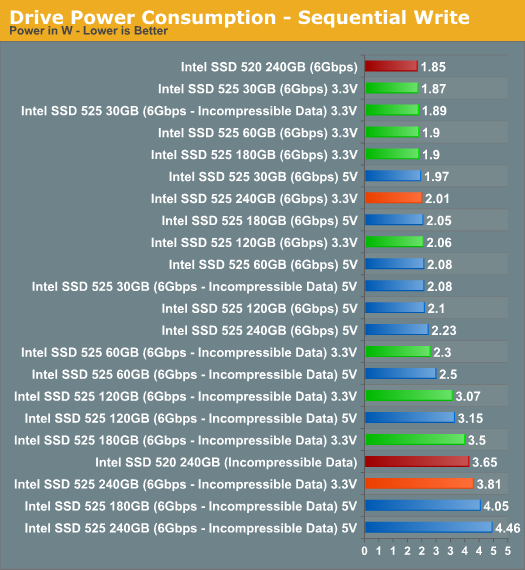

Under load there's a somewhat more appreciable difference in power when we measure directly off of a 3.3V supply to the 525:

Our 520 still manages to be lower power than the 525, however it's entirely possible that we simply had a better yielding NAND + controller combination back then. There's about a 10 - 15% reduction in power compared to measuring the 525 at the mSATA adapter's 5V rail with the 240GB model.
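The difference between the two measurement points comes down to power being voltage times current at whichever rail you meter. The Python sketch below illustrates the comparison with made-up current readings chosen to fall inside the 10 - 15% range mentioned above; they are hypothetical values, not our measured data.

```python
def power_watts(voltage_v, current_a):
    """Electrical power drawn at a rail: P = V * I."""
    return voltage_v * current_a


def percent_reduction(before_w, after_w):
    """Percentage drop going from one power reading to another."""
    return 100.0 * (before_w - after_w) / before_w


# Hypothetical readings for illustration only.
# Metering the adapter's 5V input includes the 5V -> 3.3V conversion loss.
at_adapter_w = power_watts(5.0, 0.200)
# Metering a direct 3.3V supply captures only what the drive itself draws.
at_drive_w = power_watts(3.3, 0.265)

print(f"adapter input: {at_adapter_w:.3f} W, drive only: {at_drive_w:.4f} W")
print(f"reduction: {percent_reduction(at_adapter_w, at_drive_w):.2f}%")
```

The gap between the two readings is the power dissipated in the adapter's voltage conversion, which is why measuring at the 3.3V supply gives the more accurate picture of the drive.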

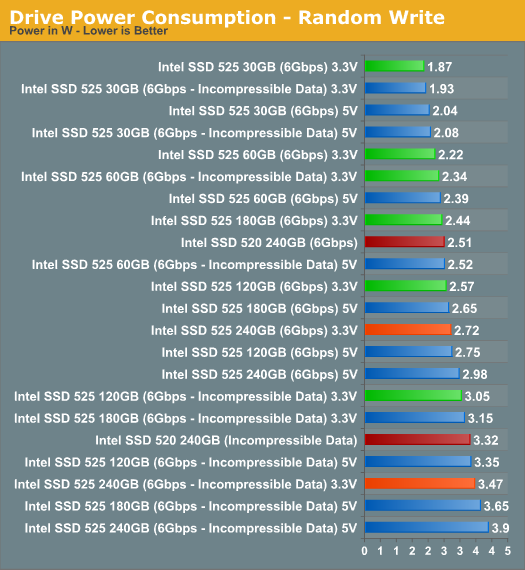

The story isn't any different in our random write test. Measuring power sent directly to the 525 narrows the gap between it and our old 520 sample. Our original 520 still seems to hold a small active power advantage over our 525 samples, but with only an early sample to compare to, it's impossible to say if the same would be true for a newer/different drive.

I've updated Bench to include the latest power results.

Read More ...
